Bredemarket helps identity/biometric firms.

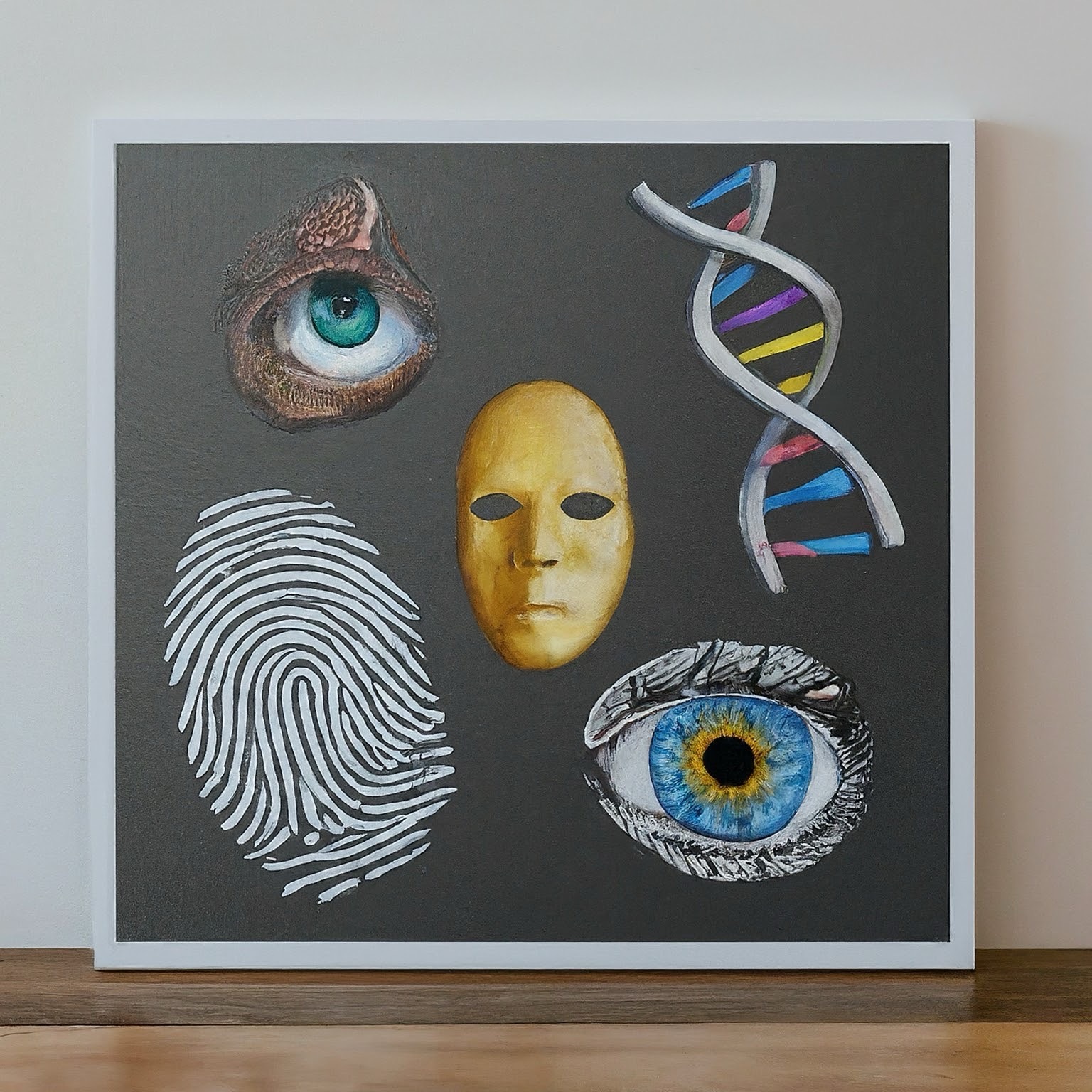

- Finger, face, iris, voice, DNA, ID documents, geolocation, and even knowledge.

- Content-Proposal-Analysis. (Bredemarket’s “CPA.”)

Don’t miss the boat.

Augment your team with Bredemarket.

Identity/biometrics/technology marketing and writing services

Biometric product marketing expert.

Modalities: Finger, face, iris, voice, DNA.

Plus other factors: IDs, data.

John E. Bredehoft has worked for Incode, IDEMIA, MorphoTrak, Motorola, Printrak, and a host of Bredemarket clients.

(Some images AI-generated by Google Gemini.)

(Part of the biometric product marketing expert series)

When marketing your facial recognition product (or any product), you need to pay attention to your positioning and messaging. This includes developing the answers to why, how, and what questions. But your positioning and your resulting messaging are deeply influenced by the characteristics of your product.

There are hundreds of facial recognition products on the market that are used for identity verification, authentication, crime solving (but ONLY as an investigative lead), and other purposes.

Some of these solutions ONLY use face as a biometric modality. Others use additional biometric modalities.

Your positioning depends upon whether your solution only uses face, or uses other factors such as voice.

Of course, if you initially only offer a face solution and then offer a second biometric, you’ll have to rewrite all your material. “You know how we said that face is great? Well, face and gait are even greater!”

It’s no secret that I am NOT a fan of the “passwords are dead” movement.

It seems that many of the people who are awaiting the long-delayed death of the password think that biometrics is the magic solution that will completely replace passwords.

For this reason, your company might have decided to use biometrics as your sole factor of identity verification and authentication.

Or perhaps your company took a different approach, and believes that multiple factors—perhaps all five factors—are required to truly verify and/or authenticate an individual. Use some combination of biometrics, secure documents such as driver’s licenses, geolocation, “something you do” such as a particular swiping pattern, and even (horrors!) knowledge-based authentication such as passwords or PINs.

This naturally shapes your positioning and messaging.

So position yourself however you need to position yourself. Again, be prepared to change if your single factor solution adopts a second factor.

Every company has its own way of approaching a problem, and your company is no different. As you prepare to market your products, survey your product, your customers, and your prospects and choose the correct positioning (and messaging) for your own circumstances.

And if you need help with biometric positioning and messaging, feel free to contact the biometric product marketing expert, John E. Bredehoft. (Full-time employment opportunities via LinkedIn, consulting opportunities via Bredemarket.)

In the meantime, take care of yourself, and each other.

I’ve talked about synthetic identity fraud a lot in the Bredemarket blog over the past several years. I’ll summarize a few examples in this post, talk about how to fight synthetic identity fraud, and wrap up by suggesting how to get the word out about your anti-synthetic identity solution.

But first let’s look at a few examples of synthetic identity.

As far back as December 2020, I discussed Kris’ Rides’ encounter with a synthetic employee from a company with a number of synthetic employees (many of whom were young females).

More recently, I discussed attempts to create synthetic identities using gummy fingers and fake/fraudulent voices. The topic of deepfakes continues to be hot across all biometric modalities.

I shared a video I created about synthetic identities and their use to create fraudulent financial identities.

I even discussed Kelly Shepherd, the fake vegan mom created by HBO executive Casey Bloys to respond to HBO critics.

And that’s just some of what Bredemarket has written about synthetic identity. You can find the complete list of my synthetic identity posts here.

It isn’t enough to talk about the fact that synthetic identities exist: sometimes for innocent reasons, sometimes for outright fraudulent reasons.

You need to communicate how to fight synthetic identities, especially if your firm offers an anti-fraud solution.

Here are four ways to fight synthetic identities:

If you conduct all four tests, then you have used multiple factors of authentication to confirm that the person is who they say they are. If the identity is synthetic, chances are the purported person will fail at least one of these tests.

If you fight synthetic identity fraud, you should let people know about your solution.

Perhaps you can use Bredemarket, the identity content marketing expert. I work with you (and I have worked with others) to ensure that your content meets your awareness, consideration, and/or conversion goals.

How can I work with you to communicate your firm’s anti-synthetic identity message? For example, I can apply my identity/biometric blog expert knowledge to create an identity blog post for your firm. Blog posts provide an immediate business impact to your firm, and are easy to reshare and repurpose. For B2B needs, LinkedIn articles provide similar benefits.

If Bredemarket can help your firm convey your message about synthetic identity, let’s talk.

(Part of the biometric product marketing expert series)

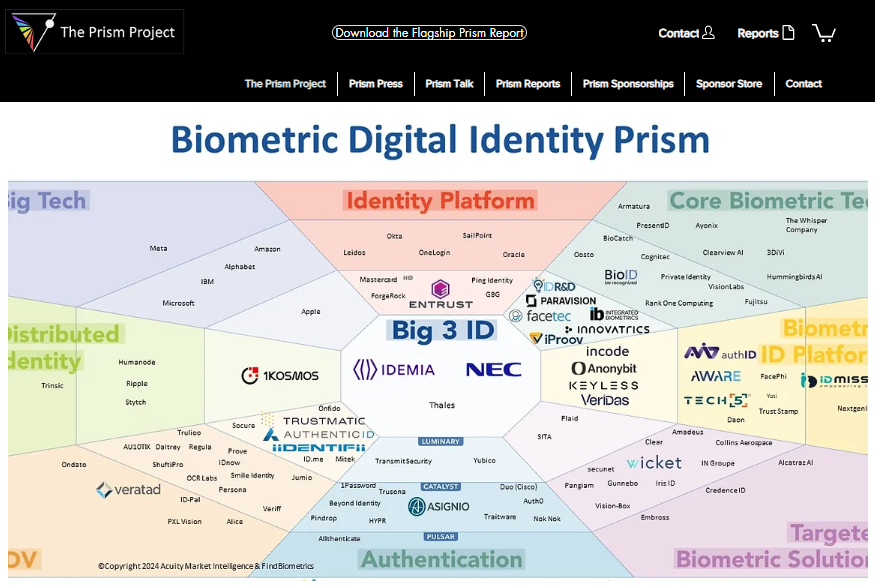

There are a LOT of biometric companies out there.

With over 100 firms in the biometric industry, their offerings are going to naturally differ—even if all the firms are TRYING to copy each other and offer “me too” solutions.

I’ve worked for over a dozen biometric firms as an employee or independent contractor, and I’ve analyzed over 80 biometric firms in competitive intelligence exercises, so I’m well aware of the vast implementation differences between the biometric offerings.

Some of the implementation differences provoke vehement disagreements between biometric firms regarding which choice is correct. Yes, we FIGHT.

Let’s look at three (out of many) of these implementation differences and see how they affect YOUR company’s content marketing efforts—whether you’re engaging in identity blog post writing, or some other content marketing activity.

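Firms that develop biometric solutions make (or should make) the following choices when implementing their solutions.

- Presentation attack detection: active or passive liveness detection?

- Age assurance: age verification or age estimation?

- Voice: is the voice biometric trustworthy, or is it too easily spoofed?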

I will address each of these questions in turn, highlighting the pros and cons of each implementation choice. After that, we’ll see how this affects your firm’s content marketing.

Back in June 2023 I defined what a “presentation attack” is.

(I)nstead of capturing a true biometric from a person, the biometric sensor is fooled into capturing a fake biometric: an artificial finger, a face with a mask on it, or a face on a video screen (rather than a face of a live person).

This tomfoolery is called a “presentation attack” (because you’re attacking security with a fake presentation).

Then I talked about standards and testing.

But the standards folks have developed ISO/IEC 30107-3:2023, Information technology — Biometric presentation attack detection — Part 3: Testing and reporting.

And an organization called iBeta is one of the testing facilities authorized to test in accordance with the standard and to determine whether a biometric reader can detect the “liveness” of a biometric sample.

(Friends, I’m not going to get into passive liveness and active liveness. That’s best saved for another day.)

Well…that day is today.

Now I could cite a firm using active liveness detection to say why it’s great, or I could cite a firm using passive liveness detection to say why it’s great. But perhaps the most balanced assessment comes from facia, which offers both types of liveness detection. How does facia define the two types of liveness detection?

Active liveness detection, as the name suggests, requires some sort of activity from the user. If a system is unable to detect liveness, it will ask the user to perform some specific actions such as nodding, blinking or any other facial movement. This allows the system to detect natural movements and separate it from a system trying to mimic a human being….

Passive liveness detection operates discreetly in the background, requiring no explicit action from the user. The system’s artificial intelligence continuously analyses facial movements, depth, texture, and other biometric indicators to detect an individual’s liveness.

Briefly, the pros and cons of the two methods are as follows:

So in truth the choice is up to each firm. I’ve worked with firms that used both liveness detection methods, and while I’ve spent most of my time with passive implementations, the active ones can work also.

A perfect wishy-washy statement that will get BOTH sides angry at me. (Except perhaps for companies like facia that use both.)

There are a lot of applications for age assurance, or knowing how old a person is. These include smoking tobacco or marijuana, buying firearms, driving a car, drinking alcohol, gambling, viewing adult content, using social media, or buying garden implements.

If you need to know a person’s age, you can ask them. Because people never lie.

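Well, maybe they do. There are two better age assurance methods:

- Age verification, which checks the age recorded on a government-issued ID such as a driver’s license.

- Age estimation, which estimates a person’s age from a biometric such as the face.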

I’ve gone back and forth on this. As I previously mentioned, my employment history includes time with a firm that produces driver’s licenses for the majority of U.S. states. And back when that firm was providing my paycheck, I was financially incentivized to champion age verification based upon the driver’s licenses that my company (or occasionally some inferior company) produced.

But as age assurance applications moved into other areas such as social media use, a problem arose: 13-year-olds usually don’t have government IDs. A few of them may have passports or other government IDs, but none of them have driver’s licenses.

But does age estimation work? I’m not sure if ANYONE has posted a non-biased view, so I’ll try to do so myself.

How precise is age estimation? We’ll find out soon, once NIST releases the results of its Face Analysis Technology Evaluation (FATE) Age Estimation & Verification test. The release of results is expected in early May.

Fingerprints, palm prints, faces, irises, and everything up to gait. (And behavioral biometrics.) There are a lot of biometric modalities out there, and one that has been around for years is the voice biometric.

I’ve discussed this topic before, and the partial title of the post (“We’ll Survive Voice Spoofing”) gives away how I feel about the matter, but I’ll present both sides of the issue.

No one can deny that voice spoofing exists and is effective, but many of the examples cited by the popular press are cases in which a HUMAN (rather than an ALGORITHM) was fooled by a deepfake voice. But voice recognition software can also be fooled.

(Incidentally, there is a difference between voice recognition and speech recognition. Voice recognition attempts to determine who a person is. Speech recognition attempts to determine what a person says.)

Take a study from the University of Waterloo, summarized here, that proclaims: “Computer scientists at the University of Waterloo have discovered a method of attack that can successfully bypass voice authentication security systems with up to a 99% success rate after only six tries.”

If you re-read that sentence, you will notice that it includes the words “up to.” Those words are significant if you actually read the article.

In a recent test against Amazon Connect’s voice authentication system, they achieved a 10 per cent success rate in one four-second attack, with this rate rising to over 40 per cent in less than thirty seconds. With some of the less sophisticated voice authentication systems they targeted, they achieved a 99 per cent success rate after six attempts.

Similar to Gender Shades, the University of Waterloo study does not appear to have tested hundreds of voice recognition algorithms. But there are other studies.

You’ll note that the top performers don’t have error rates anywhere near the University of Waterloo’s 99 percent.

So some firms will argue that voice recognition can be spoofed and thus cannot be trusted, while other firms will argue that the best voice recognition algorithms are rarely fooled.

Obviously, different firms are going to respond to the three questions above in different ways.

There is no universal truth here, and the message your firm conveys depends upon your firm’s unique characteristics.

And those characteristics can change.

Bear this in mind as you create your blog, white paper, case study, or other identity/biometric content, or have someone like the biometric content marketing expert Bredemarket work with you to create your content. There are people who sincerely hold the opposite belief of your firm…but your firm needs to argue that those people are, um, misinformed.

And as a postscript I’ll provide two videos that feature voices. The first is for those who detected my reference to the ABBA song “Waterloo.”

The second features the late Steve Bridges as President George W. Bush at the White House Correspondents Dinner.

(Part of the biometric product marketing expert series)

There are many different types of perfection.

This post concentrates on IDENTIFICATION perfection, or the ability to enjoy zero errors when identifying individuals.

The risk of claiming identification perfection (or any perfection) is that a SINGLE counter-example disproves the claim.

In fact, I go so far as to avoid using the phrase “no two fingerprints are alike.” Many years ago (before 2009) in an International Association for Identification meeting, I heard someone justify the claim by saying, “We haven’t found a counter-example yet.” That doesn’t mean that we’ll NEVER find one.

You’ve probably heard me tell the story before about how I misspelled the word “quality.”

In a process improvement document.

While employed by Motorola (pre-split).

At first glance, it appears that Motorola would be the last place to make a boneheaded mistake like that. After all, Motorola is known for its focus on quality.

But in actuality, Motorola was the perfect place to make such a mistake, since it was one of the champions of the “Six Sigma” philosophy (which targets a maximum of 3.4 defects per million opportunities). Motorola realized that manufacturing perfection is impossible, so manufacturers (and the people in Motorola’s weird Biometric Business Unit) should instead concentrate on reducing the error rate as much as possible.

So one misspelling could be tolerated, but I shudder to think what would have happened if I had misspelled “quality” a second time.
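To put the Six Sigma target in perspective, here is a minimal Python sketch of the usual defects-per-million-opportunities (DPMO) arithmetic. The function name and the 5,000-word document are my own hypothetical illustrations, and treating each word as one "opportunity" for a spelling defect is an assumption, not anything Motorola specified.

```python
def defects_per_million(defects: int, units: int, opportunities_per_unit: int) -> float:
    """Return defects per million opportunities (DPMO)."""
    total_opportunities = units * opportunities_per_unit
    if total_opportunities <= 0:
        raise ValueError("there must be at least one opportunity for a defect")
    # Multiply before dividing so small whole-number cases stay exact.
    return defects * 1_000_000 / total_opportunities


# Hypothetical: one misspelled word in a single 5,000-word document,
# counting every word as one opportunity for a defect.
one_typo = defects_per_million(defects=1, units=1, opportunities_per_unit=5_000)
print(one_typo)  # 200.0

# The Six Sigma target quoted above is 3.4 DPMO.
SIX_SIGMA_TARGET = 3.4
print(one_typo <= SIX_SIGMA_TARGET)  # False
```

Under those assumptions, a single typo in a 5,000-word document works out to 200 DPMO, so staying within 3.4 DPMO would allow roughly one misspelling per 294,000 words.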

As identity/biometric professionals well know, there are five authentication factors that you can use to gain access to a person’s account. (You can also use these factors for identity verification to establish the person’s account in the first place.)

I described one of these factors, “something you are,” in a 2021 post on the five authentication factors.

Something You Are. I’ve spent…a long time with this factor, since this is the factor that includes biometrics modalities (finger, face, iris, DNA, voice, vein, etc.). It also includes behavioral biometrics, provided that they are truly behavioral and relatively static.

From https://bredemarket.com/2021/03/02/the-five-authentication-factors/

As I mentioned in August, there are a number of biometric modalities, including face, fingerprint, iris, hand geometry, palm print, signature, voice, gait, and many more.

If your firm offers an identity solution that partially depends upon “something you are,” then you need to create content (blog, case study, social media, white paper, etc.) that converts prospects for your identity/biometric product/service and drives content results.

Bredemarket can help.

In case you missed it…

But are computerized systems any better, and can they detect spoofed voices?

Well, in the same way that fingerprint readers worked to overcome gummy bears, voice readers are working to overcome deepfake voices.

This is only the beginning of the war against voice spoofing. Other companies will pioneer new advances that will tell the real voices from the fake ones.

As for independent testing:

For the rest of the story, see “We Survived Gummy Fingers. We’re Surviving Facial Recognition Inaccuracy. We’ll Survive Voice Spoofing.”

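Yes, I’m stealing the Biometric Update practice of combining multiple items into a single post, but this lets me take a brief break from identity (mostly) and examine three general technology stories:

- A speech neuroprosthesis that helps an ALS patient communicate.

- Adobe Experience Manager (AEM) Dynamic Media for omnichannel marketing content.

- Graph databases and their use in identity and access management.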

First, let’s define “neuroprosthetics/neuroprosthesis”:

Neuroprosthetics “is a discipline related to neuroscience and biomedical engineering concerned with developing neural prostheses, artificial devices to replace or improve the function of an impaired nervous system.

From: Neuromodulation (Second Edition), 2018

Various news sources highlighted the story of amyotrophic lateral sclerosis (ALS) patient Pat Bennett and her somewhat-enhanced ability to formulate words, resulting from research at Stanford University.

Because I was curious, I sought the Nature article that discussed the research in detail, “A high-performance speech neuroprosthesis.” The article describes a proof of concept of a speech brain-computer interface (BCI).

Here we demonstrate a speech-to-text BCI that records spiking activity from intracortical microelectrode arrays. Enabled by these high-resolution recordings, our study participant—who can no longer speak intelligibly owing to amyotrophic lateral sclerosis—achieved a 9.1% word error rate on a 50-word vocabulary (2.7 times fewer errors than the previous state-of-the-art speech BCI2) and a 23.8% word error rate on a 125,000-word vocabulary (the first successful demonstration, to our knowledge, of large-vocabulary decoding). Our participant’s attempted speech was decoded at 62 words per minute, which is 3.4 times as fast as the previous record8 and begins to approach the speed of natural conversation (160 words per minute9).

From https://www.nature.com/articles/s41586-023-06377-x

While a 125,000 word vocabulary is impressive (most adult native English speakers have a vocabulary of 20,000-35,000 words), a 76.2% accuracy rate is so-so.
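For readers wondering where a number like 23.8% comes from: word error rate is conventionally the minimum number of word substitutions, deletions, and insertions needed to turn the decoded text into the reference text, divided by the number of reference words. Here is a minimal Python sketch of that conventional calculation. It is not the Stanford team's code, and the function name and sample sentences are my own hypothetical illustrations.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one word dropped and one word added.
print(word_error_rate("i would like some water", "i would like water please"))  # 0.4
```

The 76.2% figure above is simply 100% minus the reported 23.8% word error rate. Treat "accuracy" as rough shorthand, though: because insertions count as errors, a word error rate can exceed 100%.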

Stanford Medicine published a more lay-oriented article and a video that described Bennett’s condition, and the results of the study.

For Bennett, the (ALS) deterioration began not in her spinal cord, as is typical, but in her brain stem. She can still move around, dress herself and use her fingers to type, albeit with increasing difficulty. But she can no longer use the muscles of her lips, tongue, larynx and jaws to enunciate clearly the phonemes — or units of sound, such as sh — that are the building blocks of speech….

After four months, Bennett’s attempted utterances were being converted into words on a computer screen at 62 words per minute — more than three times as fast as the previous record for BCI-assisted communication.

From https://med.stanford.edu/news/all-news/2023/08/brain-implant-speech-als.html

Now let’s shift to companies that need to produce marketing collateral. Bredemarket produces collateral, but not to the scale that big companies need to produce. A single company may have to produce millions of pieces of collateral, each of which is specific to a particular product, in a particular region, for a particular audience/persona. Even Bredemarket could potentially produce all sorts of content, if it weren’t so difficult to do so:

All of this specialized content, using all of these different image and video formats? I’m not gonna create all that.

But as KBWEB Consult (a boutique technology consulting firm specializing in the implementation and delivery of Adobe Enterprise Cloud technologies) points out in its article “Implementing Rapid Omnichannel Messaging with AEM Dynamic Media,” Adobe Experience Manager has tools to speed up this process and create correctly-messaged content in ALL the formats for ALL the audiences.

One of those tools is Dynamic Media.

AEM Dynamic Media accelerates omnichannel personalization, ensuring your business messages are presented quickly and in the proper formats. Starting with a master file, Dynamic Media quickly adjusts images and videos to satisfy varying asset specifications, contributing to increased content velocity.

From https://kbwebconsult.com/implementing-rapid-omnichannel-messaging-with-aem-dynamic-media/

For those who aren’t immersed in marketing talk:

The article also discusses further implementation issues that are of interest to AEM users. If you are such a user, check the article out.

I previously said that I was MOSTLY taking a break from identity, but graph databases impact items well beyond identity.

A graph database, also referred to as a semantic database, is a software application designed to store, query and modify network graphs. A network graph is a visual construct that consists of nodes and edges. Each node represents an entity (such as a person) and each edge represents a connection or relationship between two nodes.

Graph databases have been around in some variation for a long time. For example, a family tree is a very simple graph database….

Graph databases are well-suited for analyzing interconnections…

From https://www.techtarget.com/whatis/definition/graph-database

The claim is that the interconnection analysis capabilities of graph databases are much more flexible and comprehensive than the capabilities of traditional relational databases. While graph databases are not always better than relational databases, they are better for certain types of data.
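To make the nodes-and-edges idea concrete, here is a minimal Python sketch built on the family tree example from the TechTarget definition. It is a plain in-memory structure, not a real graph database product, and the names, edges, and helper functions are all hypothetical.

```python
from collections import deque

# Hypothetical family tree: each edge points from a parent to a child.
EDGES = [
    ("Ada", "Ben"),
    ("Ada", "Cal"),
    ("Ben", "Dee"),
    ("Cal", "Eli"),
    ("Dee", "Fay"),
]


def build_adjacency(edge_list):
    """Map each node (person) to the nodes its outgoing edges point to."""
    adjacency = {}
    for parent, child in edge_list:
        adjacency.setdefault(parent, []).append(child)
        adjacency.setdefault(child, [])
    return adjacency


def descendants(adjacency, start):
    """Follow edges breadth-first and return every node reachable from start."""
    seen = {start}
    queue = deque([start])
    found = []
    while queue:
        node = queue.popleft()
        for neighbor in adjacency.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                found.append(neighbor)
                queue.append(neighbor)
    return found


family = build_adjacency(EDGES)
print(descendants(family, "Ada"))  # ['Ben', 'Cal', 'Dee', 'Eli', 'Fay']
```

The "who is connected to whom, at any depth" question is a single traversal here. In a relational database the same question typically needs a chain of self-joins or a recursive query, which is the kind of interconnection analysis the quote has in mind.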

To see how this applies to identity and access management (IAM), I’ll turn to IndyKite, whose Lasse Andersen recently presented on graph database use in IAM (in a webinar sponsored by Strativ Group). IndyKite describes its solution as follows (in part):

A knowledge graph that holistically captures the identities of customers and IoT devices along with the rich relationships between them

A dynamic and real-time data model that unifies disconnected identity data and business metadata into one contextualized layer

From https://www.indykite.com/identity-knowledge-graph

So what?

For example, how does such a solution benefit banking and financial services providers who wish to support financial identity?

Identity-first security to enable trusted, seamless customer experiences

From https://www.indykite.com/banking

Yes, I know that every identity company (with one exception) uses the word “trust,” and they all use the word “seamless.”

But this particular technology benefits banking customers (at least the honest ones) by using the available interconnections to provide all the essential information about the customer and the customer’s devices, in a way that does not inconvenience the customer. IndyKite claims “greater privacy and security,” along with flexibility for future expansion.

In other words, it increases velocity.

I hope you enjoyed this quick overview of these three technology advances.

But do you have a technology story that YOU want to tell?

Perhaps Bredemarket, the technology content marketing expert, can help you select the words to tell your story. If you’re interested in talking, let me know.

(Part of the biometric product marketing expert series)

On the surface, it sounds scary. Tricking automated speaker identification systems with PVC pipe?

(D)igital security engineers at the University of Wisconsin–Madison have found these systems are not quite as foolproof when it comes to a novel analog attack. They found that speaking through customized PVC pipes — the type found at most hardware stores — can trick machine learning algorithms that support automatic speaker identification systems.

From https://news.wisc.edu/down-the-tubes-common-pvc-pipes-can-hack-voice-identification-systems/

So how does the trick work?

The project began when the team began probing automatic speaker identification systems for weaknesses. When they spoke clearly, the models behaved as advertised. But when they spoke through their hands or talked into a box instead of speaking clearly, the models did not behave as expected.

(Shimaa) Ahmed investigated whether it was possible to alter the resonance, or specific frequency vibrations, of a voice to defeat the security system. Because her work began while she was stuck at home due to COVID-19, Ahmed began by speaking through paper towel tubes to test the idea. Later, after returning to the lab, the group hired Yash Wani, then an undergraduate and now a PhD student, to help modify PVC pipes at the UW Makerspace. Using various diameters of pipe purchased at a local hardware store, Ahmed, Wani and their team altered the length and diameter of the pipes until they could produce the same resonance as the voice they were attempting to imitate.

Eventually, the team developed an algorithm that can calculate the PVC pipe dimensions needed to transform the resonance of almost any voice to imitate another. In fact, the researchers successfully fooled the security systems with the PVC tube attack 60 percent of the time in a test set of 91 voices, while unaltered human impersonators were able to fool the systems only 6 percent of the time.

From https://news.wisc.edu/down-the-tubes-common-pvc-pipes-can-hack-voice-identification-systems/

Impressive results. But…

We’ve run across these biometric spoof claims before, specifically in the first test that asserted that face categorization algorithms were racist and sexist. (Face categorization, not face recognition. That’s another story.) If you didn’t view the Gender Shades website, you’d immediately assume that the hundreds of existing face categorization algorithms had just been proven to be racist and sexist. But if you read the Gender Shades study, you’ll see that it only tested three algorithms (IBM, Microsoft, and Face++). Similarly, the Master Faces study only looked at three algorithms (Dlib, FaceNet, and SphereFace).

So let’s ask the question: which voice algorithms did UW-Madison test?

Here’s what the study (PDF) says.

We evaluate two state-of-the-art ASI models: (1) the x-vector network [51] implemented by Shamsabadi et al. [45], and (2) the emphasized channel attention, propagation and aggregation time delay neural network (ECAPA-TDNN) [17], implemented by SpeechBrain. Both models were trained on VoxCeleb dataset [15, 36, 37], a benchmark dataset for ASI. The x-vector network is trained on 250 speakers using 8 kHz sampling rate. ECAPA-TDNN is trained on 7205 speakers using 16 kHz sampling rate. Both models report a test accuracy within 98-99%.

From https://www.usenix.org/system/files/sec23fall-prepub-452-ahmed.pdf

So what we know is that this test, which used these two ASI models trained on a particular dataset, demonstrated an ability to fool systems 60 percent of the time.

But…

In other words (and I’m adapting my own text here), how do the results of this study affect “current automatic speaker identification products”?

The answer is “We don’t know.”

So pipe down…until we actually test commercial algorithms for this technique.

But I’m sure that the UW-Madison researchers and I agree on one thing: more research is needed.