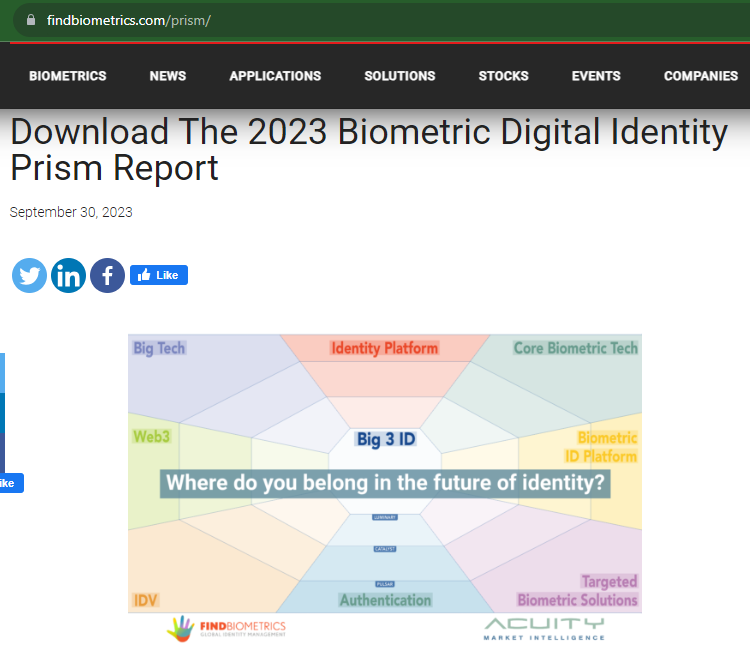

On September 30, FindBiometrics and Acuity Market Intelligence released the production version of the Biometric Digital Identity Prism Report. You can request to download it here.

Central to the concept of the Biometric Digital Identity Prism is the idea of the “Big 3 ID,” which the authors define as follows:

These firms have a global presence, a proven track record, and moderate-to-advanced activity in every other prism beam.

From “The Biometric Digital Identity Prism Report, September 2023.”

The Big 3 are IDEMIA, NEC, and Thales.

But FindBiometrics and Acuity Market Intelligence didn’t invent the Big 3. The concept has been around for 40 years. And two of today’s Big 3 weren’t in the Big 3 when things started. Oh, and there weren’t always 3; sometimes there were 4, and some could argue that there were 5.

So how did we get from the Big 3 of 40 years ago to the Big 3 of today?

The Big 3 in the 1980s

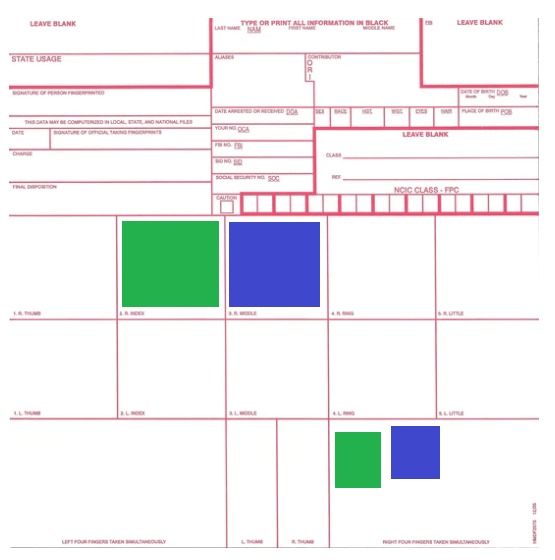

Back in 1986 (eight years before I learned how to spell AFIS) the American National Standards Institute, in conjunction with the National Bureau of Standards, issued ANSI/NBS-ICST 1-1986, a data format for information interchange of fingerprints. The PDF of this long-superseded standard is available here.

When creating this standard, ANSI and the NBS worked with a number of law enforcement agencies, as well as companies in the nascent fingerprint industry. There is a whole list of companies cited at the beginning of the standard, but I’d like to name four of them.

- De La Rue Printrak, Inc.

- Identix, Inc.

- Morpho Systems

- NEC Information Systems, Inc.

While all four of these companies produced computerized fingerprinting equipment, three of them had successfully produced automated fingerprint identification systems, or AFIS. As Chapter 6 of the Fingerprint Sourcebook subsequently noted:

- De La Rue Printrak (formerly part of Rockwell, which was formerly Autonetics) had deployed AFIS equipment for the U.S. Federal Bureau of Investigation and for the cities of Minneapolis and St. Paul as well as other cities. Dorothy Bullard (more about her later) has written about Printrak’s history, as has Reference for Business.

- Morpho Systems resulted from French AFIS efforts, separate from those of the FBI. These efforts launched Morpho’s long-standing relationship with the French National Police, as well as a similar relationship (now former relationship) with Pierce County, Washington.

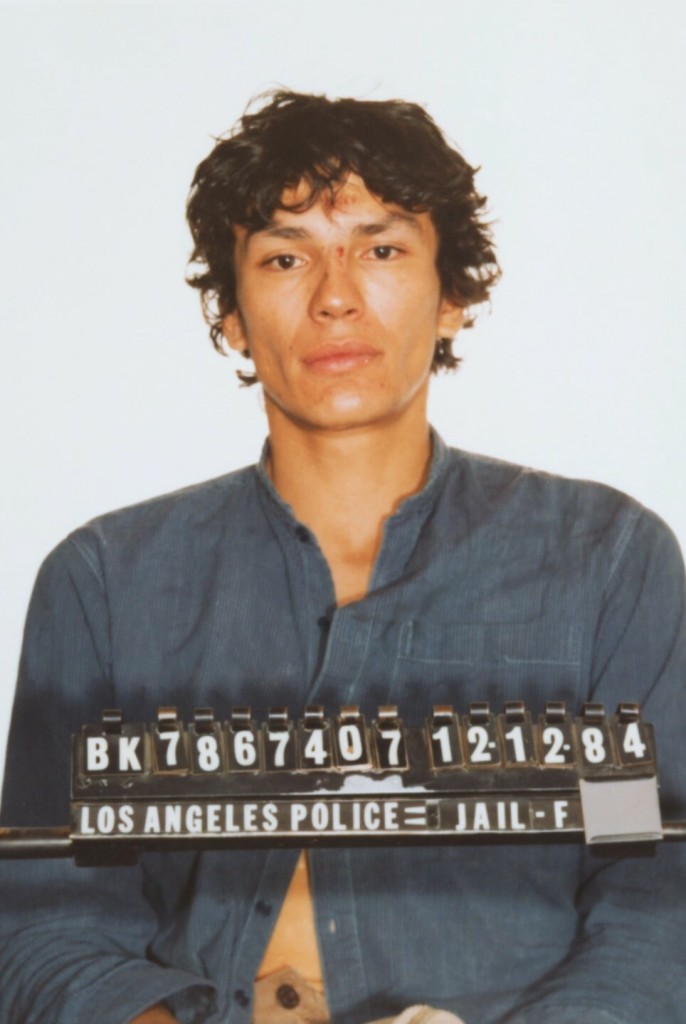

- NEC had deployed AFIS equipment for the National Police Academy of Japan, and (after some prodding; read Chapter 6 for the story) the city of San Francisco. Eventually the state of California obtained an NEC system, which played a part in the identification of “Night Stalker” Richard Ramirez.

After the success of the San Francisco and California AFIS systems, many other jurisdictions began clamoring for AFIS of their own, and turned to these three vendors to supply them.

The Big 4 in the 1990s

But in 1990, these three firms were joined by a fourth upstart, Cogent Systems of South Pasadena, California.

While customers initially preferred the Big 3 to the upstart, Cogent Systems eventually installed a statewide system in Ohio and a border control system for the U.S. government, plus a vast number of local systems at the county and city level.

Between 1991 and 1994, the (Immigration and Naturalization Service) conducted several studies of automated fingerprint systems, primarily in the San Diego, California, Border Patrol Sector. These studies demonstrated to the INS the feasibility of using a biometric fingerprint identification system to identify apprehended aliens on a large scale. In September 1994, Congress provided almost $30 million for the INS to deploy its fingerprint identification system. In October 1994, the INS began using the system, called IDENT, first in the San Diego Border Patrol Sector and then throughout the rest of the Southwest Border.

From https://oig.justice.gov/reports/plus/e0203/back.htm

I was a proposal writer for Printrak (divested by De La Rue) in the 1990s, and competed against Cogent, Morpho, and NEC in AFIS procurements. By the time I moved from proposals to product management, the next redefinition of the “big” vendors occurred.

The Big 3 in 2003

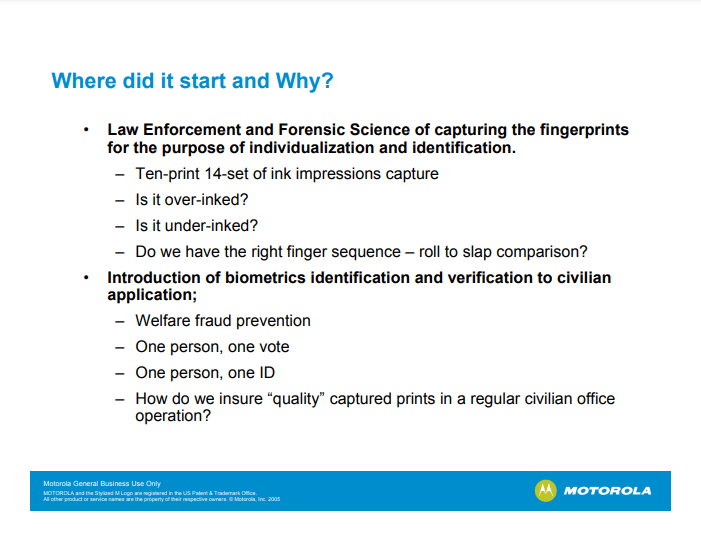

There are a lot of name changes that affected AFIS participants, one of which was the 1988 name change of the National Bureau of Standards to the National Institute of Standards and Technology (NIST). As fingerprints and other biometric modalities were increasingly employed by government agencies, NIST began conducting tests of biometric systems. These tests continue to this day, as I have previously noted.

One of NIST’s first tests was the Fingerprint Vendor Technology Evaluation of 2003 (FpVTE 2003).

For those who are familiar with NIST testing, it’s no surprise that the test was thorough:

FpVTE 2003 consists of multiple tests performed with combinations of fingers (e.g., single fingers, two index fingers, four to ten fingers) and different types and qualities of operational fingerprints (e.g., flat livescan images from visa applicants, multi-finger slap livescan images from present-day booking or background check systems, or rolled and flat inked fingerprints from legacy criminal databases).

From https://www.nist.gov/itl/iad/image-group/fingerprint-vendor-technology-evaluation-fpvte-2003

Eighteen vendors submitted their fingerprint algorithms to NIST for one or more of the various tests, including Bioscrypt, Cogent Systems, Identix, SAGEM MORPHO (SAGEM had acquired Morpho Systems), NEC, and Motorola (which had acquired Printrak). And at the conclusion of the testing, the FpVTE 2003 summary (PDF) made this statement:

Of the systems tested, NEC, SAGEM, and Cogent produced the most accurate results.

Which would have been great news if I were a product manager at NEC, SAGEM, or Cogent.

Unfortunately, I was a product manager at Motorola.

The effect of this report was…not good, and at least partially (but not fully) contributed to Motorola’s loss of its long-standing client, the Royal Canadian Mounted Police, to Cogent.

The Big 3, 4, or 5 after 2003

So what happened in the years after FpVTE was released? Opinions vary, but here are three possible explanations for what happened next.

Did the Big 3 become the Big 4 again?

Now I probably have a bit of bias in this area since I was a Motorola employee, but I maintain that Motorola overcame this temporary setback and vaulted back into the Big 4 within a couple of years. Among other things, Motorola deployed a national 1000 pixels-per-inch (PPI) system in Sweden several years before the FBI did.

Did the Big 3 remain the Big 3?

Motorola’s arch-enemies at Sagem Morpho had a different opinion, which was revealed when the state of West Virginia finally got around to deploying its own AFIS. A bit ironic, since the national FBI AFIS system IAFIS was located in West Virginia, or perhaps not.

Anyway, Motorola had a very effective sales staff, as was apparent when the state issued its Request for Proposal (RFP) and explicitly said that the state wanted a Motorola AFIS.

That didn’t stop Cogent, Identix, NEC, and Sagem Morpho from bidding on the project.

After the award, Dorothy Bullard and I requested copies of all of the proposals for evaluation. While Motorola (to no one’s surprise) won the competition, Dorothy and I believed that we shouldn’t have won. In particular, our arch-enemies at Sagem Morpho raised a compelling argument that it should be the chosen vendor.

Their argument? Here’s my summary: “Your RFP says that you want a Motorola AFIS. The states of Kansas (see page 6 of this PDF) and New Mexico (see this PDF) USED to have a Motorola AFIS…but replaced their systems with our MetaMorpho AFIS because it’s BETTER than the Motorola AFIS.”

But were Cogent, Motorola, NEC, and Sagem Morpho the only “big” players?

Did the Big 3 become the Big 5?

While the Big 3/Big 4 took a lot of the headlines, there were a number of other companies vying for attention. (I’ve talked about this before, but it’s worthwhile to review it again.)

- Identix, while making some efforts in the AFIS market, concentrated on creating live scan fingerprinting machines, where it competed (sometimes in court) against companies such as Digital Biometrics and Bioscrypt.

- The fingerprint companies started to compete against facial recognition companies, including Viisage and Visionics.

- Oh, and there were also iris companies such as Iridian.

- And there were other ways to identify people. Even before 9/11 mandated REAL ID (which we may get any year now), Polaroid was making great efforts to improve driver’s licenses to serve as a reliable form of identification.

In short, there were a bunch of small identity companies all over the place.

But in the course of a few short years, Dr. Joseph Atick (initially) and Robert LaPenta (subsequently) concentrated on acquiring and merging those companies into a single firm, L-1 Identity Solutions.

These multiple mergers resulted in former competitors Identix and Digital Biometrics, and former competitors Viisage and Visionics, becoming part of one big happy family. (A multinational big happy family when you count Bioscrypt.) Eventually this company offered fingerprint, face, iris, driver’s license, and passport solutions, something that none of the Big 3/Big 4 could claim (although Sagem Morpho had a facial recognition offering). And L-1 had federal contracts and state contracts that could match anything that the Big 3/Big 4 offered.

So while L-1 didn’t have a state AFIS contract like Cogent, Motorola, NEC, and Sagem Morpho did, you could argue that L-1 was important enough to be ranked with the big boys.

So for the sake of argument let’s assume that there was a Big 5, and L-1 Identity Solutions was part of it, along with the three big boys Motorola, NEC, and Safran (which was formed by the merger of Sagem and Snecma and thus now owned Sagem Morpho), and the independent Cogent Systems. These five companies competed fiercely with each other (see West Virginia, above).

In a two-year period, everything would change.

The Big 3 after 2009

Hang on to your seats.

- By 2009, Safran (resulting from the merger of Sagem and Snecma) was an international powerhouse in aerospace and defense and also had identity/biometric interests. Motorola, in the meantime, was no longer enjoying the success of its RAZR phone and was looking at trimming down (prior to its eventual, um, bifurcation). In response to these dynamics, Safran announced its intent to purchase Motorola’s Biometric Business Unit in October 2008, an effort that was finalized in April 2009. The Biometric Business Unit (adopting its former name Printrak) was acquired by Sagem Morpho and became MorphoTrak. On a personal level, Dorothy Bullard moved out of Proposals and I moved into Proposals, where I got to work with my new best friends who had previously slammed Motorola for losing the Kansas and New Mexico deals. (Seriously, Cindy and Ron are great folks.)

- By 2010, the fiercely independent Cogent was independent no more, having been acquired by 3M. (NEC thought about buying Cogent but didn’t.)

- By 2011, Safran decided that it needed additional identity capabilities, so it acquired L-1 Identity Solutions and renamed the acquisition as MorphoTrust.

If you’re keeping notes, the Big 5 have now become the Big 3: 3M, Safran, and NEC (the one constant in all of this).

While there were subsequent changes (3M sold Cogent and other pieces to Gemalto, Safran sold all of Morpho to Advent International/Oberthur to form IDEMIA, and Gemalto was acquired by Thales), the Big 3 has remained constant over the last decade.

And that’s where we are today…pending future developments.

- If Alphabet or Amazon reverses its current reluctance to market its biometric offerings to governments, the entire landscape could change again.

- Or perhaps a new AI-fueled competitor could emerge.

The 1 Biometric Content Marketing Expert

This was written by John Bredehoft of Bredemarket.

If you work for the Big 3 or the Little 80+ and need marketing and writing services, the biometric content marketing expert can help you. There are several ways to get in touch:

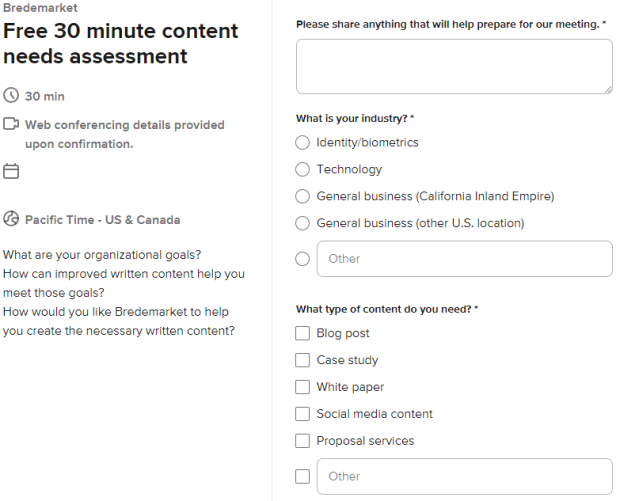

- Book a meeting with me at calendly.com/bredemarket. Be sure to fill out the information form so I can best help you.

- Email me at john.bredehoft@bredemarket.com.

- Contact me at bredemarket.com/contact/.

- Subscribe to my mailing list at http://eepurl.com/hdHIaT.