You’ve probably gathered that I don’t just post here on the Bredemarket blog.

These are some recent musical shorts—some with Canva-provided music, others with Google Lyria-generated music—that I have posted to YouTube since April.

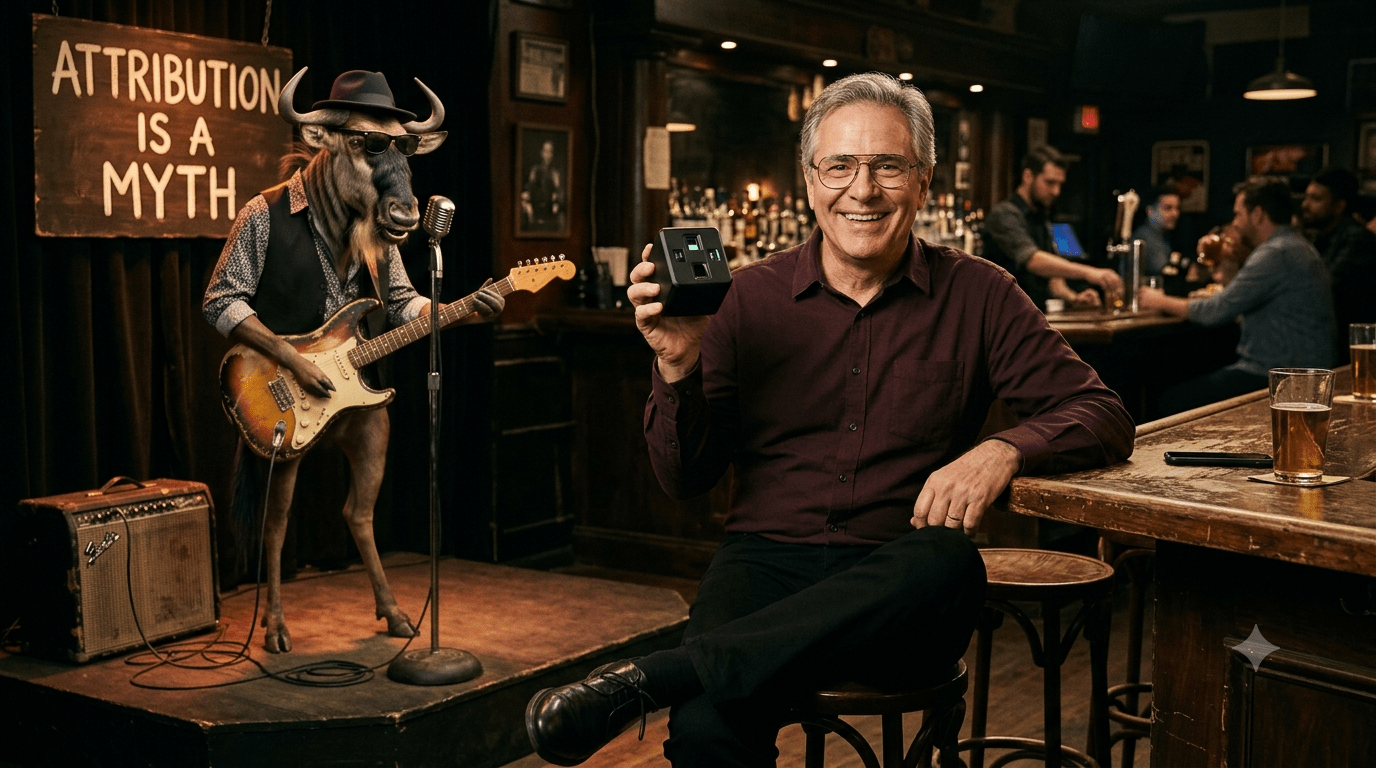

I created this (again with Canva, Google Gemini, and Google Lyria) for self-promotion.

“Lost Recognition” illustrates that facial recognition isn’t always available.

Technologically, this video was assembled in Canva using images from Google Gemini and audio (without the video) from Google Lyria.

But who cares?

I don’t create videos for Bredemarket clients, but I do provide words that address prospect needs…such as the requirement to let a person access a building on a dark night.

Talk to me about the words I can create for you.

And if you’re interested in using the “Lost Recognition” audio, here it is. Because it’s AI-generated, I can’t copyright it.

Here’s my new Google Lyria tire intelligence (TI) song, based upon my earlier post “How to Educate Yourself About TI: The Nexus.”

I was unable to create a three-minute version, so I reverted to the 30-second length.

Compare to the TI song that I shared earlier.

A Google Lyria song about privacy, even though modern privacy laws did not exist in 1930s Dust Bowl Oklahoma.

After encountering yet another Facebook post that seemed to go on forever, I asked Google Gemini the “why” question.

But note the last sentence of my prompt.

Why do people who use AI authored text for Facebook posts choose text that is twice as long as it needs to be? Answer succinctly.

People generally use overly long AI-generated text for Facebook posts for a few key reasons:

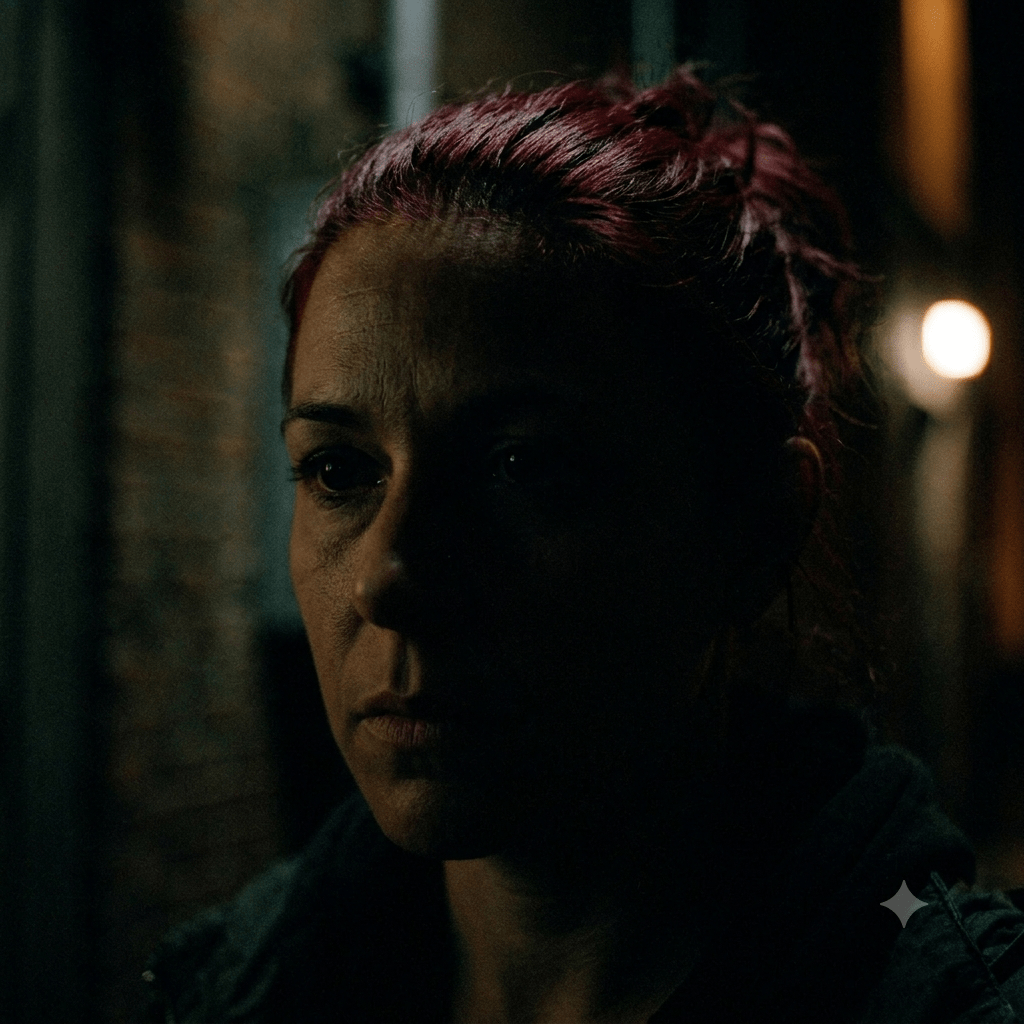

My latest Google Lyria song experiment surprised me.

I was playing back the song I had created when I noticed that it was longer than the standard 30 seconds. In fact, it was a full-length, three-minute song, something previously possible only with paid versions of Lyria.

So I adjusted my prompt to take advantage of the length.

It’s probably no surprise that my latest Lyria song doesn’t touch on a couple who is never ever ever getting back together. Instead, I focused on the ICAO Doc 9303 “neutral expression” requirements I discussed in passing in this October 2025 post.

“But in one of those oddities that fill the biometric world, you can have TOO MUCH expression. Part 3 of International Civil Aviation Organization (ICAO) Document 9303, which governs machine readable travel documents, mandates that faces on travel documents must maintain a neutral expression without smiling. At the time (2003) it was believed that the facial recognition algorithms would work best if the subject were expressionless. I don’t know if that holds true today.”

That should make for a catchy song, shouldn’t it? Judge for yourself in the song “Neutral Expression.”

Wonder if the woman liked it.

She did!

You’re not gonna hear this song about dry fingerprint ridges on Top 40 radio. But for a select few biometric product marketers, it highlights a critically important issue.

Why?

Because dry fingerprint ridges, while not a common worry among the general populace, ARE a concern among law enforcement, homeland security, financial institution, and other professionals who depend on high-quality friction ridge capture to solve crimes and identify people.

And these people desperately need products that accurately capture fingerprints in challenging conditions.

And the product vendors need to communicate their product benefits to potential customers.

That’s where Bredemarket comes to save the day.

Not with music.

(Thankfully.)

Through Bredemarket, I work with you to develop the customer-focused, benefits-oriented words that move your prospects toward your fingerprint capture solution.

If you want prospects to buy your identity product, schedule a free meeting with the biometric product marketing expert.

And…I couldn’t resist one more.

Another way to verify non-human identities is to link them to human ones. This NotebookLM explainer (not by me) offers the details.

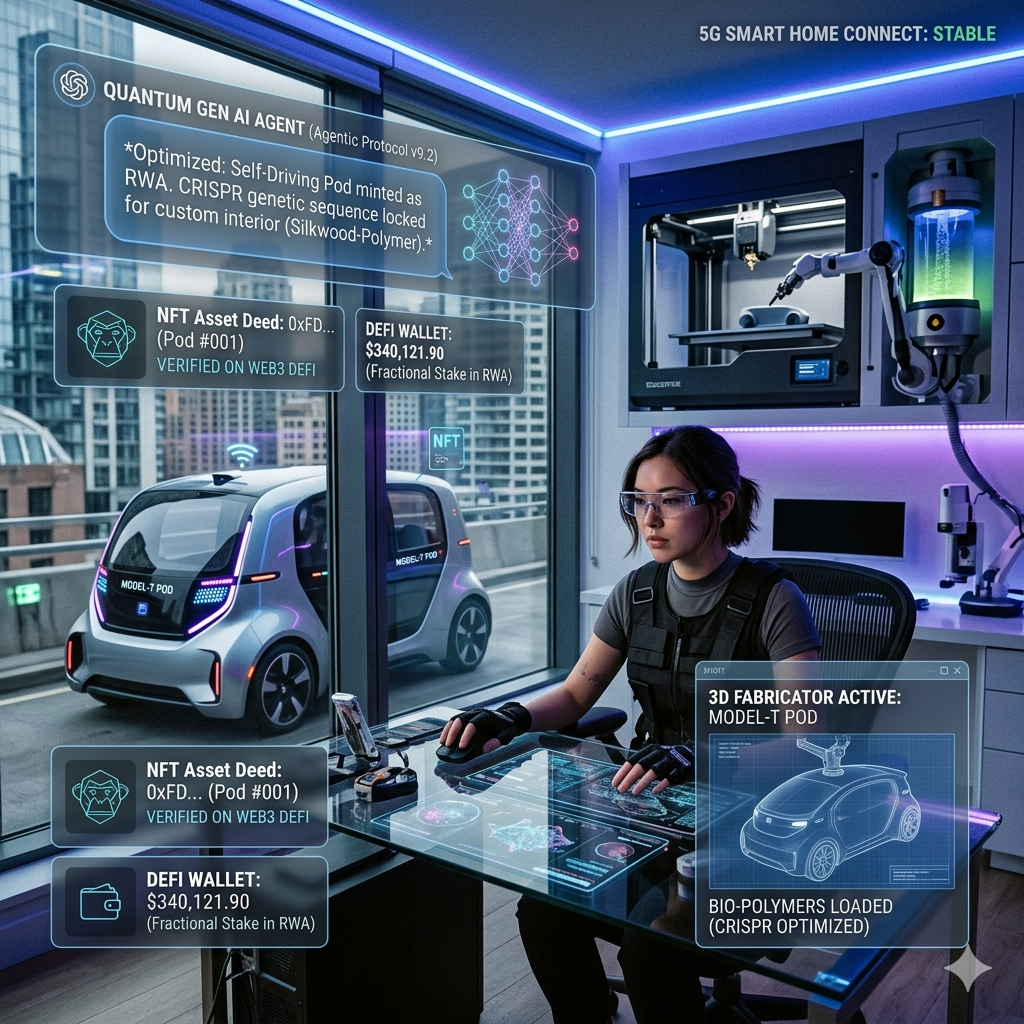

The picture above and text below were authored by Google Gemini.

Get ready to maximize your reality because our quantum-powered, generative AI agent is autonomously deploying a CRISPR-edited, synthetic biopolymer directly into your 5G-connected smart-home fabricator to 3D-print a hyper-personalized, self-driving robotaxi—instantly minting the entire experience as a fractionalized, Web3 DeFi asset with a secure NFT deed that grants your holographic avatar VIP entry into a fully decentralized, spatial-computing metaverse!