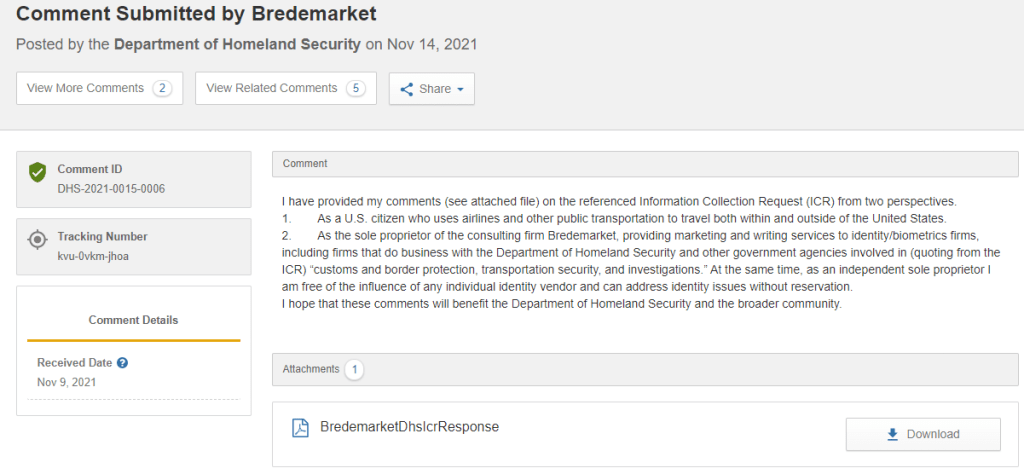

This post is adapted from Bredemarket’s November 10, 2021 submitted comments on DHS-2021-0015-0005, Information Collection Request, Public Perceptions of Emerging Technology.

The original DHS request included the following sentence in the introductory section:

“AI in general and facial recognition in particular are not without public controversy, including concerns about bias, security, and privacy.”

Even though this was outside of the topics specifically requiring a response, I had to respond anyway. Here’s (in part) what I said.

The topics of bias, security, and privacy deserve attention. Public misunderstandings on these topics could scuttle all of DHS’ efforts in customs and border protection, transportation security, and investigations.

Regarding bias, it is incumbent upon government agencies, biometric vendors, and other interested parties (including myself as a biometric consultant) to educate and inform the public about issues relating to bias. In the interests of brevity, I will confine myself to two critical points.

- There is a difference between identification of individuals and classification of groups of individuals.

    - Most of the public was introduced to the topic of biometric bias via the 2018 study Gender Shades (at the http://proceedings.mlr.press/v81/buolamwini18a/buolamwini18a.pdf URL). Unfortunately, the popular descriptions of this study confused several issues.

    - The summary at the top of the Gender Shades website http://gendershades.org/ clearly frames the question asked by the study: “How well do IBM, Microsoft, and Face++ AI services guess the gender of a face?” As the study title and its summary clearly state, the study only attempted to classify the genders of faces.

    - This is a different problem from the one addressed in customs and border protection, transportation security, and investigations applications: namely, the identification of an individual. If someone purporting to be me attempts to board a plane, DHS does not care whether I am male, female, gender fluid, or anything else related to gender. DHS only cares about my individual identity.

    - It is imperative that any discussion of bias as related to DHS purposes confine itself to the DHS use case of identification of individuals.

- Different algorithms exhibit different levels of bias (and different types of bias) when identifying individuals.

    - While Gender Shades did not directly address this issue, it is possible to measure differences in individual identification accuracy across genders, races, and ages.

    - The National Institute of Standards and Technology (NIST) has conducted ongoing studies of the accuracy and performance of face recognition algorithms. In one of these tests, the FRVT 1:1 Verification Test (at the https://pages.nist.gov/frvt/html/frvt11.html URL), each tested algorithm is examined for its performance among different genders, races (with nationality used as a proxy for race), and ages.

    - While neither IBM nor Microsoft (two of the three algorithm providers studied in Gender Shades) has submitted algorithms to the FRVT 1:1 Verification Test, over 360 1:1 algorithms have been tested by NIST.

    - In a 2019 report issued by NIST on demographic effects (at the https://nvlpubs.nist.gov/nistpubs/ir/2019/NIST.IR.8280.pdf URL), NIST concluded that the tested algorithms “show a wide range in accuracy across developers, with the most accurate algorithms producing many fewer errors.”

    - It is possible to look at the data for each individual algorithm to see detailed information on the algorithm’s performance. Click on each 1:1 algorithm to see its “report card,” including demographic results.

However, even NIST tests are just that – tests. Performance of a research algorithm on a NIST test with NIST data does not guarantee the same performance of an operational algorithm in a DHS system with DHS data.

As DHS implements biometric systems for its purposes of customs and border protection, transportation security, and investigations, DHS needs to internally measure not only the overall accuracy of these systems using DHS algorithms and data, but also their accuracy when these demographic factors are taken into account. While even highly accurate results may not be perceived as such by the public (the anecdotal tale of a single inaccurate result may outweigh stellar statistical accuracy in the public’s mind), such accuracy measurements are essential for DHS to ensure that it is fulfilling its mission.
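To make "accuracy when demographic factors are taken into account" concrete, here is a minimal sketch of how 1:1 verification error rates could be broken out by group. It is not how NIST or DHS actually computes anything: the `Comparison` record, the group labels, the scores, and the 0.6 threshold are all invented for illustration. It simply counts false matches (an impostor pair accepted) and false non-matches (a genuine pair rejected) separately for each group.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Comparison:
    """One 1:1 comparison outcome (hypothetical record layout)."""
    group: str         # demographic label attached to the comparison
    score: float       # similarity score; higher means more similar
    same_person: bool  # ground truth: do both images show the same person?


def error_rates_by_group(comparisons, threshold):
    """Return false match rate (FMR) and false non-match rate (FNMR) per group."""
    counts = defaultdict(
        lambda: {"false_match": 0, "impostor": 0, "false_non_match": 0, "genuine": 0}
    )
    for c in comparisons:
        bucket = counts[c.group]
        accepted = c.score >= threshold
        if c.same_person:
            bucket["genuine"] += 1
            if not accepted:
                bucket["false_non_match"] += 1
        else:
            bucket["impostor"] += 1
            if accepted:
                bucket["false_match"] += 1

    rates = {}
    for group, b in counts.items():
        rates[group] = {
            # None means the group had no comparisons of that kind.
            "fmr": b["false_match"] / b["impostor"] if b["impostor"] else None,
            "fnmr": b["false_non_match"] / b["genuine"] if b["genuine"] else None,
        }
    return rates


# Invented sample data: two groups, four comparisons each.
SAMPLE = [
    Comparison("group_a", 0.91, True),
    Comparison("group_a", 0.40, True),   # genuine pair rejected
    Comparison("group_a", 0.20, False),
    Comparison("group_a", 0.10, False),
    Comparison("group_b", 0.95, True),
    Comparison("group_b", 0.88, True),
    Comparison("group_b", 0.75, False),  # impostor pair accepted
    Comparison("group_b", 0.30, False),
]

if __name__ == "__main__":
    for group, r in sorted(error_rates_by_group(SAMPLE, threshold=0.6).items()):
        print(group, r)
```

The point of the sketch is that a single overall accuracy figure can hide the fact that one group's errors are mostly false non-matches while another's are mostly false matches, which is why the two rates are reported per group and not pooled.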

Regarding security and privacy, which are intertwined in many ways, there are legitimate questions regarding how the use of biometric technologies can detract from or enhance the security and privacy of individual information. (I will confine myself to technology issues, and will not comment on the societal questions regarding knowledge of an individual’s whereabouts.)

- Data, including facial recognition vectors or templates, is stored in systems that may themselves be compromised. This is the same issue that is faced by other types of data that may be compromised, including passwords. In this regard, the security of facial recognition data is no different than the security of other data.

- In some of the DHS use cases, it is not only necessary to store facial recognition vectors or templates, but it is also necessary to store the original facial images. These are not needed by the facial recognition algorithms themselves, but by the humans who review the results of facial algorithm comparisons. As long as we continue to place facial images on driver’s licenses, passports, visas, and other secure identity documents, the need to store these facial images will continue and cannot be avoided.

- However, one must ensure that the storage of any personally identifiable information (including Social Security Numbers and other non-biometric data) is secure, and that the PII is only available on a need-to-know basis.

- In some cases, the use of facial recognition technologies can actually enhance privacy. For example, take the moves by various U.S. states to replace their existing physical driver’s licenses with smartphone-based mobile driver’s licenses (mDLs). These mDL applications can be designed to only provide necessary information to those viewing the mDL.

    - When a purchaser uses a physical driver’s license to buy age-restricted items such as alcohol, the store clerk viewing the license is able to see a vast amount of PII, including the purchaser’s birthdate, full name, residence address, and even height and weight. A dishonest store clerk can easily misuse this data.

    - When a purchaser uses a mobile driver’s license to buy age-restricted items, most of this information is not exposed to the store clerk viewing the license. Even the purchaser’s birthdate is not exposed; all that the store clerk sees is whether or not the purchaser is old enough to buy the restricted item (for example, over the age of 21).

    - Therefore, use of these technologies can actually enhance privacy.
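The age check described above can be sketched in a few lines. This is only an illustration of the "disclose the answer, not the data" idea, not the actual mDL protocol: the `LicenseRecord` fields, the function names, and the shape of the response are assumptions of mine, and a real mDL would also involve cryptographic signing and holder consent that are omitted here.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LicenseRecord:
    """Data held on the purchaser's device (hypothetical field names)."""
    full_name: str
    birthdate: date
    address: str


def age_in_years(birthdate, today):
    """Whole years elapsed between birthdate and today."""
    years = today.year - birthdate.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birthdate.month, birthdate.day):
        years -= 1
    return years


def disclose_age_over(record, minimum_age, today):
    """Answer only the question the clerk needs answered.

    The response contains a single yes/no value; the name, address,
    and birthdate never leave the record.
    """
    is_old_enough = age_in_years(record.birthdate, today) >= minimum_age
    return {f"age_over_{minimum_age}": is_old_enough}


if __name__ == "__main__":
    # Invented example purchaser.
    purchaser = LicenseRecord("Pat Example", date(2000, 6, 15), "123 Example St")
    print(disclose_age_over(purchaser, 21, today=date(2021, 11, 10)))
```

Compare this with handing over the whole `LicenseRecord`, which is effectively what showing a physical card does.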

I’ll be repurposing other portions of my response as new blog posts over the next several days.
