(Part of the biometric product marketing expert series)

I’ll admit that I previously thought that age estimation was worthless, but I’ve since changed my mind about the necessity for it. Which is a good thing, because the U.S. National Institute of Standards and Technology (NIST) is about to add age estimation to its Face Recognition Vendor Test suite.

What is age estimation?

Before continuing, I should note that age estimation is not a way to identify people, but a way to classify people. For once, I’m stepping out of my preferred identity environment and looking at a classification question. Not “gender shades,” but “get off my lawn” (or my tricycle).

Age estimation uses facial features to estimate how old a person is, in the absence of any other information such as a birth certificate. According to a Yoti white paper that I’ll discuss in a minute, the Western world has two primary use cases for age estimation:

- First, to estimate whether a person is over or under the age of 18 years. In many Western countries, the age of 18 is a significant age that grants many privileges. In my own state of California, you have to be 18 years old to vote, join the military without parental consent, marry (and legally have sex), get a tattoo, play the lottery, enter into binding contracts, sue or be sued, or take on a number of other responsibilities. Therefore, there is a pressing interest to know whether the person at the U.S. Army Recruiting Center, a tattoo parlor, or the lottery window is entitled to use the service.

- Second, to estimate whether a person is over or under the age of 13 years. Although age 13 is not as great a milestone as age 18, this is usually the age at which social media companies allow people to open accounts. Thus social media companies, and other companies that cater to teens, have a pressing interest in knowing a teen’s age.

Why was I against age estimation?

Because I felt it was better to know an age, rather than estimate it.

My opinion was obviously influenced by my professional background. When IDEMIA was formed in 2017, I became part of a company that produced government-issued driver’s licenses for the majority of states in the United States. (OK, MorphoTrak was previously contracted to produce driver’s licenses for North Carolina, but…that didn’t last.)

With a driver’s license, you know the age of the person and don’t have to estimate anything.

And estimation is not an exact science. Here’s what Yoti’s March 2023 white paper says about age estimation accuracy:

Our True Positive Rate (TPR) for 13-17 year olds being correctly estimated as under 25 is 99.93% and there is no discernible bias across gender or skin tone. The TPRs for female and male 13-17 year olds are 99.90% and 99.94% respectively. The TPRs for skin tone 1, 2 and 3 are 99.93%, 99.89% and 99.92% respectively. This gives regulators globally a very high level of confidence that children will not be able to access adult content.

Our TPR for 6-11 year olds being correctly estimated as under 13 is 98.35%. The TPRs for female and male 6-11 year olds are 98.00% and 98.71% respectively. The TPRs for skin tone 1, 2 and 3 are 97.88%, 99.24% and 98.18% respectively so there is no material bias in this age group either.

Yoti’s facial age estimation is performed by a ‘neural network’, trained to be able to estimate human age by analysing a person’s face. Our technology is accurate for 6 to 12 year olds, with a mean absolute error (MAE) of 1.3 years, and of 1.4 years for 13 to 17 year olds. These are the two age ranges regulators focus upon to ensure that under 13s and 18s do not have access to age restricted goods and services.

From https://www.yoti.com/wp-content/uploads/Yoti-Age-Estimation-White-Paper-March-2023.pdf

While this is admirable, is it precise enough to comply with government regulations? A mean absolute error of over a year isn’t worth a hill of beans when the legal line is a single day. By the letter of the law, if you are 17 years and 364 days old and you try to vote, you are breaking the law.
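To make the Yoti numbers a little more concrete, here is a minimal Python sketch of how a True Positive Rate like "13-17 year olds being correctly estimated as under 25" could be computed. The function name, the sample ages, and the estimates are all invented for illustration; this is not Yoti's code or data. It also shows why the threshold sits well above 18: with estimates that can miss by a year or more, checking against 18 itself lets more minors slip through.

```python
def true_positive_rate(true_ages, estimated_ages, age_range, threshold):
    """Share of people whose true age is inside age_range (inclusive)
    and whose estimated age falls below threshold."""
    lo, hi = age_range
    in_range = [(t, e) for t, e in zip(true_ages, estimated_ages) if lo <= t <= hi]
    if not in_range:
        raise ValueError("no samples in the requested age range")
    flagged = sum(1 for _, e in in_range if e < threshold)
    return flagged / len(in_range)


# Invented illustrative data, NOT Yoti results.
true_ages = [13, 15, 17, 17, 16, 30]
estimated_ages = [14.2, 16.8, 18.9, 25.3, 15.1, 28.0]

# Teens checked against a buffered threshold of 25...
tpr_under_25 = true_positive_rate(true_ages, estimated_ages, (13, 17), 25)
# ...versus the same teens checked against the legal age itself.
tpr_under_18 = true_positive_rate(true_ages, estimated_ages, (13, 17), 18)

print(tpr_under_25, tpr_under_18)
```

In this toy sample the buffered threshold catches more of the teens than the bare threshold of 18 does, but neither one tells you whether a 17-year-and-364-day-old is legally an adult.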

Why did I change my mind?

Over the last couple of months I’ve thought about this a bit more and have experienced a Jim Bakker “I was wrong” moment.

I was wrong for two reasons.

Kids don’t have government IDs

I asked myself some questions.

- How many 13 year olds do you know that have driver’s licenses? Probably none.

- How many 13 year olds do you know that have government-issued REAL IDs? Probably very few.

- How many 13 year olds do you know that have passports? Maybe a few more (especially after 9/11), but not that many.

Even at age 18, there is no guarantee that a person will have a government-issued REAL ID.

So how are 18 year olds, or 13 year olds, supposed to prove that they are old enough for services? Carry their birth certificate around?

You’ll note that Yoti didn’t target a use case for 21 year olds. This is partially because Yoti is a UK firm and therefore may not focus on the strict U.S. laws regarding alcohol, tobacco, and casino gambling. But it’s also because it’s much, much more likely that a 21 year old will have a government-issued ID, eliminating the need for age estimation.

Sometimes.

In some parts of the world, no one has government IDs

Over the past several years, I’ve analyzed a variety of identity firms. Earlier this year I took a look at Worldcoin. While Worldcoin’s World ID emphasizes privacy so much that it does not conclusively prove a person’s identity (it only proves a person’s uniqueness), and makes no attempt to provide the age of the person with the World ID, Worldcoin does have something to say about government-issued IDs.

Online services often request proof of ID (usually a passport or driver’s license) to comply with Know your Customer (KYC) regulations. In theory, this could be used to deduplicate individuals globally, but it fails in practice for several reasons.

KYC services are simply not inclusive on a global scale; more than 50% of the global population does not have an ID that can be verified digitally.

From https://worldcoin.org/blog/engineering/humanness-in-the-age-of-ai

But wait. There’s more:

IDs are issued by states and national governments, with no global system for verification or accountability. Many verification services (i.e. KYC providers) rely on data from credit bureaus that is accumulated over time, hence stale, without the means to verify its authenticity with the issuing authority (i.e. governments), as there are often no APIs available. Fake IDs, as well as real data to create them, are easily available on the black market. Additionally, due to their centralized nature, corruption at the level of the issuing and verification organizations cannot be eliminated.

Same source as above.

Now this (in my opinion) doesn’t make the case for Worldcoin, but it certainly casts some doubt on a universal way to document ages.

So we’d better start measuring the accuracy of age estimation.

If only there were an independent organization that could measure age estimation, in the same way that NIST measures the accuracy of fingerprint, face, and iris identification.

You know where this is going.

How will NIST test age estimation?

Yes, NIST is in the process of incorporating an age estimation test in its battery of Face Recognition Vendor Tests.

NIST’s FRVT Age Estimation page explains why.

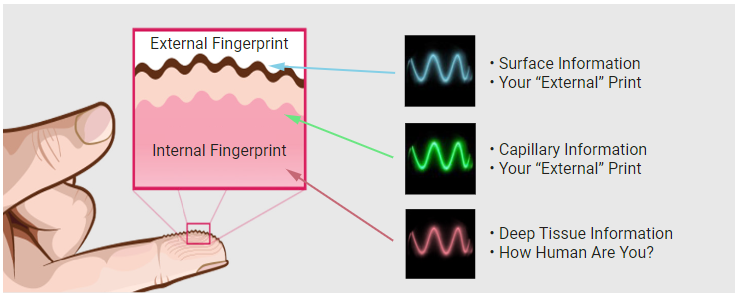

Facial age verification has recently been mandated in legislation in a number of jurisdictions. These laws are typically intended to protect minors from various harms by verifying that the individual is above a certain age. Less commonly some applications extend benefits to groups below a certain age. Further use-cases seek only to determine actual age. The mechanism for estimating age is usually not specified in legislation. Face analysis using software is one approach, and is attractive when a photograph is available or can be captured.

In 2014, NIST published a NISTIR 7995 on Performance of Automated Age Estimation. The report showed using a database with 6 million images, the most accurate age estimation algorithm have accurately estimated 67% of the age of a person in the images within five years of their actual age, with a mean absolute error (MAE) of 4.3 years. Since then, more research has dedicated to further improve the accuracy in facial age verification.

From https://pages.nist.gov/frvt/html/frvt_age_estimation.html

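Note that this was in 2014. As we have seen above, Yoti asserts a dramatically lower error in 2023: MAEs of 1.3 and 1.4 years for the two age ranges it highlights, versus the 4.3 years that NIST reported.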
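For readers who want to see what the two figures in the NIST passage measure, here is a small Python sketch of a mean absolute error and a "within five years" share. The function names and the four sample ages are my own invention for illustration; they are not NIST's test code or data.

```python
def mean_absolute_error(true_ages, estimated_ages):
    """Average size of the miss, in years, ignoring its direction."""
    if not true_ages:
        raise ValueError("need at least one sample")
    errors = [abs(t - e) for t, e in zip(true_ages, estimated_ages)]
    return sum(errors) / len(errors)


def share_within(true_ages, estimated_ages, years):
    """Fraction of estimates that land within `years` of the true age."""
    if not true_ages:
        raise ValueError("need at least one sample")
    hits = sum(1 for t, e in zip(true_ages, estimated_ages) if abs(t - e) <= years)
    return hits / len(true_ages)


# Invented illustrative data, NOT NIST results.
true_ages = [10, 20, 30, 40]
estimated_ages = [12, 26, 29, 33]

mae = mean_absolute_error(true_ages, estimated_ages)
within_five = share_within(true_ages, estimated_ages, 5)

print(mae, within_five)
```

The two numbers answer different questions: the MAE says how far off the estimates are on average, while the within-five-years share says how often an estimate is close enough to be useful.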

NIST is just ramping up the testing right now, but once it moves forward, it will be possible to compare age estimation accuracy of various algorithms, presumably in multiple scenarios.

Well, for those algorithm providers who choose to participate.

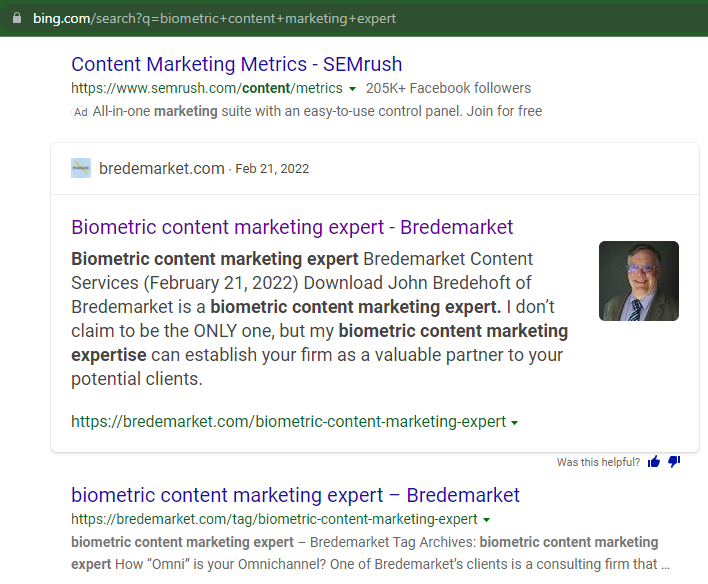

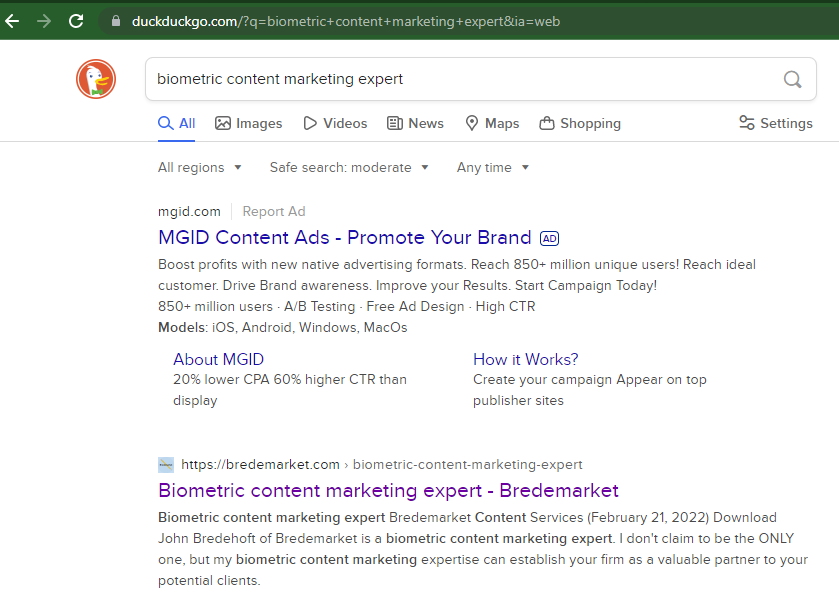

Does your firm need to promote its age estimation solution?

Does your company have an age estimation solution that is superior to all others?

Do you need an experienced identity professional to help you spread the word about your solution?

Why not consider Bredemarket? If your identity business needs a written content creator, look no further.