Some people like to look at baseball statistics or movie reviews for fun.

Here at Bredemarket, we scan the latest one-to-many (identification) results from NIST’s Ongoing Face Recognition Vendor Test (FRVT).

Hey, SOMEBODY has to do it.

Dicing and slicing the FRVT tests

For those who have never looked at FRVT before, it does not merely report accuracy for searches against one database. It reports accuracy for searches against eight databases of different types and different sizes (N).

- Mugshot, Mugshot, N = 12000000

- Mugshot, Mugshot, N = 1600000

- Mugshot, Webcam, N = 1600000

- Mugshot, Profile, N = 1600000

- Visa, Border, N = 1600000

- Visa, Kiosk, N = 1600000

- Border, Border 10+YRS, N = 1600000

- Mugshot, Mugshot 12+YRS, N = 3000000

This is actually good for the vendors who submit their biometric algorithms, because even if the algorithm performs poorly on one of the databases, it may perform wonderfully on one of the other seven. That’s how so many vendors can trumpet that their algorithm is the best. When you throw in other qualifiers such as “top five,” “best non-Chinese vendor,” and even “vastly improved,” you can see how dozens of vendors can issue “NIST says we’re the best” press releases.
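To see how several vendors can each claim a "best" at the same time, here is a minimal Python sketch. The vendor names ("Vendor A" and so on) and the error rates are made up for illustration and are NOT NIST results; only the database names come from the list above, and I only use four of them. The sketch assumes a single error rate per vendor per database, where lower is better.

```python
# Hypothetical error rates (lower is better); NOT real NIST FRVT results.
# Keys are four of the database pairs listed above; vendor names are invented.
ERROR_RATES = {
    ("Mugshot", "Mugshot"): {"Vendor A": 0.002, "Vendor B": 0.003, "Vendor C": 0.004},
    ("Mugshot", "Webcam"): {"Vendor A": 0.012, "Vendor B": 0.009, "Vendor C": 0.011},
    ("Visa", "Border"): {"Vendor A": 0.006, "Vendor B": 0.007, "Vendor C": 0.005},
    ("Visa", "Kiosk"): {"Vendor A": 0.090, "Vendor B": 0.080, "Vendor C": 0.070},
}


def rank_vendors(scores):
    """Return vendor names ordered from lowest to highest error rate."""
    return sorted(scores, key=scores.get)


def best_claims(error_rates):
    """Map each vendor to the databases on which it ranks first."""
    claims = {}
    for dataset, scores in error_rates.items():
        winner = rank_vendors(scores)[0]
        claims.setdefault(winner, []).append(dataset)
    return claims


if __name__ == "__main__":
    for vendor, datasets in best_claims(ERROR_RATES).items():
        names = ", ".join(f"{first}/{second}" for first, second in datasets)
        print(f"{vendor} can claim 'most accurate' on: {names}")
```

With these invented numbers, all three vendors get to say "most accurate" about something, and that is before anyone adds a "top five" or "best in region" qualifier.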

Not that I knock the practice; after all, I myself have done this for years. But you need to know how to interpret these press releases, and what they’re really saying. Remember this when you read the vendor announcement toward the end of this post.

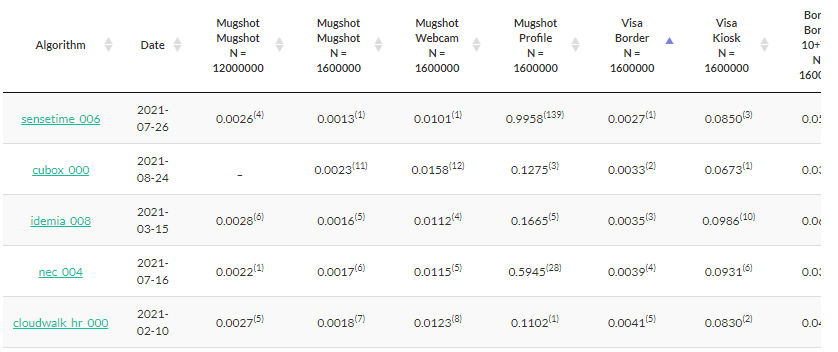

Anyway, I went to check the current results. When you first visit the page, they are sorted by the fifth database, Visa Border. And this is what I saw this morning (October 27):

For the most part, the top five for the Visa Border test contain the usual players. North Americans will be most familiar with IDEMIA and NEC, and Cloudwalk and Sensetime have been around for a while.

A new algorithm from a not-so-new provider

But I had never noticed Cubox in the NIST testing before. And the number attached to the Cubox algorithm, “000,” indicates that this is Cubox’s first submission.

And Cubox did exceptionally well, especially for a first submission.

As you can see by the superscripts attached to each numeric value, Cubox had the second most accurate algorithm for the Visa Border test, the most accurate algorithm for the Visa Kiosk test, and placed no lower than 12th in the six (of eight) tests in which it participated. Considering that 302 algorithms have been submitted over the years, that’s pretty remarkable for a first-time submission.

Well, as an ex-IDEMIA employee, my curious nature kicked in.

Who is Cubox?

I’ll start by telling you who Cubox is not. Specifically, Cubox is not CuBox the low-power computer.

The Cubox that submitted an algorithm to NIST is a South Korean firm with the website cubox.aero, self-described as “The Leading Provider in Biometrics” (aren’t they all?) with fingerprint and face solutions. Cubox competes in the access control and border control markets.

Cubox’s ten-year history and “overseas” page detail its growth in its markets, along with the solutions it has provided in South Korea, Mongolia, and Vietnam.

And although Cubox hasn’t trumpeted its performance on its own website (at least in the English version; I don’t know about the Korean version), Cubox has publicized its accomplishment in a LinkedIn post.

Why NIST tests aren’t important

But before you get excited about the NIST results from Cubox, Sensetime, or any of the algorithm providers, remember that the NIST test is just a test. NIST cautions people about this, I have cautioned people about this (see the fourth point in this post), and Mike French has also discussed this.

However, it is also important to remember that NIST does not test operational systems, but rather technology submitted as software development kits or SDKs. Sometimes these submissions are labeled as research (or just not labeled), but in reality it cannot be known if these algorithms are included in the product that an agency will ultimately receive when they purchase a biometric system. And even if they are “the same”, the operational architecture could produce different results with the same core algorithms optimized for use in a NIST study.

The very fact that test results vary between the NIST databases explicitly tells you that a number one ranking on one database does not mean that you’ll get a number one ranking on every database. And as French reminds us, when you take an operational algorithm in an operational system with a customer database, the results may be quite different.

Which is why French recommends that any government agency purchasing a biometric system should conduct its own test, with vendor operational systems (rather than test systems) loaded with the agency’s own data.

Incidentally, if your agency needs a forensic expert to help with a biometric procurement or implementation, check out the consulting services offered by French’s company, Applied Forensic Services.

And if you need help communicating the benefits of your biometric solution, check out the consulting services offered by my own company, Bredemarket. After all, I am a biometric content marketing expert.

- Send me an email at john.bredehoft@bredemarket.com.

- Or go to calendly.com/bredemarket to book a meeting with me.

- Or go to bredemarket.com/contact/ to use my contact form.
