(Part of the biometric product marketing expert series)

We get all sorts of great tools, but do we know how to use them? And what are the consequences if we don’t know how to use them? Could we lose the use of those tools entirely due to bad publicity from misuse?

Do your federal facial recognition users know what they are doing?

I recently saw a WIRED article that primarily talked about submitting Parabon NanoLabs-generated images to a facial recognition program. But buried in the article was this alarming quote:

According to a report released in September by the US Government Accountability Office, only 5 percent of the 196 FBI agents who have access to facial recognition technology from outside vendors have completed any training on how to properly use the tools.

From https://www.wired.com/story/parabon-nanolabs-dna-face-models-police-facial-recognition/

Now I had some questions after reading that sentence: namely, what does “have access” mean? To answer those questions, I had to find the study itself, GAO-23-105607, Facial Recognition Services: Federal Law Enforcement Agencies Should Take Actions to Implement Training, and Policies for Civil Liberties.

It turns out that the study is NOT limited to FBI use of facial recognition services, but also addresses six other federal agencies: the Bureau of Alcohol, Tobacco, Firearms and Explosives (the guvmint doesn’t believe in the Oxford comma); U.S. Customs and Border Protection; the Drug Enforcement Administration; Homeland Security Investigations; the U.S. Marshals Service; and the U.S. Secret Service.

In addition, the study confines itself to four facial recognition services: Clearview AI, IntelCenter, Marinus Analytics, and Thorn. It does not address other uses of facial recognition by the agencies, such as the FBI’s use of IDEMIA in its Next Generation Identification system (IDEMIA facial recognition technology is also used by the Department of Defense).

Two of the GAO’s findings:

- Initially, none of the seven agencies required users to complete facial recognition training. As of April 2023, two of the agencies (Homeland Security Investigations and the U.S. Marshals Service) required training, two (the FBI and Customs and Border Protection) did not, and the other three had quit using these four facial recognition services.

- The FBI stated that facial recognition training was recommended as a “best practice,” but not mandatory. And when something isn’t mandatory, you can guess what happened:

GAO found that few of these staff completed the training, and across the FBI, only 10 staff completed facial recognition training of 196 staff that accessed the service. FBI said they intend to implement a training requirement for all staff, but have not yet done so.

From https://www.gao.gov/products/gao-23-105607.

So if you use my three levels of importance (TLOI) model, facial recognition training is important, but not critically important. Therefore, it wasn’t done.

The detailed version of the report includes additional information on the FBI’s training requirements…I mean recommendations:

Although not a requirement, FBI officials said they recommend (as a best practice) that some staff complete FBI’s Face Comparison and Identification Training when using Clearview AI. The recommended training course, which is 24 hours in length, provides staff with information on how to interpret the output of facial recognition services, how to analyze different facial features (such as ears, eyes, and mouths), and how changes to facial features (such as aging) could affect results.

From https://www.gao.gov/assets/gao-23-105607.pdf.

However, this type of training was not recommended for all FBI users of Clearview AI, and was not recommended for any FBI users of Marinus Analytics or Thorn.

I should note that the report was issued in September 2023, based upon data gathered earlier in the year, and that for all I know the FBI now mandates such training.

Or maybe it doesn’t.

What about your state and local facial recognition users?

Of course, training for federal facial recognition users is only a small part of the story, since most of the law enforcement activity takes place at the state and local level. State and local users need training so that they can understand:

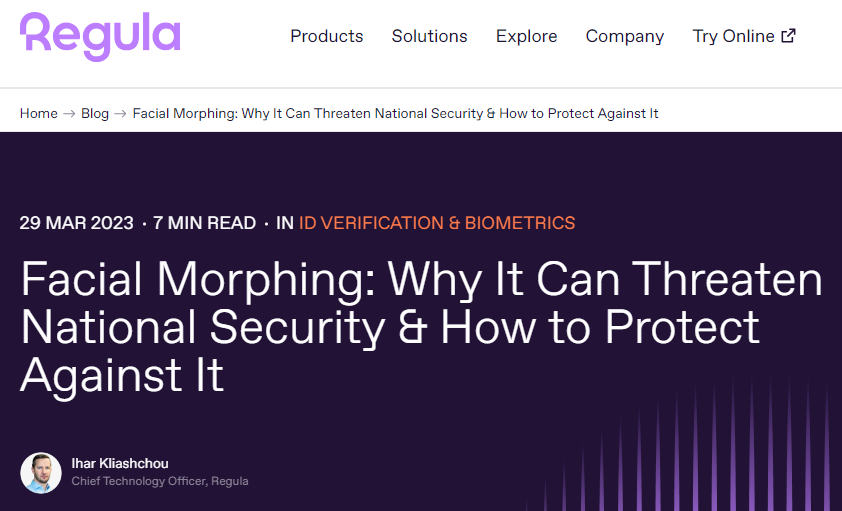

- The anatomy of the face, and how it affects comparisons between two facial images.

- How cameras work, and how this affects comparisons between two facial images.

- How poor-quality images can adversely affect facial recognition.

- How facial recognition should ONLY be used as an investigative lead.

If state and local users had received this training, none of the false arrests over the last few years would have taken place.

What are the consequences of no training?

Could I repeat that?

If facial recognition users had been trained, none of the false arrests over the last few years would have taken place.

- The users would have realized that the poor images were not of sufficient quality to determine a match.

- The users would have realized that even if the images had been of sufficient quality, facial recognition must only be used as an investigative lead, and once other data had been checked, the cases would have fallen apart.

But the false arrests gave the privacy advocates the ammunition they needed.

Not to insist upon proper training in the use of facial recognition.

But to ban the use of facial recognition entirely.

Like nuclear or biological weapons, facial recognition’s threat to human society and civil liberties far outweighs any potential benefits. Silicon Valley lobbyists are disingenuously calling for regulation of facial recognition so they can continue to profit by rapidly spreading this surveillance dragnet. They’re trying to avoid the real debate: whether technology this dangerous should even exist. Industry-friendly and government-friendly oversight will not fix the dangers inherent in law enforcement’s discriminatory use of facial recognition: we need an all-out ban.

From https://www.banfacialrecognition.com/

(And just wait until the anti-facial recognition forces discover that this is not only a plot of evil Silicon Valley, but also a plot of evil non-American foreign interests located in places like Paris and Tokyo.)

Because the anti-facial recognition forces want us to remove the use of technology and go back to the good old days…of eyewitness misidentification.

Eyewitness misidentification contributes to an overwhelming majority of wrongful convictions that have been overturned by post-conviction DNA testing.

Eyewitnesses are often expected to identify perpetrators of crimes based on memory, which is incredibly malleable. Under intense pressure, through suggestive police practices, or over time, an eyewitness is more likely to find it difficult to correctly recall details about what they saw.

From https://innocenceproject.org/eyewitness-misidentification/.

And the people misidentified by eyewitnesses don’t stay in jail for a night or two. Some of them remain in prison for years until the wrongful conviction is overturned.

Eyewitnesses, unlike facial recognition algorithms, cannot be tested for accuracy or bias.

And if we don’t train facial recognition users in the technology, then we’re going to lose it.