A Google Lyria song about privacy, even though modern privacy laws did not exist in 1930s Dust Bowl Oklahoma.

Tag Archives: privacy

U.S. Privacy Laws Haven’t Reached European Levels…Yet. Ask Marcin P.

There’s privacy, and there’s privacy. And this post, unlike the last one, is set on the other side of the Atlantic.

In October 2025, Interpol issued a red notice for the Chief Executive Officer of currency exchange Cinkciarz after Polish authorities charged him with orchestrating a fraud and money laundering scheme.

In May, United States authorities detained the CEO pending a Polish extradition request.

Naturally, the ongoing affair is being heavily reported in the Polish media…minus one teeny tiny detail.

The CEO’s last name.

Polish publications only identify him as “Marcin P.” due to Polish privacy laws.

The U.S. Marshals Service is under no obligation to comply with these laws, and printed the CEO’s last name in its media release. But on the slight chance that a Polish citizen may be reading the Bredemarket blog, I won’t reprint it here.

But Marcin P. is only a suspect

Of course, Marcin has not been convicted of a crime. But if he is eventually convicted, Polish law WILL allow publication of his last name.

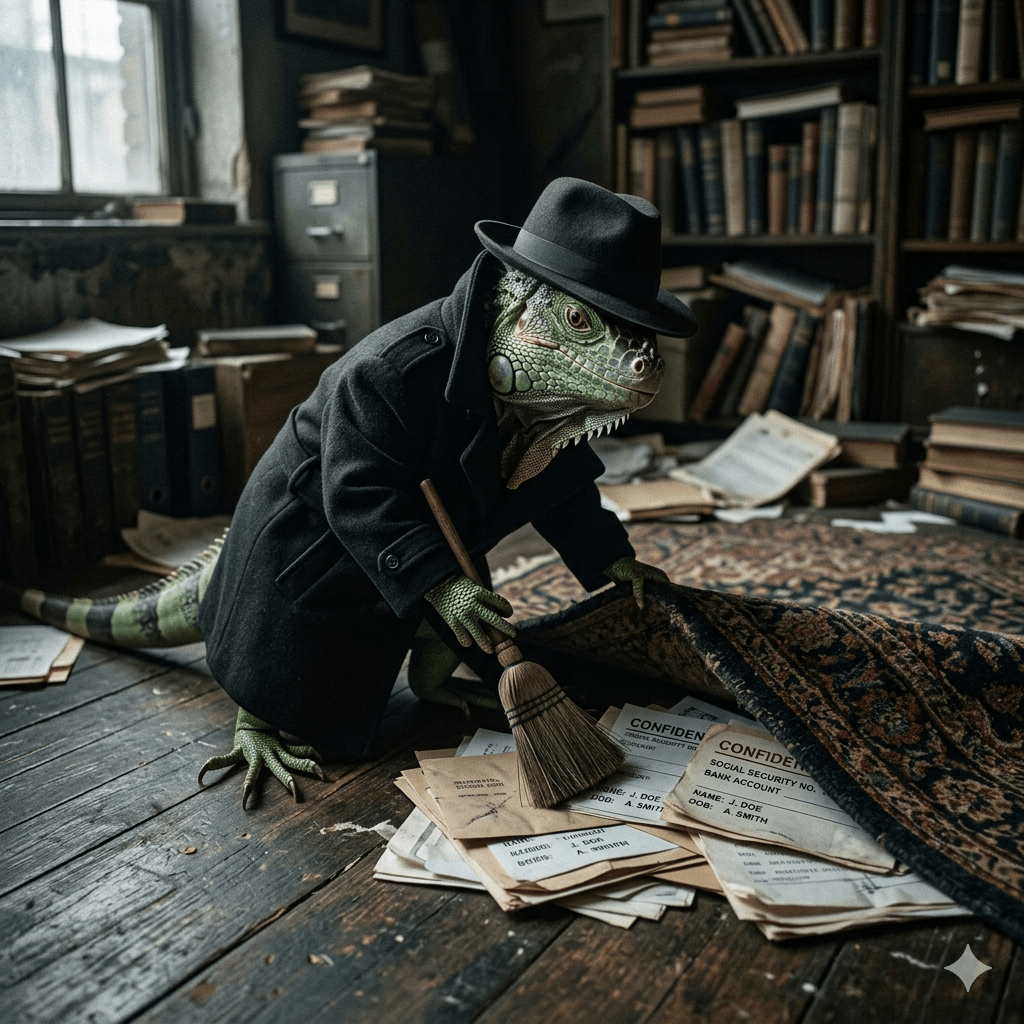

Unless he lodges a GDPR “right to erasure” request, a right that has been upheld in Luxembourg.

“The case concerns the former president of a trade union organisation from 1985 to 2002, against whom charges were brought for forgery, abuse of trust, fraud and theft. The case involved several million ‘Luxembourg Francs’ (the Euro banknotes were introduced in 2002) and hundreds of victims. The individual had confessed and was sentenced in 2007 for various offences to a prison sentence of six years, with a two-year suspended sentence….

“A TV program was broadcast in 2018, followed by a radio show in 2022. In the meantime, the individual filed a legal request in 2020 to prohibit the media outlet ‘from mentioning the name and publishing the image of the claimant on its TV broadcasts, radio programs, and websites in connection with its activities related to […], under penalty of a fine’….

“[T]he Court of Appeal found that the dissemination of the image and the publication of the name and surname were not necessary to achieve the goal of information.”

To date, I know of no case in the United States in which a convicted criminal’s name has been suppressed.

To date.

When is a Law Enforcement Camera a Surveillance Tool?

Here’s another instance where I take an old post and completely contradict it. Flying against the flock, as it were.

But before I launch into the topic of this post, I want to share a video that Daniel Solove shared. Apple originally created the video to emphasize the privacy features of its Safari browser. Just the Safari browser, not anything else like, say, where your car is.

The title of the video just coincidentally happens to be “Flock.”

And now for something completely different.

When is a Law Enforcement Camera a Law Enforcement Camera?

You may remember my March post “When is a Law Enforcement Camera a Law Enforcement Camera?” The post concluded as follows:

“Basically, Flock Safety is controversial, and some people are going to oppose ANYTHING they do. Even when Flock Safety technology protects people from dangerous drivers.

“My view is that if a camera is used by a law enforcement agency, and there is no law prohibiting the law enforcement agency from using a camera for a particular purpose, then the agency can use the camera. There appears to be no such law in Georgia, so I’m not bent out of shape over this.

“What are your thoughts? Is this a privacy violation?”

Comments, we get comments

I received a comment on the March post from a reader who felt that my “particular purpose” remark ignored the fact that the cameras can be used for other purposes…and ARE being used for other purposes. Without a search warrant. Here are a few excerpts from the comment:

“Every single one of these cameras takes images of every vehicle passing by on public roadways, even ones that are not owned or driven by a suspected criminal or the vehicle itself being suspected of being involved any crime. From there the images are processed by AI software logging the vehicles description and license plate number, along with the location of every one of the cameras that captured the same vehicle passing by along with a date and time of each passing, then that data is logged into a searchable database that can be searched by law enforcement up to 30 days (less or more in some places) without needing a search warrant signed by a judge….They can just search for anyone’s vehicles data regardless if there’s probable cause or not to do so.

“Law enforcement require a warrant prior to putting a GPS on someone’s vehicle, they are required to get a warrant before getting a persons cell phone ping and gps data from a mobile phone service company, but these systems are trying to be a loophole and pass any warrant requirements but provide the same type of private data that the other techniques require a search warrant for.”

The only comment I’ll offer is that it’s wise to make a distinction between how the cameras should be used and how the cameras are potentially used. Assuming that a law enforcement officer can search the database without a warrant, I believe that the officer should have probable cause to do so. The officer should not use a Flock database to stalk a romantic interest.

“In March, for instance, an officer resigned from the Milwaukee Police Department after allegedly using the department’s network of ALPRs to track his romantic partner and one of the partner’s exes nearly 180 times over a two-month period. His misconduct surfaced only after his victims looked up their license plate numbers on HaveIBeenFlocked.com, which collects Flock audit data that some local governments have made publicly available. MPD subsequently revoked most officers’ access to the Flock database.”

And so I ask you, again. What are your thoughts? Is this a privacy violation?

And if you need help writing about privacy…

I know a guy.

Now We’re Talking About Emojis

Summer Christine Duffield of New York has filed a lawsuit against the Walt Disney Company and related entities regarding alleged privacy violations at Disney’s California theme parks. I’m not sure whether she’s basing it on California law or something else, because the only cited cause of action in the summary of the filing is 28 U.S.C. § 1332 oc Diversity-Other Contract, which basically says that federal courts can handle civil cases between citizens of different states when more than $75,000 is at stake. Duffield is asking for millions.

At the Disney parks, some entrance lanes use facial recognition. Others do not.

In Biometric Update’s summary, it sounds like an EMOJI is at issue.

“The confusion here is in an emoji or graphic that Disney has used to indicate lanes for which facial recognition will not be used. The graphic shows a face with a slash through it – like no smoking, but for biometric data collection. However, the distinction is minor enough to cause confusion on its own, and Disney has not helped its case by failing to put, on its privacy notice, a working emoji; as it stands, customers may be forgiven for not understanding the difference between the two lanes, which Disney suggests are labeled identically.”

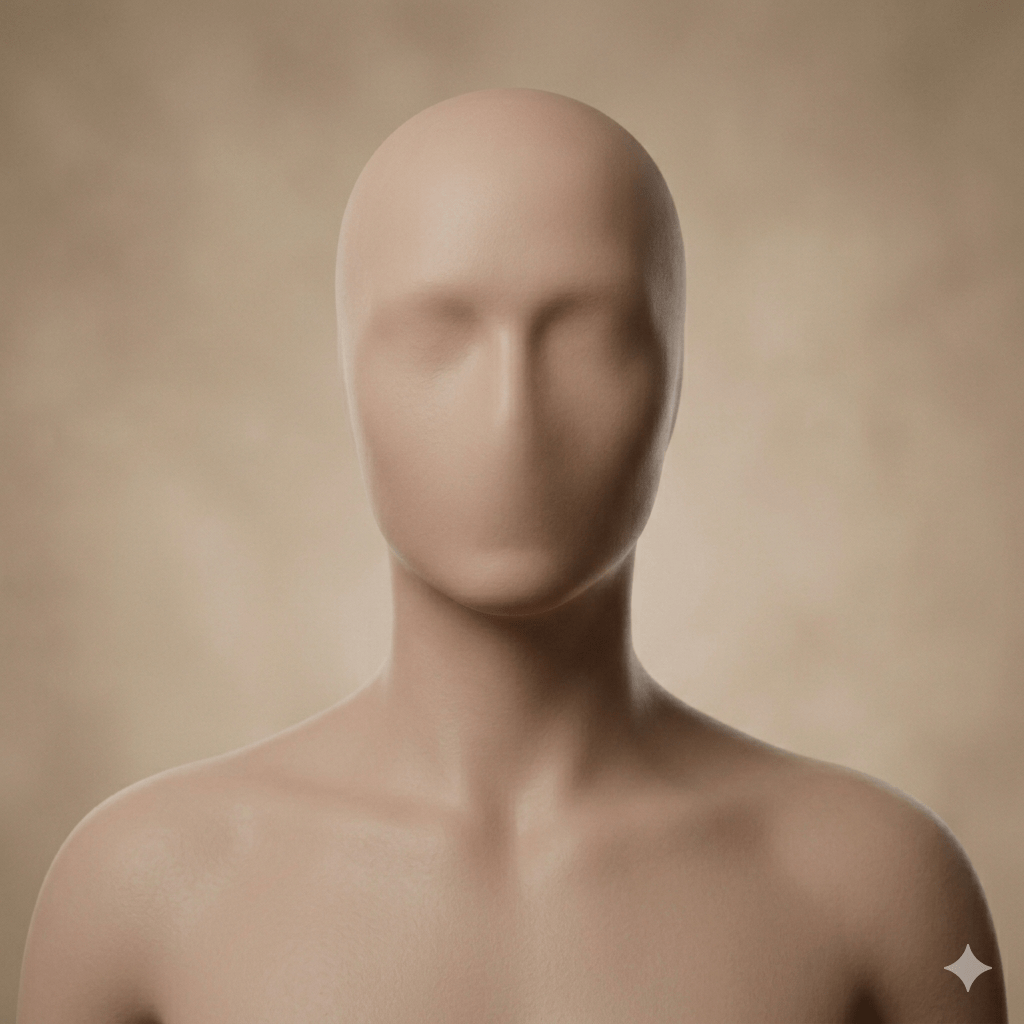

So much for emojis being straightforward. They can be interpreted in many ways. In the picture with this post, Google Gemini thought that the Disney symbol meant something entirely different.

If You Can’t Make It, Fake It: Generation of Synthetic Faces for Algorithmic Testing

Sometimes it seems like there’s a catch-22 in facial algorithm development. On the one hand, opponents complain: “How do you know these algorithms work if they’ve never been tested on real faces?” Then in the next breath they complain, “You can’t use the faces of real people to test your algorithms! That violates their privacy!”

So what do you do?

Fake it.

There are many ways to create fake faces for enterprise and consumer use, but how do we know that synthetic faces are sufficiently representative of real ones?

That’s the challenge these researchers faced:

“Face recognition models are trained on large-scale datasets, which have privacy and ethical concerns. Lately, the use of synthetic data to complement or replace genuine data for the training of face recognition models has been proposed. While promising results have been obtained, it still remains unclear if generative models can yield diverse enough data for such tasks. In this work, we introduce a new method, inspired by the physical motion of soft particles subjected to stochastic Brownian forces, allowing us to sample identities distributions in a latent space under various constraints. We introduce three complementary algorithms, called Langevin, Dispersion, and DisCo, aimed at generating large synthetic face datasets. With this in hands, we generate several face datasets and benchmark them by training face recognition models, showing that data generated with our method exceeds the performance of previously GAN-based datasets and achieves competitive performance with state-of-the-art diffusion-based synthetic datasets. While diffusion models are shown to memorize training data, we prevent leakage in our new synthetic datasets, paving the way for more responsible synthetic datasets.”

If you want to see the synthetic data these researchers created, and if you have the ability to uncompress tar.gz files (Mac and Windows 11 support this), visit this page.
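And if your operating system doesn’t unpack tar.gz files for you, a few lines of Python will. This is only a minimal sketch using Python’s standard `tarfile` module. The archive name `synthetic_faces.tar.gz` and the function name `extract_tar_gz` are placeholders I made up, not the researchers’ actual file name or anything from their page.

```python
import tarfile
from pathlib import Path


def extract_tar_gz(archive_path: str, dest_dir: str) -> list[str]:
    """Extract a .tar.gz archive into dest_dir and return the member names."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive_path, mode="r:gz") as archive:
        names = archive.getnames()
        if hasattr(tarfile, "data_filter"):
            # Recent Python versions can refuse unsafe paths inside an archive.
            archive.extractall(path=dest, filter="data")
        else:
            # Older versions lack the filter, so only open archives you trust.
            archive.extractall(path=dest)
    return names


if __name__ == "__main__":
    # Hypothetical file name: substitute whatever you actually downloaded.
    for name in extract_tar_gz("synthetic_faces.tar.gz", "synthetic_faces"):
        print(name)
```

The function returns the list of names inside the archive, so you can see what you unpacked before you start browsing thousands of fake faces.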

How Much “Privacy First”?

While extremes resonate, they may not be practical.

Take “privacy first.”

Our intuition tells us that a lack of privacy is bad, so companies give us what we want. Privacy.

The privacy first extreme is exemplified by World, formerly Worldcoin. World can theoretically build a database of the irises of millions of people…but by design it does not know who any of them are. Am I eligible to vote in California? No idea.

Another extreme is exemplified by how we respond to ad-related queries. Our responses are understandable.

- When I see an ad that reads, “John, MBAs in Ontario, California are drinking this smoothie,” I wonder what else “they” know about me. (And yes, they know my age.)

- So I go to the extreme and decide that I don’t want “them” to know anything about me.

- Seems like a good idea until I start seeing ads for pink miniskirts…and the ads are in Chinese.

- I prepare to complain and ask why I’m seeing these ads, but then I remember that by design, the advertisers don’t know me from Adam…or, apparently, Eve.

So the privacy debate is not Boolean but is more nuanced.

- What types of personally identifiable information or protected health information will a system store?

- Who can access it?

- What happens when (not if) the system is breached?

On Ethics in Identity and Healthcare

I almost asked Google Gemini to perform a completely warped action.

I almost asked Gemini to write a post, in the style of Bredebot, explaining how ethics is detrimental to business.

But I didn’t write such a standalone post. Instead I asked Gemini to write a single paragraph.

While ethics is fundamentally viewed as a cornerstone of long-term brand equity, from a purely short-term, transactional perspective, it can be framed as a significant operational burden. In a hyper-competitive market, adhering to rigorous ethical standards often acts as a self-imposed “tax” that competitors may simply bypass. Strict compliance with environmental regulations, fair wage practices, and transparent sourcing can inflate overhead costs, leading to higher price points that may alienate price-sensitive consumers. Furthermore, ethical considerations can paralyze speed-to-market; while a competitor might prioritize rapid scaling through “growth hacking” or aggressive data harvesting, an ethically-bound company may lose its first-mover advantage by pausing for privacy audits or impact assessments. In this light, ethics is seen as a friction point that restricts a firm’s agility, limits immediate profit margins, and creates a strategic disadvantage against “bad actors” who prioritize raw efficiency over moral sentiment.

But even when receiving this instruction, Gemini emphasized that the advantage is “short-term” and “transactional.”

I assert that it’s especially transactional for companies that deal with private data: personally identifiable information (PII), protected health information (PHI), or its variants. Because if a company with your private data behaves unethically, you will be rightfully outraged.

Which is why companies such as Paravision and Reality Defender emphasize ethics.

Paravision in 2023:

“Whether it’s in facial recognition technology, hiring processes, or healthcare diagnostics, the consequences of biased AI can be far-reaching. Paravision heavily invests into broad and diverse training datasets to fight bias, and we must remain vigilant and ensure that AI systems are developed with fairness and equity in mind, and commit to improving the performance of AI algorithms across all demographics.”

Reality Defender in 2026:

“The committee’s job isn’t to bless what we ship, but to push back on it. This pushback includes (but is not limited to) operational questions, how we communicate uncertainty in a verdict, how we handle false positives at scale, and who has access to flagged content (and for how long).

“It also includes harder questions. What duty do we owe a worker authenticated through RealMeeting who didn’t choose to be authenticated? What happens when a regulator asks for our verdicts as evidence in a proceeding? How do we draw the line when a customer wants to use detection in a way we don’t think is appropriate?”

How does your identity or health vendor handle ethical issues? Or is a short-term and transactional benefit good enough?

How Do Privacy Professionals Feel About the SECURE Data Act?

How should businesses, governments, and individuals treat private data? In the United States, the answer varies from state to state, and even from city to city.

A proposed national solution to the hodgepodge of differing state and local privacy regulations is the so-called SECURE Data Act.

Privacy

But how do privacy professionals feel about it?

I knew that CalPrivacy opposed the bill, so I wanted to see how Daniel Solove regarded it.

“Congress’s latest foray is a new bill called the SECURE Data Act, a piece of garbage cooked up by Republicans as a gift to industry in a climate where the public is deeply concerned about privacy, outraged at the harms tech is causing, and yearning for ways to hold Big Tech accountable.

“I can’t stress enough how awful this bill is. On balance, if passed into law, it will do dramatic harm to privacy. It will leave people less protected than if it didn’t exist. I’d call it more of an anti-privacy law than a privacy law.”

Um…I don’t think Solove likes it.

Preemption

One of the huge issues that privacy advocates have is preemption. To ensure uniform privacy across the United States, the proposed SECURE Data Act preempts any state laws that exceed its protections. Therefore the privacy protections in states such as California, Illinois, and Texas could be revoked.

Of course, this is a classic struggle in state-federal relations. The staunchest states’ rights advocate will suddenly switch sides if they agree with a federal regulation, and vice versa. Citing one example, gay marriage began as a states’ rights issue when only a few states supported it, then became a federal rights issue.

Another example is the federal drive to eliminate the U.S. Department of Education. This has not happened yet. And it never will, once the powers that be realize that its elimination will allow blue states to teach whatever they want.

Secure

But let’s go back to the title of the bill, the SECURE Data Act. Upon seeing the title, the average voter would assume that the bill secures our individual privacy rights.

But the privacy advocates believe that the bill actually secures the right of entities to do what they want with our personally identifiable information, with minimal restrictions.

I think the bill’s title may have been written by Eric Arthur Blair.

(Image by Google Gemini. Sorry, Ontario Canada; Gemini may make mistakes.)

Jurisdictional Privacy and Consent

Where are you?

Who are you?

The answers to these questions affect whether and how you obtain consent to use someone’s personally identifiable information, or PII.

Privacy regulations can change when you cross country or even city lines, and they can also change depending on who you are: an individual, a business, or a government agency.

How?

- On one extreme, some entities in some jurisdictions don’t need to obtain consent at all. For example, the General Data Protection Regulation (GDPR) doesn’t require consent to perform an anti-money laundering (AML) check, since this is a legal obligation.

- On the other extreme, some entities in some jurisdictions must obtain express written consent. If I am a homeowner in Schaumburg, Illinois, and I use a doorbell camera to identify friends or foes approaching my door, the Biometric Information Privacy Act (BIPA) prohibits me from capturing their biometrics without their consent, and lets them sue me if I do it anyway.

Before you collect PII, check the laws in your jurisdiction first.

Oh, and check the laws in other jurisdictions in case they try to enforce their laws in your jurisdiction.

By the way: if you’re a software or hardware vendor, don’t assume that you bear no responsibility and that only your customer does.

You must educate your customers.

And Bredemarket can help you with my content-proposal-analysis services.

(Told you I’d bring this landing page back.)

Oklahoma Consumer Data Privacy Act…For Now

Yet another state has passed its own data privacy law, with the Oklahoma Consumer Data Privacy Act signed last month and taking effect in 2027. The key particulars:

“OKDPA grants consumers a set of rights…including rights of access, deletion, correction, and portability, and rights to opt-out of targeted advertising, sale, or profiling ‘in furtherance of a decision that produces a legal or similarly significant effect concerning the consumer.’”

As for enforcement:

“Enforcement authority rests with the Oklahoma Attorney General. The bill includes a mandatory 30-day cure period, which does not sunset. The law imposes civil penalties of up to $7,500 per violation.”

As of now, between 19 and 22 states have privacy laws, depending upon how you count.

- Some aren’t counting Florida because of its limited scope. It only applies to companies with over $1 billion in revenue.

- Some aren’t counting Illinois because BIPA only applies to biometrics.

- Some aren’t counting Oklahoma yet because it’s so new.

But we can agree that many states have privacy laws.

For now

And if some have their way, they will all disappear, to be replaced by a single uniform federal law. However, the level of preemption of state laws is an issue of discussion. The Future of Privacy Forum has addressed preemption here.

And if you need to write about privacy, biometric or otherwise, Bredemarket can help. Click below to book a free meeting with me.

Here is a video about my services.