I know that the experts say that “too much knowledge is actually bad in tech.” But based upon what I just saw from an (unnamed) identity verification company, I assert that too little knowledge is much worse.

As a biometric product marketing expert and biometric product marketing writer, I pay a lot of attention to how identity verification companies and other biometric and identity companies market themselves. Many companies know how to speak to their prospects…and many don’t.

Take a particular company, which I will not name. Here is the “marketing” from this company.

- We have funding!

- We have long lists of features!

- We offer lower pricing than selected competitors!

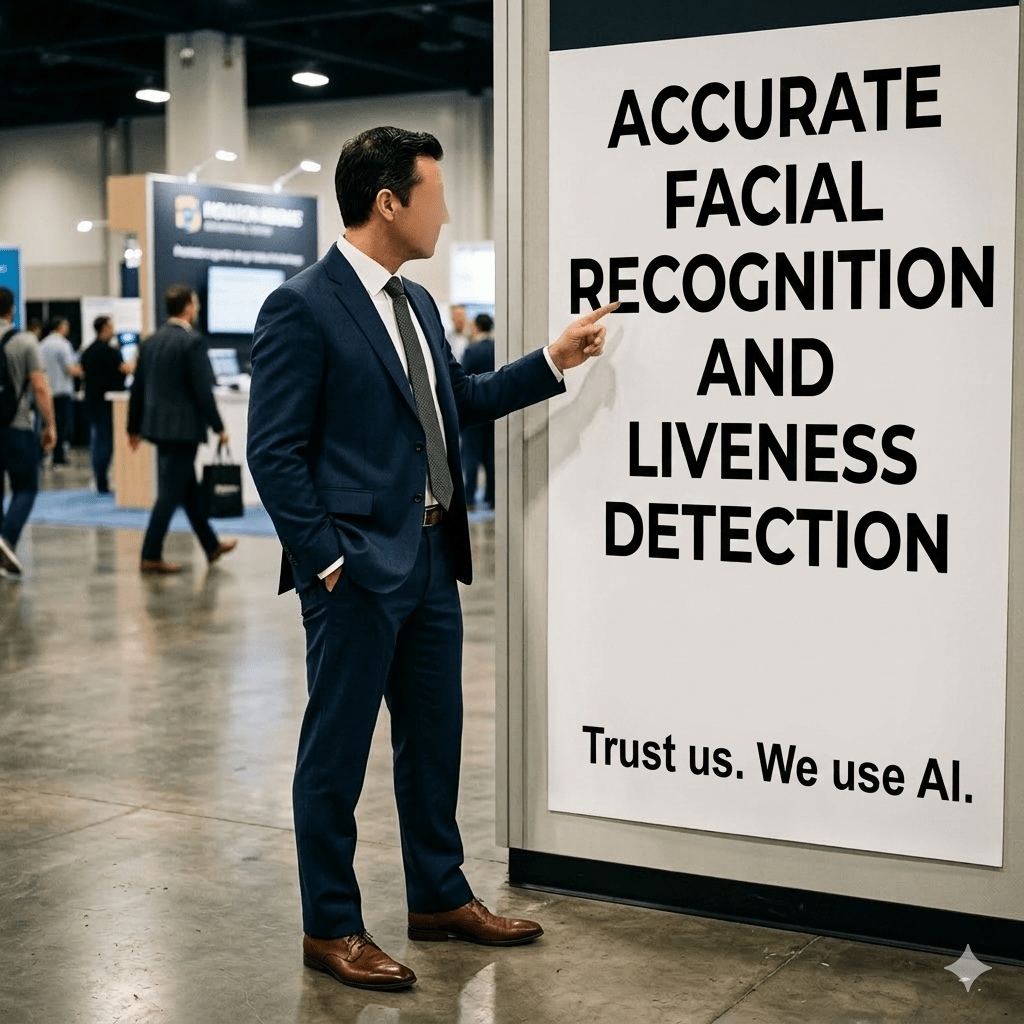

- We claim high facial recognition accuracy but don’t publish our NIST FRTE results! (While the company claims to author its technology, the company name does not appear in either the NIST FRTE 1:1 or NIST FRTE 1:N results.)

- We claim liveness detection (presentation attack detection) but don’t publish any confirmation letters! (Again, I could not find the company name on the confirmation letter lists from BixeLab or iBeta.)

So what is the difference between this company and the other 100+ identity verification companies…many of which explicitly state their benefits, trumpet their NIST FRTE performance, and trumpet their third-party liveness detection confirmation letters?

If you claim great accuracy and great liveness detection but can’t support it via independent third-party verification, your claim is worthless: it invites a “so what?” from prospects. Prove your claims.

Now I’m sure I could help this company. Even if they have none of the certifications or confirmations I mentioned, I could at least get the company to focus on meaningful differentiation and meaningful benefits. But there’s no need to even craft a Bredemarket pitch to the company, since the only marketer on staff is an intern who is indifferent to strategy.

Many companies assert that all they need is a salesperson, an engineer, an African data labeler, and someone to run the generative AI for everything else…yet there are dozens of competitors doing the exact same thing.

But some aren’t. Some identity/biometric companies are paying attention to their long-term viability, and are creating content, proposals, and analyses that support that viability.

Take a look at your company’s marketing. Does it speak to prospects? Does it prove that you will meet your customers’ needs? Or does it sound like every other company that’s saying “We use AI. Trust us”?

And if YOUR company needs experienced help in conveying customer-focused benefits to your prospects…contact Bredemarket. I’ve delivered meaningful biometric materials to two dozen companies over the years. And yes, I have experience. Let me use it to your advantage.