I’ve talked ad nauseam about the need for a firm to differentiate itself from its competitors. If your firm engages in “me too” marketing, prospects have no reason to choose you.

But what about companies that DO differentiate themselves…and suddenly stop doing so?

There are four reasons why companies could stop differentiating themselves:

- The differentiator no longer exists.

- The differentiator is no longer important to prospects.

- The market has changed and the differentiator is no longer applicable.

- The differentiator still exists, but the company forgot about it.

Let’s look at these in turn.

The differentiator no longer exists

Sometimes companies gain a temporary competitive advantage that disappears as other firms catch up. But more often, the company only pursues the differentiator temporarily.

In 1985, amid anxiety about trade deficits and the loss of American manufacturing jobs, Walton launched a “Made in America” campaign that committed Wal-Mart to buying American-made products if suppliers could get within 5 percent of the price of a foreign competitor. This may have compromised the bottom line in the short term, but Walton understood the long-term benefit of convincing employees and customers that the company had a conscience as well as a calculator.

From https://reclaimdemocracy.org/brief-history-of-walmart/.

Now some of you may not remember Walmart’s “Made in America” banners, but I can assure you they were prevalent in many Walmarts in the 1980s and 1990s. Sam Walton’s autobiography even used the phrase in its title.

But as time passed, Walmart stocked fewer and fewer “Made in America” items as customers valued low prices over everything else. And some of the “Made in America” banners in Walmarts in the 1990s shouldn’t have been there:

“Dateline NBC” produced an exposé on the company’s sourcing practices. Although Wal-Mart’s “Made in America” campaign was still nominally in effect, “Dateline” showed that store-level associates had posted “Made in America” signs over merchandise actually produced in far away sweatshops. This sort of exposure was new to a company that had been a press darling for many years, and Wal-Mart’s stock immediately declined by 3 percent.

From https://reclaimdemocracy.org/brief-history-of-walmart/.

The decline was only temporary; Walmart stock bounced back. And 20 years later, the cycle would repeat as Walmart launched a similar “Made in USA” campaign in 2013, only to run into Federal Trade Commission (FTC) enforcement actions two years later.

The differentiator is no longer important

The Walmart domestic production episodes illustrate something else. If Walmart wanted to, it could have persevered and bought from domestic suppliers, even if the supplier price differential was greater than 5%.

But Walmart’s customers didn’t really care.

Affordability was much more important to buyers than U.S. job creation.

So while labor leaders, politicians, and others may have complained about Walmart’s increasing reliance on Chinese goods, the company’s customers kept shopping there, and Walmart stayed profitable.

And before you decry the actions of consumers who act against their national self-interest…where was YOUR phone manufactured? China? Vietnam? Unless you own a Librem 5 USA, your phone isn’t from around here. We’re all Commies.

The market has changed

Sometimes the market changes and consumers look at things a little differently.

I’ve previously told the story of Mita and its 1980s slogan “all we make are great copiers.” In essence, Mita had to adopt this slogan because, unlike its competitors, it did NOT have a diversified portfolio.

This worked for a while…until the “document solutions” industry (copiers and everything else) embraced digital technologies. Well, Fuji-Xerox, Ricoh, and Konica did. Mita didn’t, and it went bankrupt.

The former Mita is now part of Kyocera Document Solutions.

And the company doesn’t even offer stand-alone copiers anymore.

The company forgot

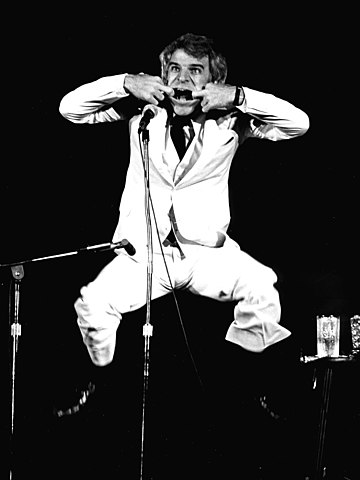

Before Walmart emphasized “Made in America” products, former (and present) stand-up comedian Steve Martin was dispensing tax advice.

“Steve.. how can I be a millionaire.. and never pay taxes?” First.. get a million dollars. Now.. you say, “Steve.. what do I say to the tax man when he comes to my door and says, ‘You.. have never paid taxes’?” Two simple words. Two simple words in the English language: “I forgot!”

From https://tonynovak.com/how-to-be-a-millionaire-and-not-pay-any-taxes/.

While the IRS will not accept this defense, there are times when people, and companies, forget things.

- I know of one company that had a clear differentiator over most of its competition: the fact that a key component of its solution was self-authored, rather than being sourced from a third party.

- For a time, the company strongly emphasized this differentiator, casting fear, uncertainty, and doubt on competitors that depended upon third parties for this key component.

- But time passes, priorities change, and the company’s website now buries this differentiator on a back page…making the company sound like all its competitors.

But the company has an impressive array of features, so there’s that.

Restore your differentiators

If your differentiators have faded away or no longer matter, perhaps it’s time to re-emphasize them, or find new ones, so that your prospects have a reason to choose you.

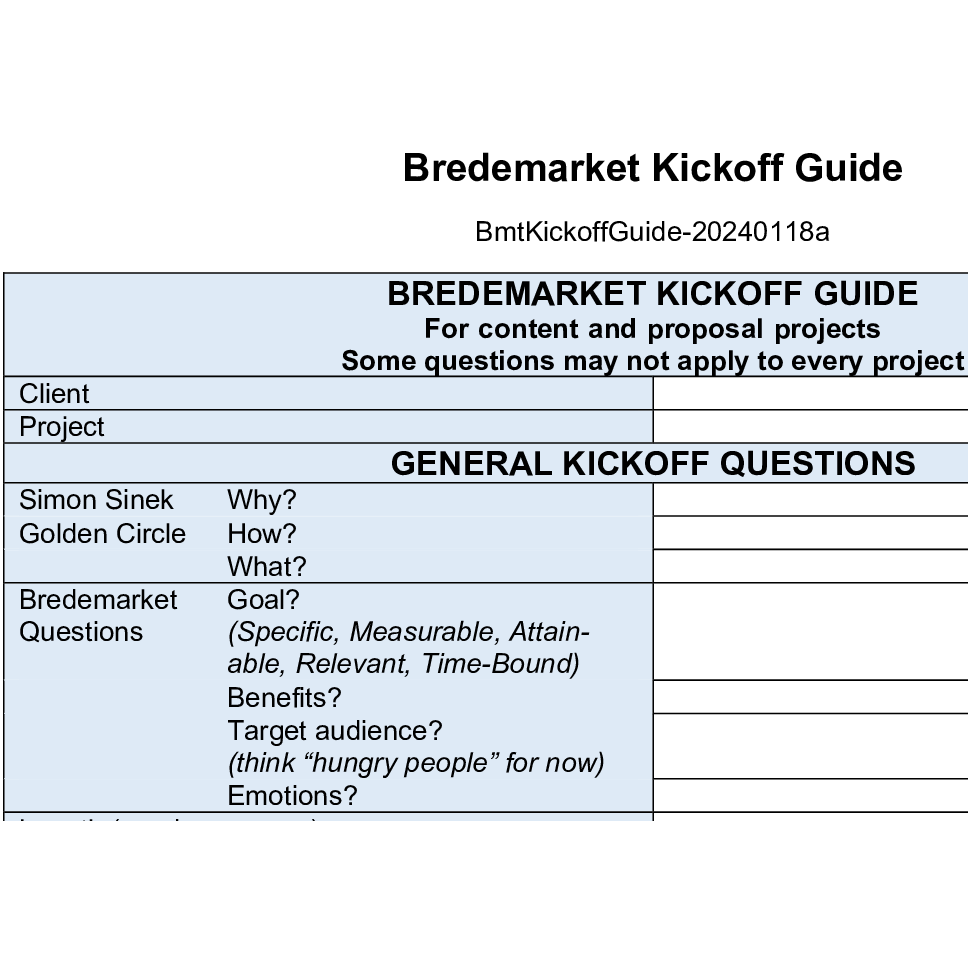

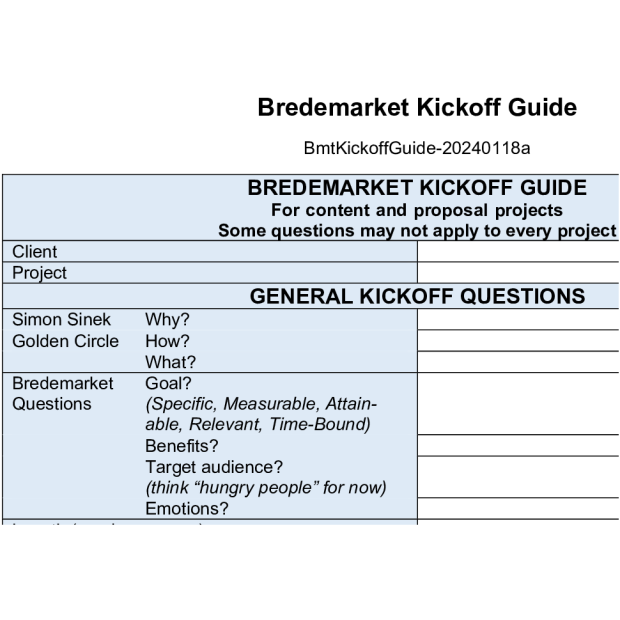

Ask yourself questions about why your firm is great, why all the other firms suck, and what benefits (not features) your customers enjoy that the competition’s customers don’t. Only THEN can you create content (or have your content creator do it for you).

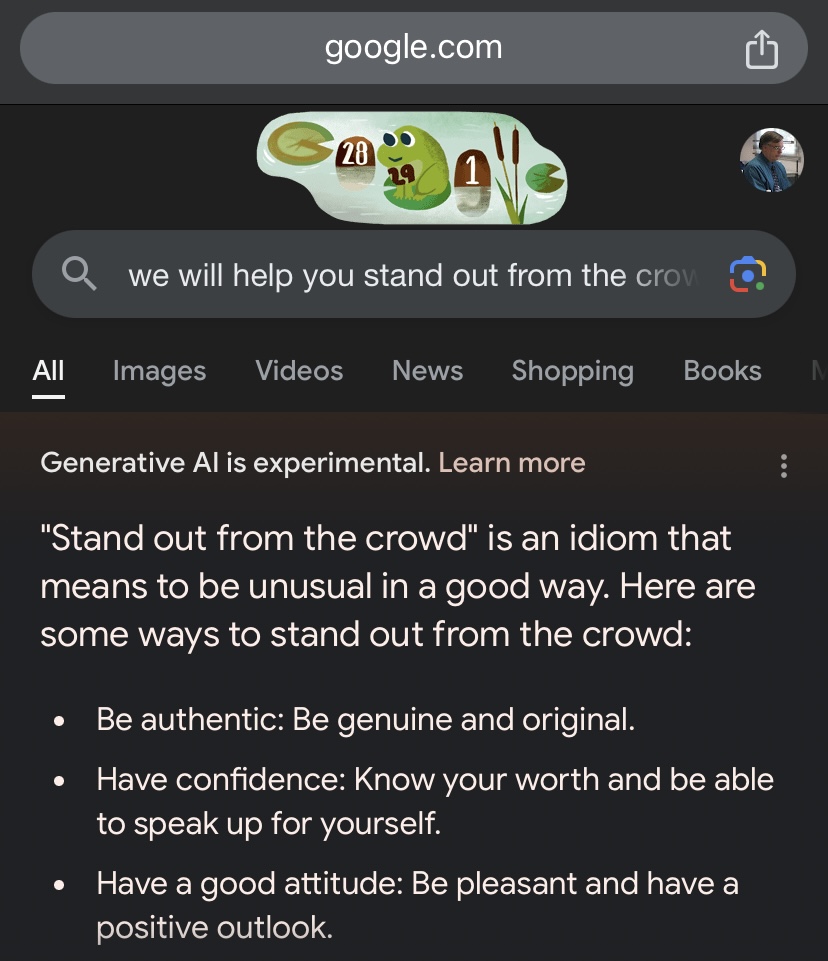

A little postscript: originally I was only going to list three items in this post, but Hana LaRock counsels against this because bots default to three-item lists (see her item 4).