(Image from LockPickingLawyer YouTube video)

This metal injection attack isn’t from an Ozzy Osbourne video, but from a video made by an expert lock picker in 2019 against a biometric gun safe.

The biometric gun safe is supposed to deny access to a person whose fingerprint biometrics aren’t registered (and who doesn’t have the other two access methods). But as Hackaday explains:

“(T)he back of the front panel (which is inside the safe) has a small button. When this button is pressed, the device will be instructed to register a new fingerprint. The security of that system depends on this button being inaccessible while the safe is closed. Unfortunately it’s placed poorly and all it takes is a thin piece of metal slid through the thin opening between the door and the rest of the safe. One press, and the (closed) safe is instructed to register and trust a new fingerprint.”

Biometric protection is of no use if you can bypass the biometrics.

But was the safe (subsequently withdrawn from Amazon) over promising? The Firearm Blog asserts that we shouldn’t have expected much.

“To be fair, cheap safes like this really are to keep kids, visitors, etc from accessing your guns. Any determined person will be able to break into these budget priced sheet metal safes….”

But still the ease at bypassing the biometric protection is deemed “inexcusable.”

So how can you detect this injection attack? One given suggestion: only allow the new biometric registration control to work when the safe is open (meaning that an authorized user has presumably opened the safe). When the safe is closed, insertion of a thin piece of metal shouldn’t allow biometric registration.

For other discussions of injection attack detection, see these posts: one, two.

By the way, this is why I believe passwords will never die. If you want a cheap way to lock something, just use a combination. No need to take DNA samples or anything.

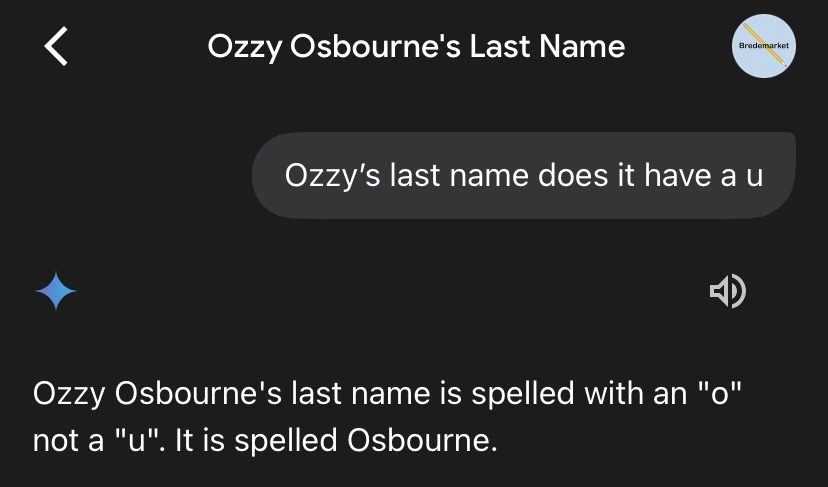

Oh, and a disclosure: I used Google Gemini to research this post. Not that it really helped.