I’ve been trying to add more local (Inland Empire West) content to the Bredemarket blog. Obviously I’m attempting to promote Bredemarket’s services to local businesses by writing local-area content such as these two recent posts centered on Upland.

Old SEO

Back in the old days, I might have optimized for my local audience by going to the bottom of relevant pages on the Bredemarket website and inserting hundreds of words in very small gray text that cite every single Inland Empire West community (yes, even Narod) and every single service that Bredemarket provides. The rationale was that this additional text would help with search engine optimization (SEO), so that the next person searching for “case study writer in Narod, California” would automatically go to the page with all of the small gray text.

Of course, that doesn’t work anymore, because Google penalizes keyword stuffers and content stuffers who create these unnatural pages.

More modern SEO

I use more acceptable forms of SEO. For example, I’ve devoted significant effort to making sure that the two phrases “biometric content marketing expert” and “biometric proposal writing expert” direct searchers to the relevant pages on this website. (Provided, of course, that someone is actually searching for a biometric content marketing expert or a biometric proposal writing expert.)

I believe SEO is important.

However, SEO is not very important (NVI).

(As an aside, I commonly distinguish between “important” stuff and “very important” stuff. And you also have to distinguish between importance and urgency; see the Eisenhower matrix.)

What is very very important (VVI)? Human readable format (HRF).

The two audiences: the bots, and the real humans

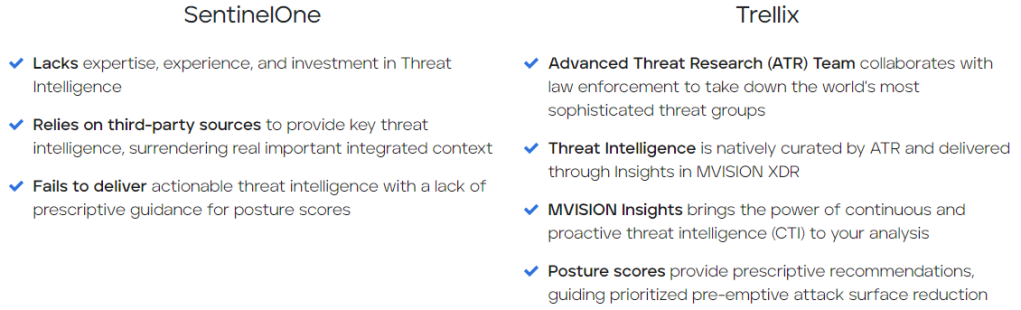

And SEO-optimized content may differ from human-readable content because the two have different audiences. SEO text is written for machines, not people. In extreme cases, SEO-optimized text is unreadable by humans, and human readable format cannot be interpreted by machine-based web crawlers.

Here’s a comparison:

| Machine readable | Human readable |
| --- | --- |
| Easily parsed and processed by systems | Easily read by humans |
| Hard for humans to read | Hard for machines to read |
| Adheres to FAIR principles (findable, accessible, interoperable, reusable) | Communicates with humans via visual presentation |
| Example: machines have problems reading tables like this one | Example: humans can easily read and understand tables like this one |
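To make the comparison concrete, here is a minimal Python sketch that renders the same information both ways. The service and city pairings and the function names are invented for illustration; they are not taken from the Bredemarket website.

```python
import json

# Illustrative rows only: these pairings are invented for the example.
services = [
    {"service": "case study writing", "city": "Upland"},
    {"service": "proposal writing", "city": "Narod"},
]


def to_machine_readable(rows):
    """Serialize the rows as compact JSON, which a program can parse directly."""
    return json.dumps(rows, separators=(",", ":"))


def to_human_readable(rows):
    """Lay the rows out as an aligned plain-text table for a person to scan."""
    headers = list(rows[0].keys())
    # Each column is as wide as its longest cell (or its header).
    widths = [max(len(h), *(len(str(r[h])) for r in rows)) for h in headers]
    lines = ["  ".join(h.title().ljust(w) for h, w in zip(headers, widths)).rstrip()]
    lines.append("  ".join("-" * w for w in widths))
    for r in rows:
        cells = (str(r[h]).ljust(w) for h, w in zip(headers, widths))
        lines.append("  ".join(cells).rstrip())
    return "\n".join(lines)


print(to_machine_readable(services))
print(to_human_readable(services))
```

The JSON line is dense and unambiguous for a program, while the aligned table relies on spacing and visual layout, which is exactly what a person wants and a simple parser does not.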

Here’s another example of how SEO-optimized text and human readable format sometimes diverge. I mentioned this example in a recent LinkedIn article:

I recently updated my proposal resume to include the headline “John E. Bredehoft, CF APMP,” under the assumption that this headline would impress proposal professionals. However, when I ran my resume through an ATS (applicant tracking system) simulator, it was unable to find my name because of its non-standard format. Because of this, there was a chance that a proposal professional would never even see my resume. I adjusted accordingly.

From https://www.linkedin.com/pulse/what-happens-when-proposal-evaluators-longer-human-bredemarket/

In this case, I had to cater to two separate audiences:

- The computerized applicant tracking systems that read resumes and decide which resumes to pass on to human beings.

- The human beings who read resumes, often after the applicant tracking systems have pre-selected the resumes to read.

I was able to come up with a workaround to satisfy both audiences, thereby ensuring that (a) my resume would get past the ATS, and (b) a human who viewed my ATS-approved resume could actually read it. It’s a clumsy workaround, but it works.
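I don’t know how the ATS simulator actually parses names, but a deliberately naive sketch shows how a headline with a certification appended could trip up a strict parser. The pattern and the `find_name` function below are assumptions made up for illustration, not the behavior of any real applicant tracking system.

```python
import re

# ASSUMPTION: a strict, made-up rule that accepts a headline as a name only if
# it consists of a capitalized first name, an optional middle initial, and one
# or more capitalized surnames. Real ATS parsers may work very differently.
NAME_ONLY = re.compile(r"^[A-Z][a-z]+(?: [A-Z]\.)?(?: [A-Z][a-z]+)+$")


def find_name(headline):
    """Return the headline if it looks like a bare name, otherwise None."""
    text = headline.strip()
    return text if NAME_ONLY.match(text) else None


print(find_name("John E. Bredehoft"))           # bare name
print(find_name("John E. Bredehoft, CF APMP"))  # name plus certification
```

Under this hypothetical rule the bare name is recognized, while the version with the trailing certification is rejected, even though a human reader has no trouble with either.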

While that particular example is complicated by the gate-keeping ubiquity of applicant tracking systems in the employment industry, it is not unique. All industries are depending more on artificial intelligence, and almost all human beings are turning to Google, Bing, DuckDuckGo, and the like to find stuff.

These are our users (who use search engines for EVERYTHING)

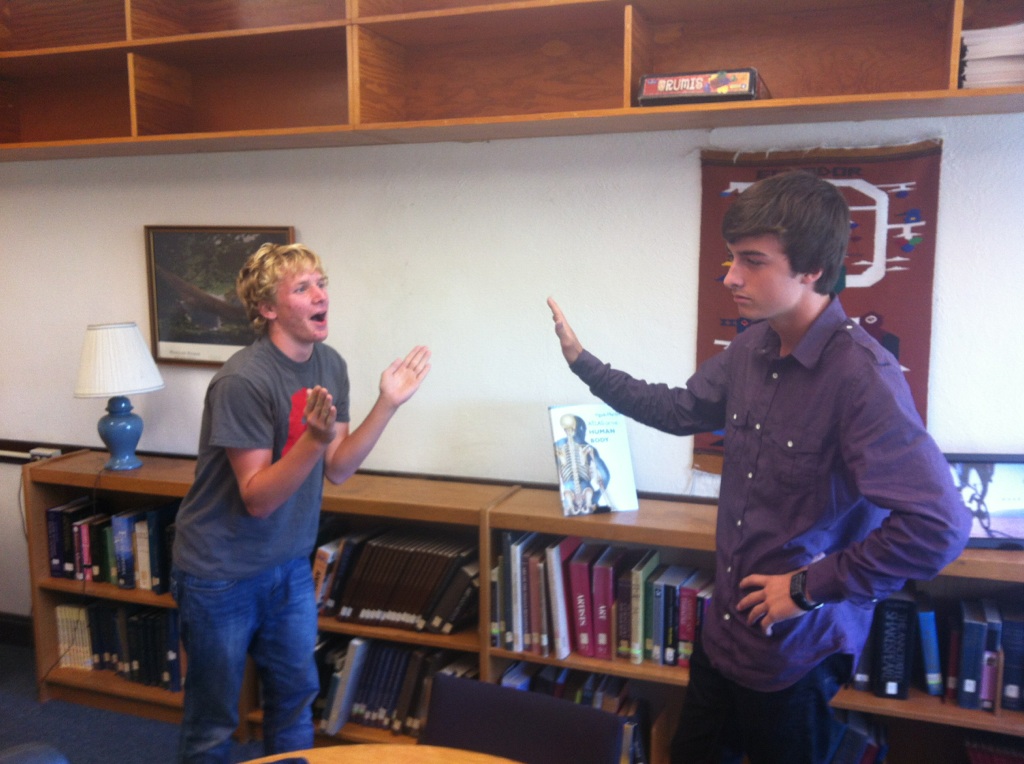

There’s an old example of how dependent we are on search engines. In a famous case over a decade ago, people used the Google search engine to get to the Facebook login page, and were confused when a non-Facebook page made its way to the top of the search results. The original article that prompted the brouhaha is gone, but Jake Kuramoto’s summary still remains. TL;DR:

ReadWriteWeb posted “Facebook Wants to Be Your One True Login”.

Google indexed the post.

The post became the top result for the keywords “facebook login”.

People using Google to find their way to Facebook were misdirected to the post.

The comments on the post were littered with unhappy people, unable to login to Facebook.

There are more than 300 comments on this post, the majority of them from confused Facebook users.

Despite the fact that RWW added bold text to the post, directing users to Facebook, and the fact that the post is no longer the top result for “facebook login”, people continue to arrive there by accident, looking for Facebook.

From http://theappslab.com/2010/02/11/these-are-our-users/

What if we can only optimize for one of the two audiences?

So people who create content have to simultaneously satisfy the bots and the real people. But what if they couldn’t satisfy both? If you were forced to choose between optimizing text for a search engine, and optimizing text for a human, what would you choose?

If it were up to Google and the other search engine providers, you wouldn’t have to choose. The ultimate goal of Google Search (and other searches) is to mimic the way that real humans would search for things if they had all of the computing resources that the search engine providers have. It’s only because of our imperfect application of artificial intelligence that there is any divergence between search engines and human searches.

But until AI gets a lot better than it is now, there will continue to be a divergence.

And if I ever had to choose, I’d write for humans rather than bots.

After all, a human is going to have to read the text at some point. Might as well make the human comfortable, since the bots aren’t making final binding decisions. (Yet.)