If I become known for anything in biometrics, I want to be known for my extremely frequent use of the words “investigative lead.”

Whether you are talking about DNA or facial recognition, these types of biometric evidence should not be the sole evidence used to arrest a person.

For an example of why DNA shouldn’t be your only evidence, see my recent post about Amanda Knox.

Facial recognition misuse in law enforcement

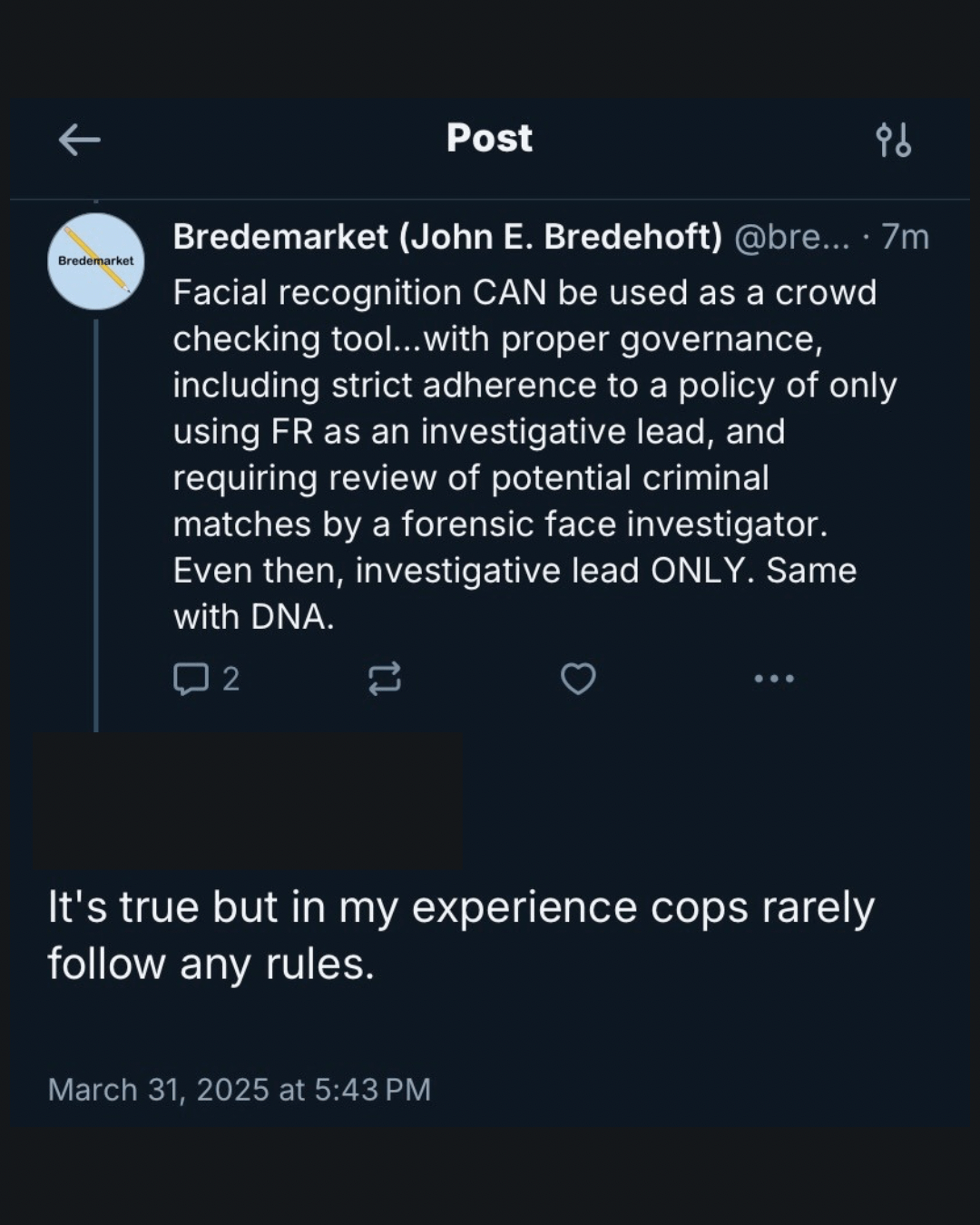

Regarding facial recognition, I wrote this in a social media conversation earlier today:

“Facial recognition CAN be used as a crowd checking tool…with proper governance, including strict adherence to a policy of only using FR as an investigative lead, and requiring review of potential criminal matches by a forensic face investigator. Even then, investigative lead ONLY. Same with DNA.”

I received this reply:

“It’s true but in my experience cops rarely follow any rules.”

Now I could have claimed that this view was exaggerated, but there are enough examples of cops who DON’T follow the rules to tarnish all of them.

Revisiting Robert Williams’ Detroit arrest

I’ve already addressed the sad story of Robert Williams, who was “wrongfully arrested based upon faulty facial recognition results.”

At the time, I did not explicitly share the circumstances behind Williams’ arrest:

“The complaint alleges that the surveillance footage is poorly lit, the shoplifter never looks directly into the camera and still a Detroit Police Department detective ran a grainy photo made from the footage through the facial recognition technology.”

There’s so much that isn’t said here, such as whether a forensic face examiner reached a definitive conclusion, or whether the detective just took the first candidate from the list and ran with it.

But I am willing to bet that there was no independent evidence placing Williams at the shop.

Why this matters

The thing that concerns me about all this? It just provides ammo to the people who want to ban facial recognition entirely.

What they don’t realize is that the alternative, manual witness (mis)identification, is far more inaccurate and far more racist.

But the controversy would pretty much go away if criminal investigators only used facial recognition and DNA as investigative leads.