Trevonix on LinkedIn:

“Within the space of a single week, nearly every major identity vendor announced or shipped a platform specifically designed to govern AI agents. The timing was not coordinated. It was convergent.”

More here.

Identity/biometrics/technology marketing and writing services

The last time I delved into lattices, it was in connection with the NIST FIPS 204 Module-Lattice-Based Digital Signature Standard. To understand why the standard is lattice-based, I turned to NordVPN:

“A lattice is a hierarchical structure that consists of levels, each representing a set of access rights. The levels are ordered based on the level of access they grant, from more restrictive to more permissive.”

In essence, the lattice structure allows more elaborate access rights.
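To make the "levels of access" idea concrete, here is a minimal sketch of a lattice-style access check. It assumes the classic textbook form in which each label pairs an ordered level with a set of compartments; the names `Level`, `Label`, `dominates`, and `join`, and the sample labels, are hypothetical illustrations and do not come from NordVPN or the article.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical levels, ordered from more restrictive to more permissive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2


@dataclass(frozen=True)
class Label:
    """A point in the lattice: an ordered level plus a set of compartments."""
    level: Level
    compartments: frozenset = frozenset()

    def dominates(self, other: "Label") -> bool:
        # Access is granted only if the level is at least as high AND
        # every compartment of the resource is held by the subject.
        return self.level >= other.level and self.compartments >= other.compartments

    def join(self, other: "Label") -> "Label":
        # Least upper bound: the lowest label that dominates both inputs.
        return Label(max(self.level, other.level),
                     self.compartments | other.compartments)


# Hypothetical AI agent and resources.
agent = Label(Level.INTERNAL, frozenset({"billing"}))
invoice = Label(Level.INTERNAL, frozenset({"billing"}))
payroll = Label(Level.CONFIDENTIAL, frozenset({"hr"}))

print(agent.dominates(invoice))  # True
print(agent.dominates(payroll))  # False
```

The compartments are what make this a lattice rather than a simple ladder: two labels can be unrelated, with neither dominating the other, yet `join` still gives the smallest label that covers both.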

This article (“Lattice-Based Identity and Access Management for AI Agents”) discusses lattices more. Well, not explicitly; the word “lattice” only appears in the title. But here is the article’s main point:

“We are finally moving away from those clunky, “if-this-then-that” systems. The shift to deep learning means agents can actually reason through a mess instead of just crashing when a customer uses a slang word or a shipping invoice is slightly blurry.”

It then says:

“Deep learning changes this because it uses neural networks to understand intent, not just keywords.”

Hmm…intent? Sounds a little somewhat you why…or maybe it’s just me.

But it appears that we sometimes don’t care about the intent of AI agents.

“If you gave a new employee the keys to your entire office and every filing cabinet on day one, you’d be sweating, right? Yet, that is exactly what many companies do with ai agents by just slapping an api key on them and hoping for the best.”

This is not recommended. See my prior post on attribute-based access control, which led me to focus more on non-person entities (non-human identities).

As should we all.

Just your friendly reminder that you have to vet the identity of a bot just like you do a human.

But how? In 2024 I speculated about the application of factors of authentication to non-human identities.

As I plan to note on Monday, you also have to look at intent.

Over a year ago I shared this:

The mood at the time was that the world was changing and generative AI bots and non-person entities could replace people.

Yes, I am familiar with the party line that AI wouldn’t replace anyone, but would empower everyone to do their jobs more effectively.

The layoff trackers told a different story.

As did the AI gurus who proclaimed that many jobs would soon be obsolete.

Strangely enough, “AI guru” was not one of the jobs that was going away. Which is odd. It seems to me that giving inspirational talks would be the perfect job for a non-person entity.

But many people agreed that entry-level jobs were ripe for rightsizing, meaning that those at the beginnings of their careers would have a much harder time finding work.

“Hardware giant IBM plans to triple entry-level hiring in the U.S. in 2026, according to reporting from Bloomberg. Nickle LaMoreaux, IBM’s chief human resource officer, announced the initiative….’And yes, it’s for all these jobs that we’re being told AI can do,’ LaMoreaux said.”

Because IBM has separated what AI can do from what it can’t do. IBM’s new positions are “less focused on areas AI can actually automate — like coding — and more focused on people-forward areas like engaging with customers.”

Guess what? Bots are not engaging. Well, maybe they’re more engaging than AI gurus…

But I will go one step further and claim that human product marketers and content writers are more engaging than bot product marketers and content writers.

Believe me, I’ve tested this. Bredebot can fake 30 years of experience, but it’s not genuine.

If you want to engage with your prospects, don’t assign the job to a bot. That’s human work.

I can’t say WHY I’m looking at bash script vulnerabilities, but they’ve been around since…well, this Kaspersky article is based upon CVE-2014-6271.

“The “bash bug,” also known as the Shellshock vulnerability, poses a serious threat to all users. The threat exploits the Bash system software common in Linux and Mac OS X systems in order to allow attackers to take potentially take control of electronic devices. An attacker can simply execute system level commands, with the same privileges as the affected services….

“But just imagine that you could not only pass this normal system information to the CGI script, but could also tell the script to execute system level commands. This would mean that – without having any credentials to the webserver – as soon as you access the CGI script it would read your environment variables; and if these environment variables contain the exploit string, the script would also execute the command that you have specified.”

An authorization nightmare as a hostile non-person entity runs amok.
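For the curious, the "exploit string" in CVE-2014-6271 is recognizable: vulnerable Bash versions treated an environment variable value starting with `() {` as a function definition and then ran whatever followed it. The following is a minimal defensive sketch, assuming request headers arrive as a plain dictionary; the function names and sample headers are hypothetical, and the real fix is to patch Bash rather than filter input.

```python
# Vulnerable Bash versions parsed values with this prefix as function definitions.
SHELLSHOCK_PREFIX = "() {"


def looks_like_shellshock(value: str) -> bool:
    """Return True if a header or environment value starts like a Bash function definition."""
    # lstrip() is a conservative assumption: flag the pattern even after leading whitespace.
    return value.lstrip().startswith(SHELLSHOCK_PREFIX)


def suspicious_headers(headers: dict[str, str]) -> list[str]:
    """Return the names of headers whose values match the Shellshock pattern."""
    return [name for name, value in headers.items() if looks_like_shellshock(value)]


# Hypothetical request: CGI would copy these headers into environment variables.
request_headers = {
    "User-Agent": "() { :; }; echo vulnerable",
    "Accept": "text/html",
}

print(suspicious_headers(request_headers))  # ['User-Agent']
```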

And it’s still a threat, as two recent CVEs attest…and that’s all I’ll say.

Sadly the question “why would a robot fish?” was shared in a private Facebook group, so I cannot share the entire question with you. But I can share my response.

“Some humans don’t fish for food, but for relaxation. But if robots need downtime, it doesn’t have to be at a stream with a pole.”

After thinking, I composed the prompt for the Google Gemini picture that illustrates this post.

“Create a realistic picture of a robot by a stream in the woods, fishing. The eyes and other parts of the robot’s head indicate that its internal controls are in maintenance mode, or that the robot is ‘relaxing.’”

My own content creation process with Bredemarket includes a “sleep on it” step which lets my brain reset before taking a fresh look at the content.

The generative AI equivalent is to take the output from the initial prompt, start a new independent chat, and write a second prompt to re-evaluate the output of the first prompt.

Which I guess would be “fishing.”

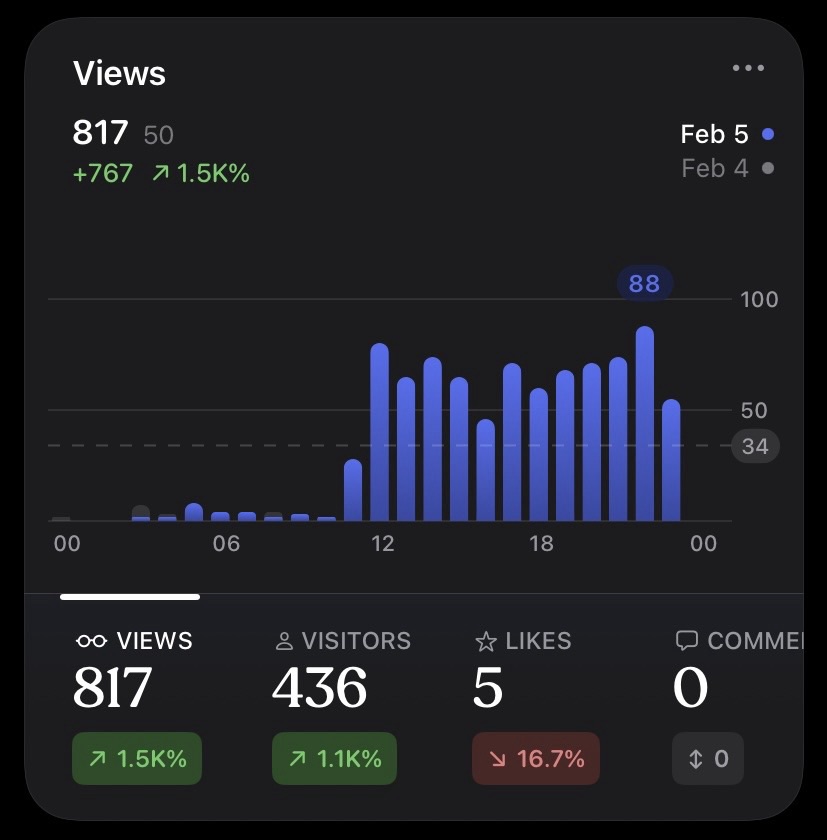

Update to my prior post: Google Analytics shows lower numbers for February 5.

Why?

Google Gemini suggests bots may be to blame.

The internet is full of “bots” (automated scripts from search engines or malicious actors).

Google Analytics has an industry-leading database of known bots and filters them out very aggressively to give you “human” data.

Jetpack also filters bots, but its list is different. Jetpack often catches fewer bots than Google, which usually results in Jetpack showing higher traffic numbers than GA.

Still unanswered: why did the bots swarm on that particular day?

Looks like disregarding the traffic is the correct choice.

If I had been alive in 1954, I would have understood why I needed a movie to figure this “dialing” thing out.

The movie from “the telephone company” emphasizes that you MUST bring your finger all the way to the finger stop when dialing the two letters and five numbers to talk to another person on the phone.

Here’s the movie.

Was this truly an improvement over the old system, in which you simply spoke the number to your friendly operator?

Probably not…but as phones became more useful, the old system would eventually have run short of operators. In 1954 there were already 51 million phones in the United States; what if that number doubled?

And yes, that number did double…in 1967.

And some of those 100 million users dialed phone numbers WITHOUT worrying about the finger stop, because touch-tone phones were introduced in 1963, supported by a new underlying technology: dual-tone multi-frequency (DTMF) signaling.
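If you wonder what "dual-tone" means, each key sends two tones at once: one for its keypad row and one for its column. Here is a minimal sketch of that lookup using the standard DTMF frequencies; the function name `dtmf_pair` is my own, and the fourth column (A through D) appears on the standard grid but not on ordinary phones.

```python
# Standard DTMF frequencies in hertz: one per keypad row, one per keypad column.
ROW_HZ = (697, 770, 852, 941)
COL_HZ = (1209, 1336, 1477, 1633)
KEYPAD = ("123A", "456B", "789C", "*0#D")


def dtmf_pair(key: str) -> tuple[int, int]:
    """Return the (row, column) tone pair in hertz for a single keypad key."""
    if len(key) != 1:
        raise ValueError("expected exactly one keypad character")
    for row_index, row in enumerate(KEYPAD):
        col_index = row.find(key)
        if col_index != -1:
            return ROW_HZ[row_index], COL_HZ[col_index]
    raise ValueError(f"not a DTMF key: {key!r}")


print(dtmf_pair("5"))  # (770, 1336)
print(dtmf_pair("0"))  # (941, 1336)
```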

And…well, a lot of other stuff happened.

In 2026 some of us don’t dial at all. We just say “Call Mom” to our non-human “operator” on our smartphones.

And many of the operators are out of a job.

Permiso has released its 2026 State of Identity Security Report, and the results aren’t pretty. The first data point of interest:

“95% [of surveyed organizations] say AI systems can create or modify identities without human oversight”

Which is OK, provided that the organizations have the proper controls. But that brings us to the second data point:

“Only 46% have full visibility into all human, non-human, and AI identities”

This is…not good.

If your security software enforces a “no bots” policy, you’re only hurting yourself.

Yes, there are some bots you want to keep out.

“Scrapers” that obtain your proprietary data without your consent.

“Ad clickers” from your competitors that drain your budgets.

And, of course, non-human identities that fraudulently crack legitimate human and non-human accounts (ATO, or account takeover).

But there are some bots you want to welcome with open arms.

Such as the indexers, either web crawlers or AI search assistants, that ensure your company and its products are known to search engines and large language models. If you nobot these agents, your prospects may never hear about you.

And what about the buybots—those AI agents designed to make legitimate purchases?

Perhaps a human wants to buy a Beanie Baby, Bitcoin, or airline ticket, but only if the price dips below a certain point. It is physically impossible for a human to monitor prices 24 hours a day, 7 days a week, so the human empowers an AI agent to make the purchase.

Do you want to keep legitimate buyers from buying just because they’re non-human identities?

(Maybe…but that’s another topic. If you’re interested, see what Vish Nandlall said in November about Amazon blocking Perplexity agents.)

According to click fraud fighter Anura in October 2025, 51% of web traffic comes from bots, and 37% of total traffic comes from “bad bots.” Obviously you want to deny that 37%, but you want to allow the remaining 14% (51% minus 37%): the “good bots.”

Nobot policies hurt. If your verification, authentication, and authorization solutions are unable to allow good bots, your business will suffer.
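Here is a minimal sketch of what a policy other than "no bots" might look like. It assumes some upstream step has already classified the visitor; the category names, the `decide` function, and the "review" fallback are hypothetical and do not describe any vendor's product.

```python
# Hypothetical bot categories, taken from the examples in this post.
DENY = {"scraper", "ad_clicker", "account_takeover"}
ALLOW = {"indexer", "buybot"}


def decide(bot_kind: str) -> str:
    """Return 'deny', 'allow', or 'review' for an already-classified bot."""
    if bot_kind in DENY:
        return "deny"
    if bot_kind in ALLOW:
        return "allow"
    # Assumption: unknown bots get extra verification rather than a blanket block.
    return "review"


# The Anura figures: 51% of traffic is bots and 37% is bad bots.
good_bot_share = 51 - 37

print(decide("scraper"))  # deny
print(decide("buybot"))   # allow
print(good_bot_share)     # 14
```

A blanket nobot policy amounts to returning "deny" for everything, which turns away that 14% along with the 37%.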