Tag Archives: security

Insecurity

I didn’t write this. Google Gemini wrote this. (And created the image.)

“In essence, identity is the foundation upon which security is built. A strong, well-managed identity infrastructure is essential for protecting digital assets and preventing unauthorized access. By understanding the overlaps between identity and security, organizations can implement robust security measures that safeguard both their digital assets and the privacy of their users.”

So now take a moment and think about security WITHOUT identity.

And shudder.

Secure is a Verb

How can you anticipate the unexpected?

- Such as a plane that isn’t in the sky, but lodged in a skyscraper?

- Or a pressure cooker that isn’t inside in a kitchen, but outside in a backpack?

- Or an illness that suddenly appears when no such illness previously existed?

- Or something that mimics a bodily illness, such as a computer virus or denial of service attack?

To anticipate the unexpected, you need to plan beforehand, assess during, and quickly correct afterwards.

What is on tomorrow’s calendar? And why are you pushing it out to next year?

Treat “secure” as a verb, not an adjective. A critically important verb.

The Single Solution Microsoft E5 License vs. Best-in-class Individual Solutions

The phrase of the day is “Microsoft E5 License.”

Identity Jedi used it in the 82nd edition of his newsletter.

The biggest threat to every single vendor in the identity space right now are the following words: Microsoft E5 License.

If you read that and shuddered, I’m sorry.

The argument for a single solution

Sounds scary. But isn’t Microsoft here to help? Threatscape makes the case.

The cohesive suite of security and productivity solutions provided by an E5 licence can significantly streamline your technological landscape, doing away with a number of on-premises and SaaS tools.

While many organisations opt for the lower-cost E3 licence, they may find this soon requires a supplementary selection of single-solution tools from alternate vendors to patch gaps in its capabilities.

Too many solutions means confusion, an often-disjointed workflow, potential overlap and overspend, and crucially, increased security risk.

By consolidating your collaboration, productivity, automation, and security solutions into a single trusted vendor platform, IT management becomes simplified, redundant solutions can be axed, and ROI can be better measured.

The Microsoft E5 Security Components

So you get everything from a single source with no finger pointing. What could go wrong?

Plenty, according to those who still think of Microsoft as an evil empire.

Let’s return to the Identity Jedi.

Microsoft is making a compelling case to businesses to consolidate into the Microsoft umbrella of products. The ease of use, and financial motives just make too much sense. Now do those customers get a great IAM experience with that? Meh…kinda. Entra SSO is solid product, Active Directory/EntraID is solid, MIM…well….we don’t talk about MIM.

Microsoft Identity Manager

Well, I will talk about MIM, or Microsoft Identity Manager.

Actually, we’re talking about Microsoft Identity Manager 2016.

Microsoft Identity Manager (MIM) 2016 builds on the identity and access management capabilities of Forefront Identity Manager (FIM) 2010 and predecessor technologies. MIM provides integration with heterogeneous platforms across the datacenter, including on-premises HR systems, directories, and databases.

MIM augments Microsoft Entra cloud-hosted services by enabling the organization to have the right users in Active Directory for on-premises apps. Microsoft Entra Connect can then make available in Microsoft Entra ID for Microsoft 365 and cloud-hosted apps

Is it any good? Sources say that, from a quantitative perspective, Gartner Peer Insights ranks several products higher than MIM’s 4.3 rating, including:

- Okta Advanced Server Access (4.4)

- Ivanti Security Controls (4.5)

- One Identity Active Roles (4.7)

- Imprivata’s SecureLink Customer Connect (4.8)

- Bravura Safe (5.0, 1 rating)

The argument against a single solution

But what of the argument that it’s better to get everything from one vendor? Other companies will tout their best-in-class products. While you’ll end up with a possibly disjointed solution, the work will get done more accurately.

In the end, it’s up to you. Do you want a single solution that is “good enough” and is already pre-made, or do you want to take the best solution from the best-in-class vendors and roll your own?

Authenticator Assurance Levels (AALs) and Digital Identity

(Part of the biometric product marketing expert series)

Back in December 2020, I dove into identity assurance levels (IALs) and digital identity, subsequently specifying the difference between identity assurance levels 2 and 3. These IALs are defined in section 4 of NIST Special Publication 800-63A, Digital Identity Guidelines, Enrollment and Identity Proofing Requirements.

It’s past time for me to move ahead to authenticator assurance levels (AALs).

Where are authenticator assurance levels defined?

Authenticator assurance levels are defined in section 4 of NIST Special Publication 800-63B, Digital Identity Guidelines, Authentication and Lifecycle Management. As with IALs, the AALs progress to higher levels of assurance.

- AAL1 (some confidence). AAL1, in the words of NIST, “provides some assurance.” Single-factor authentication is OK, but multi-factor authentication can be used also. A wide range of authenticators, including memorized secrets such as passwords, satisfy the requirements of AAL1. In short, AAL1 isn’t exactly a “nothingburger” as I characterized IAL1, but AAL1 doesn’t provide a ton of assurance.

- AAL2 (high confidence). AAL2 increases the assurance by requiring “two distinct authentication factors,” not just one. There are specific requirements regarding the authentication factors you can use. And the security must conform to the “moderate” security level, such as the moderate security level in FedRAMP. So AAL2 is satisfactory for a lot of organizations…but not all of them.

- AAL3 (very high confidence). AAL3 is the highest authenticator assurance level. It “is based on proof of possession of a key through a cryptographic protocol.” Of course, two distinct authentication factors are required, including “a hardware-based authenticator and an authenticator that provides verifier impersonation resistance — the same device MAY fulfill both these requirements.”

This is of course a very high overview, and there are a lot of…um…minutiae that go into each of these definitions. If you’re interested in that further detail, please read section 4 of NIST Special Publication 800-63B for yourself.
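As a rough illustration of the three levels summarized above, here is a minimal Python sketch. It encodes only the simplified rules from this post (number of distinct factors, a hardware-based authenticator, verifier impersonation resistance) and ignores the many other requirements in SP 800-63B. The `Authenticator` class, its field names, and the `highest_aal` function are hypothetical names made up for this example, not anything NIST defines.

```python
from dataclasses import dataclass

# Factor categories: something you know, something you have, something you are.
KNOW, HAVE, ARE = "know", "have", "are"


@dataclass(frozen=True)
class Authenticator:
    """Hypothetical, simplified description of one authenticator."""
    name: str
    factors: frozenset                     # factor categories it proves
    hardware_based: bool = False
    impersonation_resistant: bool = False  # verifier impersonation resistance


def highest_aal(authenticators):
    """Return the highest AAL (1-3) a combination could reach under the
    simplified rules in this post, or 0 if no factor was presented."""
    distinct = set()
    for a in authenticators:
        distinct |= a.factors
    if not distinct:
        return 0
    two_factors = len(distinct) >= 2
    hardware = any(a.hardware_based for a in authenticators)
    resistant = any(a.impersonation_resistant for a in authenticators)
    # AAL3: two distinct factors plus hardware and impersonation resistance
    # (the same device may supply both properties).
    if two_factors and hardware and resistant:
        return 3
    # AAL2: two distinct factors.
    if two_factors:
        return 2
    # AAL1: a single factor is enough.
    return 1


password = Authenticator("password", frozenset({KNOW}))
otp_app = Authenticator("one-time code from an app", frozenset({HAVE}))
security_key = Authenticator(
    "hardware security key with PIN",
    frozenset({HAVE, KNOW}),
    hardware_based=True,
    impersonation_resistant=True,
)

print(highest_aal([password]))           # 1
print(highest_aal([password, otp_app]))  # 2
print(highest_aal([security_key]))       # 3
```

The sketch answers "how high could this combination go?" rather than "is this deployment compliant?", because compliance depends on the minutiae that the sketch leaves out.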

Which authenticator assurance level should you use?

NIST has provided a handy dandy AAL decision flowchart in section 6.2 of NIST Special Publication 800-63-3, similar to the IAL decision flowchart in section 6.1 that I reproduced earlier. If you go through the flowchart, you can decide whether you need AAL1, AAL2, or the very high AAL3.

One of the key questions is the question flagged as 2, “Are you making personal data accessible?” The answer to this question in the flowchart moves you between AAL2 (if personal data is made accessible) and AAL1 (if it isn’t).

So what?

Do the different authenticator assurance levels provide any true benefits, or are they just items in a government agency’s technical check-off list?

Perhaps the better question to ask is this: what happens if the WRONG person obtains access to the data?

- Could the fraudster cause financial loss to a government agency?

- Threaten personal safety?

- Commit civil or criminal violations?

- Or, most frightening to agency heads who could be fired at any time, could the fraudster damage an agency’s reputation?

If some or all of these are true, then a high authenticator assurance level is VERY beneficial.
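To make the questions above concrete, here is a minimal Python sketch of a heavily simplified selection rule. It is not the NIST flowchart itself: the impact ratings (`"none"`, `"low"`, `"moderate"`, `"high"`), the rule that the worst rating drives the result, and the `choose_aal` function are all assumptions made for illustration. The only step taken directly from this post is the personal data question, which moves the answer from AAL1 to AAL2.

```python
# Harm categories taken from the questions in this post.
HARMS = (
    "financial_loss",
    "personal_safety",
    "civil_or_criminal_violations",
    "agency_reputation",
)

# Assumed ordering of impact ratings (hypothetical, for illustration only).
SEVERITY = {"none": 0, "low": 1, "moderate": 2, "high": 3}


def choose_aal(impacts, personal_data_accessible):
    """Pick an AAL from per-harm impact ratings.

    impacts: dict mapping a harm category to "none", "low", "moderate",
             or "high". Missing categories count as "none".
    personal_data_accessible: answer to "Are you making personal data
             accessible?"
    """
    unknown = set(impacts) - set(HARMS)
    if unknown:
        raise ValueError(f"unknown harm categories: {sorted(unknown)}")
    worst = max(SEVERITY[impacts.get(h, "none")] for h in HARMS)
    if worst >= SEVERITY["high"]:
        return "AAL3"
    if worst >= SEVERITY["moderate"]:
        return "AAL2"
    # Low or no expected harm: the personal data question decides.
    return "AAL2" if personal_data_accessible else "AAL1"


print(choose_aal({}, personal_data_accessible=False))
print(choose_aal({"agency_reputation": "low"}, personal_data_accessible=True))
print(choose_aal({"personal_safety": "high"}, personal_data_accessible=False))
```

For a real system, walk through the actual flowchart in section 6.2 rather than relying on a shortcut like this one.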

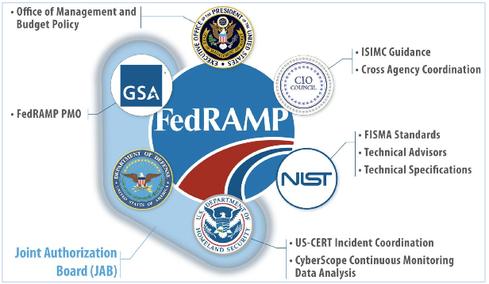

A Few Thoughts on FedRAMP

The 438 U.S. federal agencies (as of today) probably have over 439 different security requirements. When you add state and local agencies to the list, security compliance becomes a mind-numbing exercise.

- For example, the U.S. Federal Bureau of Investigation has its Criminal Justice Information Services (CJIS) Security Policy (version 5.9). This not only applies to the FBI, but to any government agency or private organization that interfaces with the relevant FBI systems.

- Similarly, the U.S. Department of Health and Human Services has its Health Insurance Portability and Accountability Act (HIPAA) Security Rule. Again, this also applies to private organizations.

But I don’t care about those. (Actually I do, but for the next few minutes I don’t.) Instead, let’s talk FedRAMP.

Why do we have FedRAMP?

The two standards that I mentioned above apply to particular government agencies. Sometimes, however, the federal government attempts to create a standard that applies to ALL federal agencies (and other relevant bodies). You can say that Login.gov is an example of this, although a certain company (I won’t name the company, but it likes to ID me) repeatedly emphasizes that Login.gov is not IAL2 compliant.

But forget about that. Let’s concentrate on FedRAMP.

The Federal Risk and Authorization Management Program (FedRAMP®) was established in 2011 to provide a cost-effective, risk-based approach for the adoption and use of cloud services by the federal government. FedRAMP empowers agencies to use modern cloud technologies, with an emphasis on security and protection of federal information. In December 2022, the FedRAMP Authorization Act was signed as part of the FY23 National Defense Authorization Act (NDAA). The Act codifies the FedRAMP program as the authoritative standardized approach to security assessment and authorization for cloud computing products and services that process unclassified federal information.

From https://www.fedramp.gov/program-basics/.

Note the critical word “unclassified.” So FedRAMP doesn’t cover EVERYTHING. But it does cover enough to allow federal agencies to move away from huge on-premises server rooms and enjoy the same SaaS advantages that private entities enjoy.

Today, government agencies can consult a FedRAMP Marketplace that lists FedRAMP offerings the agencies can use for their cloud implementations.

A FedRAMP authorized product example

When I helped MorphoTrak propose its first cloud-based automated biometric identification solutions, our first customers were state and local agencies. To propose those first solutions, MorphoTrak partnered with Microsoft and used its Azure Government cloud. While those first implementations were not federal and did not require FedRAMP authorization, MorphoTrak’s successor IDEMIA clearly has an interest in providing federal non-classified cloud solutions.

When IDEMIA proposes federal solutions that require cloud storage, it can choose to use Microsoft Azure Government, which is now FedRAMP authorized.

It turns out that a number of other FedRAMP-authorized products are partially dependent upon Microsoft Azure Government’s FedRAMP authorization, so continued maintenance of this authorization is essential to Microsoft, a number of other vendors, and all the agencies that require secure cloud solutions.

They can only hope that the GSA Inspector General doesn’t find fault with THEM.

Is FedRAMP compliance worth it?

But assuming that doesn’t happen, is it worthwhile for vendors to pursue FedRAMP compliance?

If you are a company with a cloud service, there are likely quite a few questions you are asking yourself about your pursuits in the Federal market. When will the upward trajectory of cloud adoption begin? What agency will be the next to migrate to the cloud? What technologies will be migrated? As you move forward with your business development strategy you will also question whether FedRAMP compliance is something you should pursue?

The answer to the last question is simple: Yes. If you want the Federal Government to purchase your cloud service offering you will, sooner or later, have to successfully navigate the FedRAMP process.

From https://www.mindpointgroup.com/blog/fedramp-compliance-is-it-worth-it.

And a lot of companies are doing just that. But with fewer than 400 FedRAMP authorized services, there’s obviously room for growth.

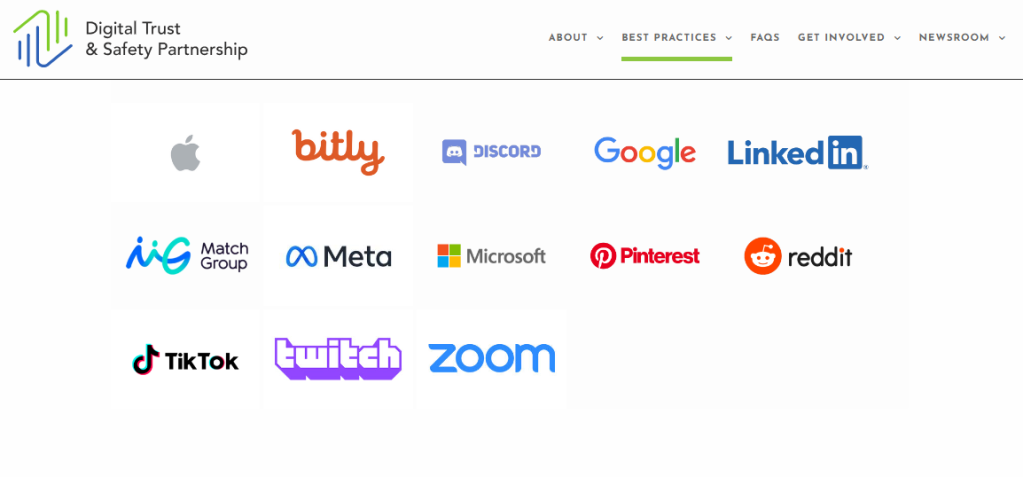

Ofcom and the Digital Trust & Safety Partnership

The Digital Trust & Safety Partnership (DTSP) consists of “leading technology companies,” including Apple, Google, Meta (parent of Facebook, Instagram, and WhatsApp), Microsoft (and its LinkedIn subsidiary), TikTok, and others.

The DTSP obviously has its views on Ofcom’s enforcement of the UK Online Safety Act.

Which, as Biometric Update notes, boils down to “the industry can regulate itself.”

Here’s how the DTSP stated this in its submission to Ofcom:

DTSP appreciates and shares Ofcom’s view that there is no one-size-fits-all approach to trust and safety and to protecting people online. We agree that size is not the only factor that should be considered, and our assessment methodology, the Safe Framework, uses a tailoring framework that combines objective measures of organizational size and scale for the product or service in scope of assessment, as well as risk factors.

From https://dtspartnership.org/press-releases/dtsp-submission-to-the-uk-ofcom-consultation-on-illegal-harms-online/.

We’ll get to the “Safe Framework” later. DTSP continues:

Overly prescriptive codes may have unintended effects: Although there is significant overlap between the content of the DTSP Best Practices Framework and the proposed Illegal Content Codes of Practice, the level of prescription in the codes, their status as a safe harbor, and the burden of documenting alternative approaches will discourage services from using other measures that might be more effective. Our framework allows companies to use whatever combination of practices most effectively fulfills their overarching commitments to product development, governance, enforcement, improvement, and transparency. This helps ensure that our practices can evolve in the face of new risks and new technologies.

From https://dtspartnership.org/press-releases/dtsp-submission-to-the-uk-ofcom-consultation-on-illegal-harms-online/.

But remember that the UK’s neighbors in the EU recently prescribed that USB-C cables are the way to go. This not only forced DTSP member Apple to abandon the Lightning cable worldwide, but it affects Google and others because there will be no efforts to come up with better cables. Who wants to fight the bureaucratic battle with Brussels? Or alternatively we will have the advanced “world” versions of cables and the deprecated “EU” standards-compliant cables.

So forget Ofcom’s so-called overbearing approach and just adopt the Safe Framework. Big tech will take care of everything, including all those age assurance issues.

DTSP’s September 2023 paper on age assurance documents a “not overly prescriptive” approach, with a lot of “it depends” discussion.

Incorporating each characteristic comes with trade-offs, and there is no one-size-fits-all solution. Highly accurate age assurance methods may depend on collection of new personal data such as facial imagery or government-issued ID. Some methods that may be economical may have the consequence of creating inequities among the user base. And each service and even feature may present a different risk profile for younger users; for example, features that are designed to facilitate users meeting in real life pose a very different set of risks than services that provide access to different types of content….

Instead of a single approach, we acknowledge that appropriate age assurance will vary among services, based on an assessment of the risks and benefits of a given context. A single service may also use different approaches for different aspects or features of the service, taking a multi-layered approach.

From https://dtspartnership.org/wp-content/uploads/2023/09/DTSP_Age-Assurance-Best-Practices.pdf.

So will Ofcom heed the DTSP’s advice and say “Never mind. You figure it out”?

Um, maybe not.

Time for the FIRST Iteration of Your Firm’s UK Online Safety Act Story

A couple of weeks ago, I asked this question:

Is your firm affected by the UK Online Safety Act, and the future implementation of the Act by Ofcom?

From https://bredemarket.com/2023/10/30/uk-online-safety-act-story/

Why did I mention the “future implementation” of the UK Online Safety Act? Because the passage of the UK Online Safety Act is just the FIRST step in a long process. Ofcom still has to figure out how to implement the Act.

Ofcom started to work on this on November 9, but it’s going to take many months to finalize—I mean finalise things. This is the UK Online Safety Act, after all.

This is the first of four major consultations that Ofcom, as regulator of the new Online Safety Act, will publish as part of our work to establish the new regulations over the next 18 months.

It focuses on our proposals for how internet services that enable the sharing of user-generated content (‘user-to-user services’) and search services should approach their new duties relating to illegal content.

From https://www.ofcom.org.uk/consultations-and-statements/category-1/protecting-people-from-illegal-content-online

On November 9 Ofcom published a slew of summary and detailed documents. Here’s a brief excerpt from the overview.

Mae’r ddogfen hon yn rhoi crynodeb lefel uchel o bob pennod o’n hymgynghoriad ar niwed anghyfreithlon i helpu rhanddeiliaid i ddarllen a defnyddio ein dogfen ymgynghori. Mae manylion llawn ein cynigion a’r sail resymegol sylfaenol, yn ogystal â chwestiynau ymgynghori manwl, wedi’u nodi yn y ddogfen lawn. Dyma’r cyntaf o nifer o ymgyngoriadau y byddwn yn eu cyhoeddi o dan y Ddeddf Diogelwch Ar-lein. Mae ein strategaeth a’n map rheoleiddio llawn ar gael ar ein gwefan.

From https://www.ofcom.org.uk/__data/assets/pdf_file/0021/271416/CYM-illegal-harms-consultation-chapter-summaries.pdf

Oops, I seem to have quoted from the Welsh version. Maybe you’ll have better luck reading the English version.

This document sets out a high-level summary of each chapter of our illegal harms consultation to help stakeholders navigate and engage with our consultation document. The full detail of our proposals and the underlying rationale, as well as detailed consultation questions, are set out in the full document. This is the first of several consultations we will be publishing under the Online Safety Act. Our full regulatory roadmap and strategy is available on our website.

From https://www.ofcom.org.uk/__data/assets/pdf_file/0030/270948/illegal-harms-consultation-chapter-summaries.pdf

If you want to peruse everything, go to https://www.ofcom.org.uk/consultations-and-statements/category-1/protecting-people-from-illegal-content-online.

And if you need help telling your firm’s UK Online Safety Act story, Bredemarket can help. (Unless the final content needs to be in Welsh.)

What Is Your Firm’s UK Online Safety Act Story?

It’s time to revisit my August post entitled “Can There Be Too Much Encryption and Age Verification Regulation?” because the United Kingdom’s Online Safety Bill is now the Online Safety ACT.

Having passed, eventually, through the UK’s two houses of Parliament, the bill received royal assent (October 26)….

[A]dded in (to the Act) is a highly divisive requirement for messaging platforms to scan users’ messages for illegal material, such as child sexual abuse material, which tech companies and privacy campaigners say is an unwarranted attack on encryption.

From Wired.

This not only opens up issues regarding encryption and privacy, but also specific identity technologies such as age verification and age estimation.

This post looks at three types of firms that are affected by the UK Online Safety Act, the stories they are telling, and the stories they may need to tell in the future. What is YOUR firm’s Online Safety Act-related story?

What three types of firms are affected by the UK Online Safety Act?

As of now I have been unable to locate a full version of the final final Act, but presumably the provisions from this July 2023 version (PDF) have only undergone minor tweaks.

Among other things, this version discusses “User identity verification” in 65, “Category 1 service” in 96(10)(a), “United Kingdom user” in 228(1), and a multitude of other terms that affect how companies will conduct business under the Act.

I am focusing on three different types of companies:

- Technology services (such as Yoti) that provide identity verification, including but not limited to age verification and age estimation.

- User-to-user services (such as WhatsApp) that provide encrypted messages.

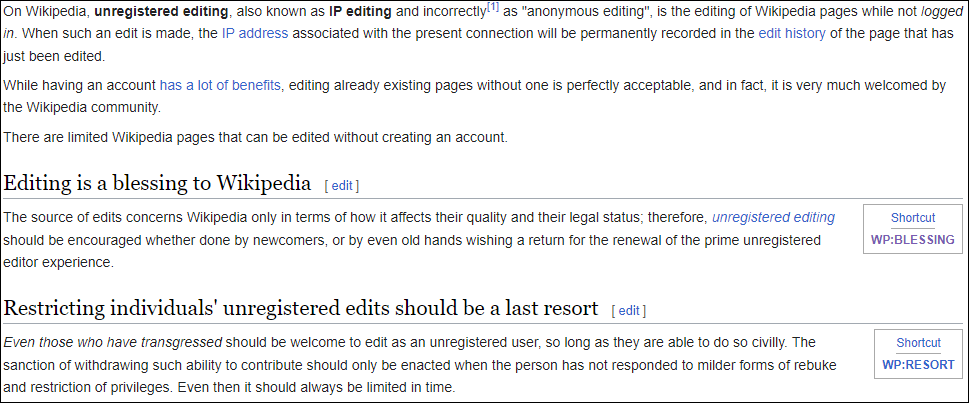

- User-to-user services (such as Wikipedia) that allow users (including United Kingdom users) to contribute content.

What types of stories will these firms have to tell, now that the Act is law?

Stories from identity verification services

For ALL services, the story will vary as Ofcom decides how to implement the Act, but we are already seeing the stories from identity verification services. Here is what Yoti stated after the Act became law:

We have a range of age assurance solutions which allow platforms to know the age of users, without collecting vast amounts of personal information. These include:

- Age estimation: a user’s age is estimated from a live facial image. They do not need to use identity documents or share any personal information. As soon as their age is estimated, their image is deleted – protecting their privacy at all times. Facial age estimation is 99% accurate and works fairly across all skin tones and ages.

- Digital ID app: a free app which allows users to verify their age and identity using a government-issued identity document. Once verified, users can use the app to share specific information – they could just share their age or an ‘over 18’ proof of age.

From Yoti.

Stories from encrypted message services

Not surprisingly, message encryption services are telling a different story.

MailOnline has approached WhatsApp’s parent company Meta for comment now that the Bill has received Royal Assent, but the firm has so far refused to comment.

Will Cathcart, Meta’s head of WhatsApp, said earlier this year that the Online Safety Act was the most concerning piece of legislation being discussed in the western world….

[T]o comply with the new law, the platform says it would be forced to weaken its security, which would not only undermine the privacy of WhatsApp messages in the UK but also for every user worldwide.

‘Ninety-eight per cent of our users are outside the UK. They do not want us to lower the security of the product, and just as a straightforward matter, it would be an odd choice for us to choose to lower the security of the product in a way that would affect those 98 per cent of users,’ Mr Cathcart has previously said.

From Daily Mail.

Stories from services with contributed content

And contributed content services are also telling their own story.

Companies, from Big Tech down to smaller platforms and messaging apps, will need to comply with a long list of new requirements, starting with age verification for their users. (Wikipedia, the eighth-most-visited website in the UK, has said it won’t be able to comply with the rule because it violates the Wikimedia Foundation’s principles on collecting data about its users.)

From Wired.

What is YOUR firm’s story?

All of these firms have shared their stories either before or after the Act became law, and those stories will change depending upon what Ofcom decides.

- Maybe they’ll thank Ofcom for its wise decisions.

- Or maybe they’ll say “bye bye, UK” in the same way that many businesses have said “bye bye, Illinois.” (“BIPA” is a four-letter word.)

But what about YOUR firm?

Is your firm affected by the UK Online Safety Act, and the future implementation of the Act by Ofcom?

Do you have a story that you need to tell to achieve your firm’s goals?

Do you need an extra, experienced hand to help out?

Learn how Bredemarket can create content that drives results for your firm.

Can There Be Too Much Encryption and Age Verification Regulation?

Approximately 2,700 years ago, the Greek poet Hesiod is recorded as saying “moderation is best in all things.” This applies to government regulations, including encryption and age verification regulations. As the United Kingdom’s House of Lords works through drafts of its Online Safety Bill, interested parties are seeking to influence the level of regulation.

The July 2023 draft of the Online Safety Bill

On July 25, 2023, Richard Allan of Regulate.Tech provided his assessment of the (then) latest draft of the Online Safety Bill that is going through the House of Lords.

In Allan’s assessment, he wondered whether the mandated encryption and age verification regulations would apply to all services, or just critical services.

Allan considered a number of services, but I’m just going to home in on two of them: WhatsApp and Wikipedia.

The Online Safety Bill and WhatsApp

WhatsApp is owned by a large American company called Meta, which causes two problems for regulators in the United Kingdom (and in Europe):

- Meta is a large company.

- Meta is an American company.

WhatsApp itself causes another problem for UK regulators:

- WhatsApp encrypts messages.

Because of these three truths, UK regulators are not necessarily inclined to play nice with WhatsApp, which may affect whether WhatsApp will be required to comply with the Online Safety Bill’s regulations.

Allan explains the issue:

One of the powers the Bill gives to OFCOM (the UK Office of Communications) is the ability to order services to deploy specific technologies to detect terrorist and child sexual exploitation and abuse content….

But there may be cases where a provider believes that the technology it is being ordered to deploy would break essential functionality of its service and so would prefer to leave the UK rather than accept compliance with the order as a condition of remaining….

If OFCOM does issue this kind of order then we should expect to see some encrypted services leave the UK market, potentially including very popular ones like WhatsApp and iMessage.

From https://www.regulate.tech/online-safety-bill-some-futures-25th-july-2023/

And this isn’t just speculation on Allan’s part. Will Cathcart has been complaining about the provisions of the draft bill for months, especially since it appears that WhatsApp encryption would need to be “dumbed down” for everybody to comply with regulations in the United Kingdom.

Speaking during a UK visit in which he will meet legislators to discuss the government’s flagship internet regulation, Will Cathcart, Meta’s head of WhatsApp, described the bill as the most concerning piece of legislation currently being discussed in the western world.

He said: “It’s a remarkable thing to think about. There isn’t a way to change it in just one part of the world. Some countries have chosen to block it: that’s the reality of shipping a secure product. We’ve recently been blocked in Iran, for example. But we’ve never seen a liberal democracy do that.

“The reality is, our users all around the world want security,” said Cathcart. “Ninety-eight per cent of our users are outside the UK. They do not want us to lower the security of the product, and just as a straightforward matter, it would be an odd choice for us to choose to lower the security of the product in a way that would affect those 98% of users.”

From https://www.theguardian.com/technology/2023/mar/09/whatsapp-end-to-end-encryption-online-safety-bill

In passing, the March Guardian article noted that WhatsApp requires UK users to be at least 16 years old. This doesn’t appear to be an issue for Meta, but could be an issue for another very popular online service.

The Online Safety Bill and Wikipedia

So how does the Online Safety Bill affect Wikipedia?

It depends on how the Online Safety Bill is implemented via the rulemaking process.

As in other countries, the true effects of legislation aren’t apparent until the government writes the rules that implement the legislation. It’s possible that the rulemaking will carve out an exemption allowing Wikipedia to NOT enforce age verification. Or it’s possible that Wikipedia will be mandated to enforce age verification for its writers.

Let’s return to Richard Allan.

If they do not (carve out exemptions) then there could be real challenges for the continued operation of some valuable services in the UK given what we know about the requirements in the Bill and the operating principles of services like Wikipedia.

For example, it would be entirely inconsistent with Wikipedia’s privacy principles to start collecting additional data about the age of their users and yet this is what will be expected from regulated services more generally.

From https://www.regulate.tech/online-safety-bill-some-futures-25th-july-2023/

Left unsaid is the same issue that affects encryption: age verification for Wikipedia may be required in the United Kingdom, but may not be required for other countries.

It’s no surprise that Jimmy Wales of Wikipedia has a number of problems with the Online Safety Bill. Here’s just one of them.

(Wales) used the example of Wikipedia, in which none of its 700 staff or contractors plays a role in content or in moderation.

Instead, the organisation relies on its global community to make democratic decisions on content moderation, and have contentious discussions in public.

By contrast, the “feudal” approach sees major platforms make decisions centrally, erratically, inconsistently, often using automation, and in secret.

By regulating all social media under the assumption that it’s all exactly like Facebook and Twitter, Wales said that authorities would impose rules on upstart competitors that force them into that same model.

From https://www.itpro.com/business-strategy/startups/370036/jimmy-wales-online-safety-bill-could-devastate-small-businesses

And the potential regulations that could be imposed on that “global community” would be anathema to Wikipedia.

Wikipedia will not comply with any age checks required under the Online Safety Bill, its foundation says.

Rebecca MacKinnon, of the Wikimedia Foundation, which supports the website, says it would “violate our commitment to collect minimal data about readers and contributors”.

From https://www.bbc.com/news/technology-65388255

Regulation vs. Privacy

One common thread between these two cases is that implementation of the regulations results in a privacy threat to the affected individuals.

- For WhatsApp users, the privacy threat is obvious. If WhatsApp is forced to fully or partially disable encryption, or is forced to use an encryption scheme that the UK Government could break, then the privacy of every message (including messages between people outside the UK) would be threatened.

- For Wikipedia users, anyone contributing to the site would need to undergo substantial identity verification so that the UK Government would know the ages of Wikipedia contributors.

This is yet another example of different government agencies working at cross purposes with each other, as the “catch the pornographers” bureaucrats battle with the “preserve privacy” advocates.

Meta, Wikipedia, and other firms would like the legislation to explicitly carve out exemptions for their firms and services. Opponents say that legislative carve outs aren’t necessary, because no one would ever want to regulate Wikipedia.

Yeah, and the U.S. Social Security Number isn’t an identification number either. (Not true.)