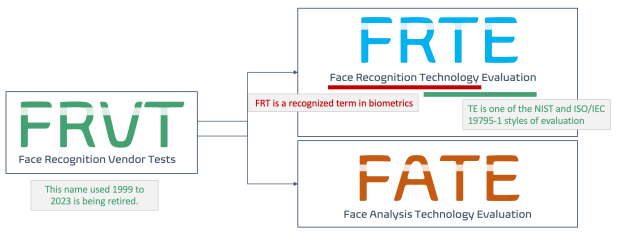

I’m guilty of acronym overuse. I just wrote a post that mentioned something called “FRTE,” and I belatedly realized that many of the people who read the post…and many of the people who need to read the post…have no idea what FRTE stands for, much less WHY it’s important. So let me explain.

But before I explain FRTE, I should explain NIST. It’s the National Institute of Standards and Technology, part of the U.S. Department of Commerce, and it promotes technology standards throughout the country and throughout the world.

Among the many, many, many things that NIST does, it looks at the use of biometrics for identification and classification of individuals, including faces. NIST’s face work is split into face recognition (the Face Recognition Technology Evaluation, or FRTE) and face analysis (the Face Analysis Technology Evaluation, or FATE). While the latter concerns classification of faces (whether the face is real or a presentation attack, the estimated age of the person), the former focuses on individualization.

But I’m not going to talk about FATE today. Let’s focus on FRTE.

Why FRTE?

There are hundreds upon hundreds of algorithms out there that purport to compare a face to another face, or to compare a face to many faces, and indicate the likelihood that the compared faces belong to the same person.
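For readers who want to see the difference between "a face to another face" (1:1) and "a face to many faces" (1:N), here is a toy sketch. It assumes each face has already been turned into a list of numbers (an "embedding") and that similarity is measured with cosine similarity. Both are illustrative assumptions, as are the function names and the 0.8 threshold; real algorithms are proprietary and far more involved.

```python
import math

def cosine_similarity(a, b):
    """Similarity between two embedding vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def verify(probe, reference, threshold=0.8):
    """1:1 comparison: does the probe match one claimed identity?
    The threshold is a hypothetical value chosen for illustration."""
    return cosine_similarity(probe, reference) >= threshold

def identify(probe, gallery, threshold=0.8):
    """1:N search: score the probe against every gallery entry and
    return the candidates at or above the threshold, best match first."""
    scored = [(name, cosine_similarity(probe, vec)) for name, vec in gallery.items()]
    candidates = [pair for pair in scored if pair[1] >= threshold]
    return sorted(candidates, key=lambda pair: pair[1], reverse=True)

# Made-up two-dimensional "embeddings" purely for demonstration.
gallery = {"alice": [1.0, 0.0], "bob": [0.0, 1.0]}
probe = [0.9, 0.1]

is_alice = verify(probe, gallery["alice"])
matches = identify(probe, gallery)
```

Note that the score is a similarity, not a true probability. Where a vendor sets the threshold determines the trade-off between false matches and false non-matches.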

And any algorithm provider can claim that its facial recognition algorithm provides 100.00% accuracy or 99.99% accuracy or whatever.

Or that it can search a trillion record database in 0.1 seconds or whatever.

Perhaps the provider even backs up this claim with published data in which the provider tested its algorithm with 1,000 searches against a 100,000 record database and the algorithm did not make a single error.

Are you impressed?

I’m not.

Anyone can score 100% on a self-test.
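Even taken at face value, 1,000 error-free searches don't prove as much as they seem to. Here's a back-of-the-envelope sketch, assuming every search is an independent trial with the same chance of error (a simplification that a real test may not satisfy). The function names are mine, not anything from NIST or a vendor.

```python
import math

def zero_error_upper_bound(trials, confidence=0.95):
    """Upper bound on the true error rate after `trials` error-free trials.
    Solves (1 - p) ** trials = 1 - confidence for p."""
    return 1.0 - (1.0 - confidence) ** (1.0 / trials)

def trials_needed(max_error_rate, confidence=0.95):
    """Error-free trials needed before the data supports an error rate
    no higher than `max_error_rate` at the given confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - max_error_rate))

bound = zero_error_upper_bound(1000)     # roughly 0.003, i.e. about 0.3%
needed = trials_needed(0.0001)           # trials to back a 99.99% accuracy claim

print(f"True error rate could still be as high as {bound:.2%}")
print(f"Error-free searches needed to support 99.99%: {needed:,}")
```

Under these assumptions, a perfect score on 1,000 searches is consistent with a true error rate of about 0.3%, so it can't back up a 99.99% claim. That would take tens of thousands of error-free searches.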

But what happens when you are given a test by someone else…closed book…with no answer key?

(And yes, I’m aware of the claims that these independent tests are flawed. So design a better one that more than one algorithm provider supports.)

If you’re looking to buy facial recognition technology, the second best way to evaluate the different facial recognition algorithms is to consult the NIST FRTE tests.

- These tests are continuous, with new algorithms usually added monthly.

- These tests are complex, measuring umpteen different databases and search types. One or more of these may match your particular use case.

- These tests are black box. The algorithm providers send their algorithms to NIST, and they are tested against all the other algorithms on identical setups.

Most importantly, the results of these tests are public, and you can view them yourself. The 1:1 testing is here, and the 1:N testing is here.

Oh, and the tests are listed by the algorithm provider, so if Omnigarde says they’ve been tested by NIST, you can look at the test results and find Omnigarde’s algorithm.

And if Vendor X says its algorithm tested well, but you can’t find Vendor X in the algorithm list, then you need to ask Vendor X which algorithm it’s using.

And if Vendor Y says it’s really accurate, but doesn’t state that the algorithm it uses was NIST tested…ask Vendor Y to prove its accuracy claims.

So that’s FRTE. And if your facial recognition vendor isn’t talking about FRTE…ask why.