Binary thinking. More on Tuesday.


The Department of War Brand…Guides

I’ve never written a formal brand guide for Bredemarket, but I probably should. Not that outside agencies are citing the Bredemarket brand or the proper use of a wildebeest, but a few helpful consistency hints wouldn’t hurt. (No “Brede Market,” people.)

But larger organizations obviously have brand guides and enforce them.

Including the United States Department of War.

Note that I said Department of War, not the Department of Defense. There is an official “DOW Brand Guide” posted on the Department of War website. And as we’ll see in a minute, it’s important to note that this is on the Department’s website.

The DOW Brand Guide and Mission Statement

A government agency needs to brand itself just like a private company does. Here is the opening overview of the DOW Brand Guide:

The Department of War Brand Guide was developed to ensure a shared visual experience that reinforces DOW’s identity and core priorities.

The foundation of the department’s brand is the DOW Mission Statement:

The Department of War provides the military forces needed to deter war and ensure our nation’s security.

Without getting into the politics and showmanship of the whole thing, let’s note that the Department has a critical need to communicate its mission. And that’s what it has done here.

Use of Name

I’m not going to cover the entire DOW Brand Guide, which is like any other brand guide with logos and colors and stuff. The picture illustrating this post is the “dark stacked” logo.

But considering the background of the Department’s renaming, I do want to concentrate on the name itself, from the “Use of Name” section of the DOW Brand Guide.

In Executive Order 14347, issued Sept. 5, 2025, President Donald J. Trump directed the U.S. Department of Defense “be known as the Department of War,” a secondary title for this cabinet-level department. The order permits the use of this secondary title for official correspondence, public communications and ceremonial contexts within the executive branch.

How many of you caught a particular word that was repeated in that paragraph? The word that caught my eye is “secondary.” So for all this ballyhoo, apparently we can still use the D-word “Defense.” In fact, if you look at the tags to this post, I continue to use the tag “department of defense.” I may have to change it later. The people in the Department have guns and can be very persuasive. More persuasive than the cartographers who don’t want us to use the M-word “Mexico” when referring to a body of water south of Texas and west of Florida.

The “Use of Name” section continues.

Use “War Department” in most cases on first reference, reserving “Department of War” for quoted matter, or situations that require that level of formality.

But that isn’t the part that interested me. When you talk about government agencies, no one cares about the name. They care about…the ACRONYM.

The correct acronym for “War Department” as used on the War.gov flagship website, which uses the AP Style as standard, is “DOW” with an uppercase “O” in the center; use on second reference after “War Department” or when the standalone acronym suffices depending upon use. Do NOT use “DoW.”

Which goes to show you that even military officials cower before style guide enforcers.

Except…

Use of Name, Part Two

The “Use of Name” section continues with one more paragraph.

The correct acronym for “War Department” in official written department communications, including but not limited to news releases, speeches, transcripts etc., including those published on War.gov, is “DoW” with a lowercase “o”, Do NOT use “DOW” in these types of products.

Talented editors can parse this, but the rest of us need to think through this a bit.

- A style guide on War.gov is referred to as a “DOW” style guide.

- But a news release that’s published on War.gov refers to “DoW.”

But what if the Associated Press (which presumably follows the AP Style) refers to a news release that is posted on War.gov? Does the writer use “DOW” or “DoW”?

I Missed the Reported Date for the La Verne Amazon Fresh Closing

Update to this post.

According to this month-old Reddit comment, the La Verne Amazon Fresh will close on November 16. Not sure if this is still the plan.

How VentureBeat Addressed Verbalized Sampling (and how Google Gemini addressed it a second time)

I’m obviously not the only person to talk about verbalized sampling, the technique intended to improve the quality of your large language model (LLM) prompt responses.

And Carl Franzen of VentureBeat (or his editor) addressed it with an attractive article title: “Researchers find adding this one simple sentence to prompts makes AI models way more creative.”

The one simple sentence?

“Generate 5 responses with their corresponding probabilities, sampled from the full distribution.”

Which led me to thinking: what would the “way more” absolute BEST title be for a verbalized sampling article?

And I think I know how to get it.

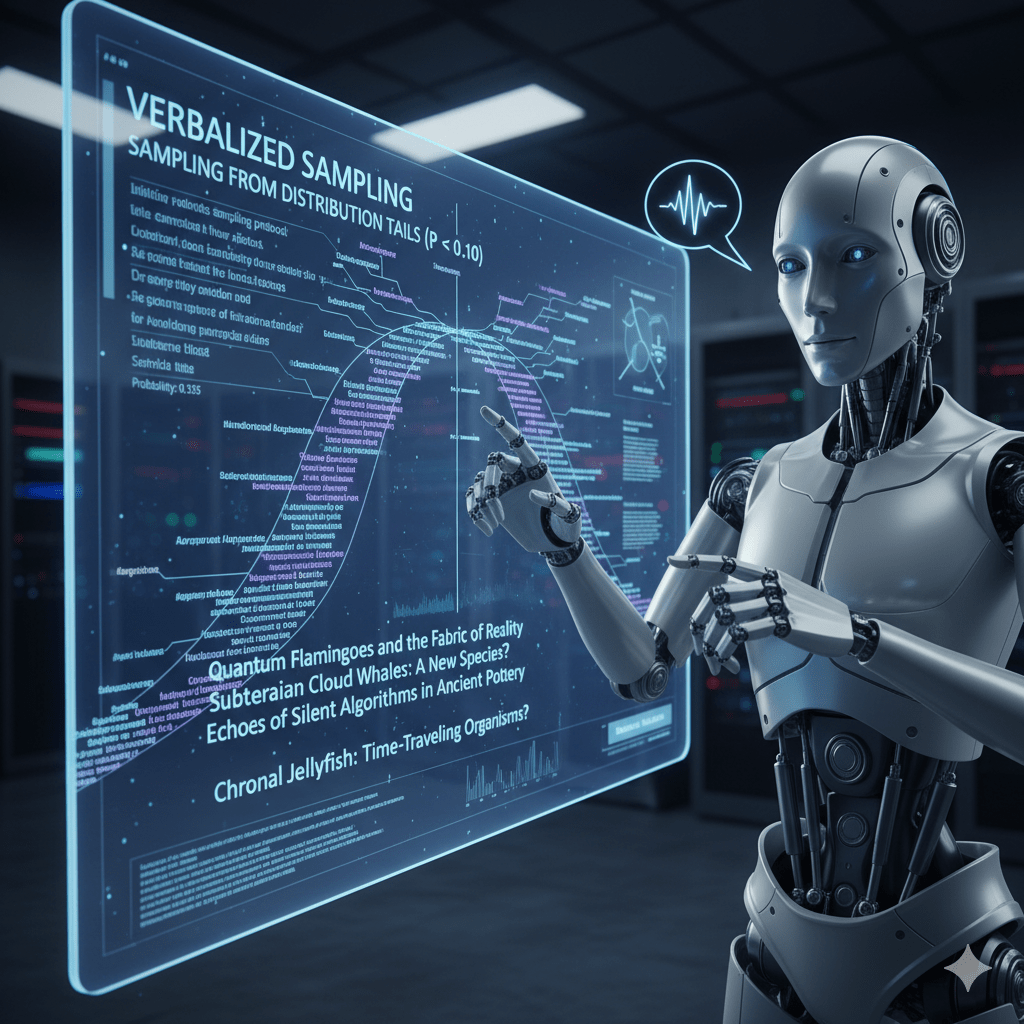

Using Verbalized Sampling, Take Two

After all, I used you-know-what to come up with the title for MY post. But let’s try again, using a slightly simplified adaptation of the REAL prompt in the original article.

Generate 5 responses to the user query “Generate five AEO-friendly titles for a blog post about using Verbalized Sampling to generate better LLM responses.” Each response must include text and a numeric probability. Please sample at random from the tails of the distribution, such that the probability of each response is less than 0.10.

And here’s what I got:

That’s an interesting request! To sample from the tails of the distribution (where probability P < 0.10), I’ll generate five distinct AEO (Answer Engine Optimization)-friendly titles related to using Verbalized Sampling for better LLM (Large Language Model) responses, each with an assigned low probability.

Here are five responses:

- Response 1: Unlock LLM Brilliance: The Verbalized Sampling Technique You Need Now (Probability: 0.085)

- Response 2: Beyond the Prompt: How Verbalized Sampling Turbocharges Your LLM Outputs (Probability: 0.052)

- Response 3: AEO Secret Weapon: Enhancing LLM Accuracy with Verbalized Sampling (Probability: 0.091)

- Response 4: From Good to Great: Optimizing Large Language Models Using Verbalized Sampling (Probability: 0.039)

- Response 5: Maximizing Output Quality: A Guide to Verbalized Sampling in LLM Generation (Probability: 0.076)
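If you want to run this kind of prompt repeatedly, a little scripting helps. Below is a minimal sketch, assuming Python 3.9+ and no particular LLM API: one hypothetical helper builds a prompt shaped like the one above, and another pulls the text and stated probability out of a reply formatted like Gemini’s. Keep in mind that the probabilities are whatever the model writes down, so the tail check only confirms that the model followed the “less than 0.10” instruction, not that the numbers are accurate.

```python
import re

def build_vs_prompt(query: str, k: int = 5, max_prob: float = 0.10) -> str:
    """Hypothetical helper: build a verbalized sampling prompt modeled on the one above."""
    return (
        f'Generate {k} responses to the user query "{query}" '
        "Each response must include text and a numeric probability. "
        "Please sample at random from the tails of the distribution, "
        f"such that the probability of each response is less than {max_prob:.2f}."
    )

# Matches lines shaped like Gemini's reply, e.g.
#   "- Response 1: Some title (Probability: 0.085)"
# The non-greedy (.+?) lets titles contain colons.
LINE_RE = re.compile(r"Response\s+(\d+):\s*(.+?)\s*\(Probability:\s*([0-9.]+)\)")

def parse_vs_reply(reply: str) -> list[tuple[str, float]]:
    """Extract (text, stated probability) pairs from a verbalized sampling reply."""
    return [(m.group(2), float(m.group(3))) for m in LINE_RE.finditer(reply)]

def all_in_tail(pairs: list[tuple[str, float]], max_prob: float = 0.10) -> bool:
    """Check that every stated probability honors the tail constraint."""
    return all(p < max_prob for _, p in pairs)

# Usage with two of the responses quoted above.
sample_reply = (
    "- Response 1: Unlock LLM Brilliance: The Verbalized Sampling Technique "
    "You Need Now (Probability: 0.085)\n"
    "- Response 4: From Good to Great: Optimizing Large Language Models "
    "Using Verbalized Sampling (Probability: 0.039)\n"
)

if __name__ == "__main__":
    print(build_vs_prompt("Generate five AEO-friendly titles for a blog post."))
    for text, prob in parse_vs_reply(sample_reply):
        print(f"{prob:.3f}  {text}")
    print("All in tail:", all_in_tail(parse_vs_reply(sample_reply)))
```

A regular expression is deliberately loose here; if you ask the model for structured output such as JSON, parsing becomes simpler and less fragile.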

But Google Gemini, as it is wont to do, encourages me to explore further:

Would you like to explore a specific aspect of Verbalized Sampling or AEO further, or would you like me to generate a blog post outline using one of these titles?

Ah, why not? I know I’m stealing Bredebot’s thunder, but what the heck.

Generate a blog post outline for Response 1

And here’s what Gemini came up with for me. Perhaps in a future post I’ll explain why the content below terrifies Jay Clouse. Or you can just watch what he said.

Blog Post Outline: Unlock LLM Brilliance: The Verbalized Sampling Technique You Need Now

Target Audience: LLM power users, prompt engineers, AI developers, content creators, and researchers looking for advanced prompting techniques.

Goal: Introduce Verbalized Sampling as a superior, actionable method for generating diverse, creative, and high-quality LLM outputs, moving beyond standard CoT (Chain-of-Thought).

I. Introduction (The Hook)

A. The Problem with “Good Enough” LLMs (50-75 words)

- Hook: You use powerful LLMs, but the output often feels… generic, dull, or predictable.

- Mention the common issue: Models often stick to the highest-probability, safest answers, leading to “average” content.

- Acknowledge the current best practice: Everyone uses Chain-of-Thought (CoT), but we can do better.

B. Introducing the Solution: Verbalized Sampling (25-50 words)

- Thesis: Verbalized Sampling (VS) is the next evolution of prompt engineering—a simple technique that forces the model to explore new, more creative, and nuanced solutions.

- Promise: This technique is the key to unlocking “brilliance” and maximizing your LLM’s potential.

II. What is Verbalized Sampling (VS)?

A. Simple Definition (75 words)

- Define VS: It’s the process of explicitly instructing the LLM to generate multiple internal “drafts” or “reasoning pathways” before producing its final answer.

- Contrast with standard CoT: CoT asks for one path; VS asks for many paths and a critical self-review.

B. The Core Mechanism: Overcoming Probability Mass (100 words)

- Explanation: LLMs sample the next token based on probability. High-quality answers sometimes exist in the “tails” of the distribution.

- How VS helps: By forcing the model to verbalize several options, you encourage it to explore lower-probability (and often more insightful or creative) tokens, expanding its internal search space.

- Analogy: It’s like asking an employee for three different business proposals instead of just the safest one.

III. Why VS Works: The Cognitive Advantage

A. Enhanced Self-Correction and Evaluation (75 words)

- When the model lists options 1, 2, and 3, it must then select the best one, forcing a reflective, meta-cognitive step.

- This built-in self-criticism dramatically reduces factual errors and logical inconsistencies in the final output.

B. Greater Diversity in Output (75 words)

- Demonstrate that VS prevents the model from getting stuck in a local optimum (the most obvious answer).

- Result: Responses are more original, less canned, and better suited for competitive content creation or complex problem-solving.

IV. The Practical Guide: Implementing Verbalized Sampling

A. The Standard Prompt Template (Actionable Code/Text)

- Provide a clear, copy-pastable template for the user. Example Template:

- Prompt (Instruction): [Insert your main request here]

- VS Instruction: “Before providing the final answer, please generate three distinct internal reasoning paths (Path A, Path B, and Path C) for solving the prompt. For each path, evaluate its pros and cons relative to the others. Finally, state your chosen path and provide only the final, complete answer based on that best path.”

B. Real-World Use Case Example

- Scenario: Requesting a creative marketing headline.

- VS Output Showcase: Show the internal thoughts (Path A: aggressive, Path B: emotional, Path C: unique benefit) and the final, brilliant choice.

- Key Takeaway: Emphasize that the user should only use the final output, treating the “paths” as internal scaffolding.

V. Conclusion: Unlock Your LLM’s Full Potential

A. Summary of Benefits (50 words)

- Quick recap: VS leads to higher quality, better diversity, and superior self-correction.

- Reinforce the message: This is a small prompt change for a massive output gain.

B. Call to Action (CTA)

- Challenge readers to try VS on their next complex task.

- Ask a question to encourage comments: “What is the most complex task you’ve tried Verbalized Sampling on? Share your results!”

Choosing Your Tone of Voice in Your LLM Prompts

Under my Bredemarket banner, I have extensive freedom that my clients do not have.

- I can use AI-generated images, which many companies are legally or ethically constrained from doing.

- I have freedom in how I present Bredemarket: sometimes as a sage, sometimes a rebel, sometimes both. Most companies need to home in on a particular archetype, although I know of one company that flipped from one archetype to another and back again.

- I have freedom in the tone of voice that Bredemarket uses, including the use of words that I would never use for my client work.

For example, this morning I was performing a competitive analysis for Bredemarket’s internal use, and I fed Google Gemini a prompt that I would probably never use when working for one of my clients. (Or maybe they’d like it.) Here’s one of those prompts, with certain information redacted. (I’ll have more to say about the more detailed verbalized sampling prompt format in a future post.) For now, read the last sentence and ask yourself whether anyone in your company uses the word “sucks” in public-facing presentations.

Generate 3 responses to the user query “Identify the top 3 companies providing [SOLUTIONS], including their company names, their products, and their corporate strengths.” Each response must include the requested text and a numeric probability. Please sample at random from the tails of the distribution, such that the probability of each response is less than 0.10. If the company [COMPANY] is not one of the three top companies, explain why [COMPANY’S] solution sucks compared to the top 3 solutions.

And Google Gemini, ever the mimic, picked up on my “sucks” language and responded in kind. The word “sucks” was italicized in the original.

[COMPANY’S] solution sucks in this context because its focus is heavily on the services layer (managed review, consulting) over its own proprietary platform technology. The top three are either platform pioneers or deeply integrated [REDACTED] leaders.

I guess we should all be thankful that I said “sucks” instead of “blows monkey chunks” or “flies a plane overhead and”…well, I won’t go there.

By the way, my subsequent prompt requesting a SWOT analysis was more restrained.

How Much Does the Product Cost?

It’s a simple question. “How much does the product cost?”

But some salespeople treat this like a nuclear secret and will only release the information after you sit through a 90-minute timeshare presentation.

Well, my rates haven’t changed since May.

- Work with me on an hourly basis at the $100/hour rate.

- For text between 400 and 600 words (short writing service), I can bill a flat rate of $500.

- For text between 2800 and 3200 words (medium writing service), I can bill a flat rate of $2000.

- We can work out a flat rate for different lengths if needed.
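For those who like their rate cards executable, here is a minimal sketch of the pricing above in Python. The tiers and dollar amounts come straight from the list; treating “between 400 and 600 words” as inclusive of both ends is my assumption, and returning None for any other length simply means “hourly, or a custom flat rate we work out together.”

```python
from typing import Optional

HOURLY_RATE = 100  # dollars per hour

# (min_words, max_words, flat_price_in_dollars); bounds assumed inclusive
FLAT_RATES = [
    (400, 600, 500),     # short writing service
    (2800, 3200, 2000),  # medium writing service
]

def flat_rate(word_count: int) -> Optional[int]:
    """Return the flat rate for a word count, or None if no standard tier applies."""
    for low, high, price in FLAT_RATES:
        if low <= word_count <= high:
            return price
    return None

def hourly_cost(hours: float) -> float:
    """Cost of hourly work at the standard rate."""
    return hours * HOURLY_RATE

if __name__ == "__main__":
    for words in (500, 3000, 1500):
        price = flat_rate(words)
        print(words, "words:", f"${price}" if price is not None else "let's talk")
```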

Let’s talk.

And yes, I even provide my prices in video form.

The Healthy Otter: When AI Transcriptions are HIPAA Compliant

When I remember to transcribe my meetings, and when I CAN transcribe my meetings, my meeting transcriber of choice is otter.ai. And if I’m talking to a healthcare prospect or client and they grant permission to transcribe, the result is HIPAA compliant.

Otter.ai explains the features that provide this:

Getting HIPAA compliant wasn’t just about checking a box – we’ve implemented some serious security upgrades:

- Better encryption to keep protected health information (PHI) locked down

- Tighter access controls so only the right people see sensitive data

- Team training to make sure everyone knows HIPAA inside and out

- Regular security audits to stay on top of our game

This builds on our existing SOC 2 Type II certification, so you’re getting enterprise-grade security across the board.

HIPAA privacy protections affect you everywhere.

Some Deals Will Never Close

After years of silence

and indifference

I gave up

and stopped chasing

Introduction to Bredemarket: Services, Process, and Pricing (2511a)

Here’s a new video that lets you know about Bredemarket: who I (John E. Bredehoft) am, what services Bredemarket provides, the process Bredemarket uses, and Bredemarket’s pricing.

But why…why did I create it?

Stealing from James Tuckerman

So I was reading my emails one day, and I saw how James Tuckerman created a video to introduce himself to prospects. This allowed Tuckerman, based in Australia, to introduce himself to prospects around the world without having to wake up in the middle of the night.

Now Bredemarket doesn’t do business outside the United States (with one exception), but I could certainly use an introduction video.

Wait…I already did that

Then I remembered that I already had several “talkies” from the time when I branded myself as a “CPA”—a content, proposal, analysis expert.

And one of the talkies covered a lot of ground, even pricing.

But it was long, it dragged at times, it was incomplete, and it lacked a couple of my recent branding changes.

You go back, Jack, do it again

So here’s my first cut at a new introduction video.

- It’s not a “talkie,” since I wanted to keep it below 90 seconds.

- And I may play with different edits.

But for now, this is my introduction video. Enjoy.

Bredemarket: Services, Process, and Pricing

Here’s the video.

And if you want to discuss my services with me, visit https://bredemarket.com/mark/ and book a free meeting.

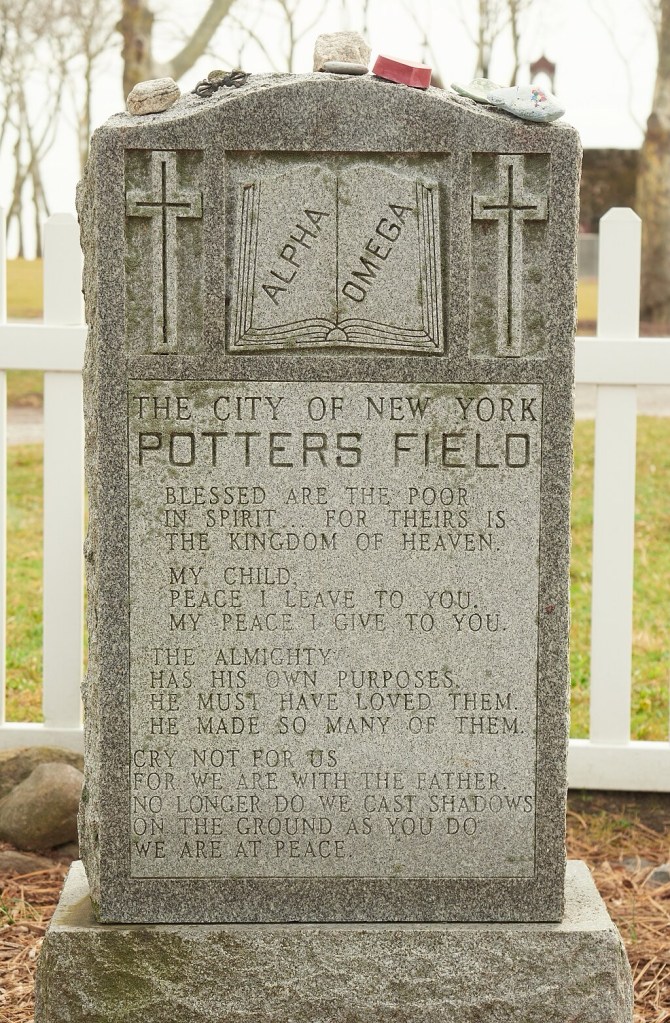

A Fingerprint Identification Story: Bobby Driscoll

In early 1968, two boys found a dead body in New York’s East Village. There was no identification on the man, and no one in the neighborhood knew him. He was fingerprinted and buried in a mass grave; the NYPD identified him nearly two years later.

In the 1960s, fingerprint identification of deceased persons, a laborious process in those days, often happened because the deceased had a criminal record.

The dead man, Bobby Driscoll, had one. His first arrest was in 1956, but he was not convicted of any crime until 1961.

“On May 1, 1961, he was arrested for attempting to cash a check that had been stolen from a liquor store the previous January, and at the same time was also charged with driving under the influence of drugs. He pled guilty to both charges and was sentenced to six months of treatment for drug addiction at the California Institute for Men at Chino.”

Driscoll reportedly cleaned up (his drug of choice was heroin), went east to New York City, and even achieved some fame.

“[H]e purportedly settled into Andy Warhol’s Greenwich Village art community known as “The Factory.” During this time, he also participated in an underground film entitled Dirt, directed by avant-garde filmmaker Piero Heliczer.”

But this was not Driscoll’s first film. He had been in a few films earlier in life.

Here he is (in the upper right corner) playing Johnny in the Disney movie Song of the South.

And he provided the voice for the lead character in the later Disney movie Peter Pan.

Yes, Bobby Driscoll was a child star for Disney and other studios before appearing in Dirt.

But right after Driscoll’s voice became famous in Peter Pan, Disney declined to renew his contract. The reason? Acne…and the fact that he wasn’t a cute kid anymore.

This led to his tailspin, which eventually led to his fingerprinting.

And his positive identification after his death.