Because I have talked about differentiation ad nauseam, I’m always looking for ways to see how identity/biometric and technology vendors have differentiated themselves. Yes, almost all of them overuse the word “trust,” but there is still some differentiation out there.

And I found a source that measures differentiation (or “unique positioning”) in various market segments. Using this source, I chose to concentrate on vendors that specialize in identity verification (or “identity proofing & verification,” but close enough).

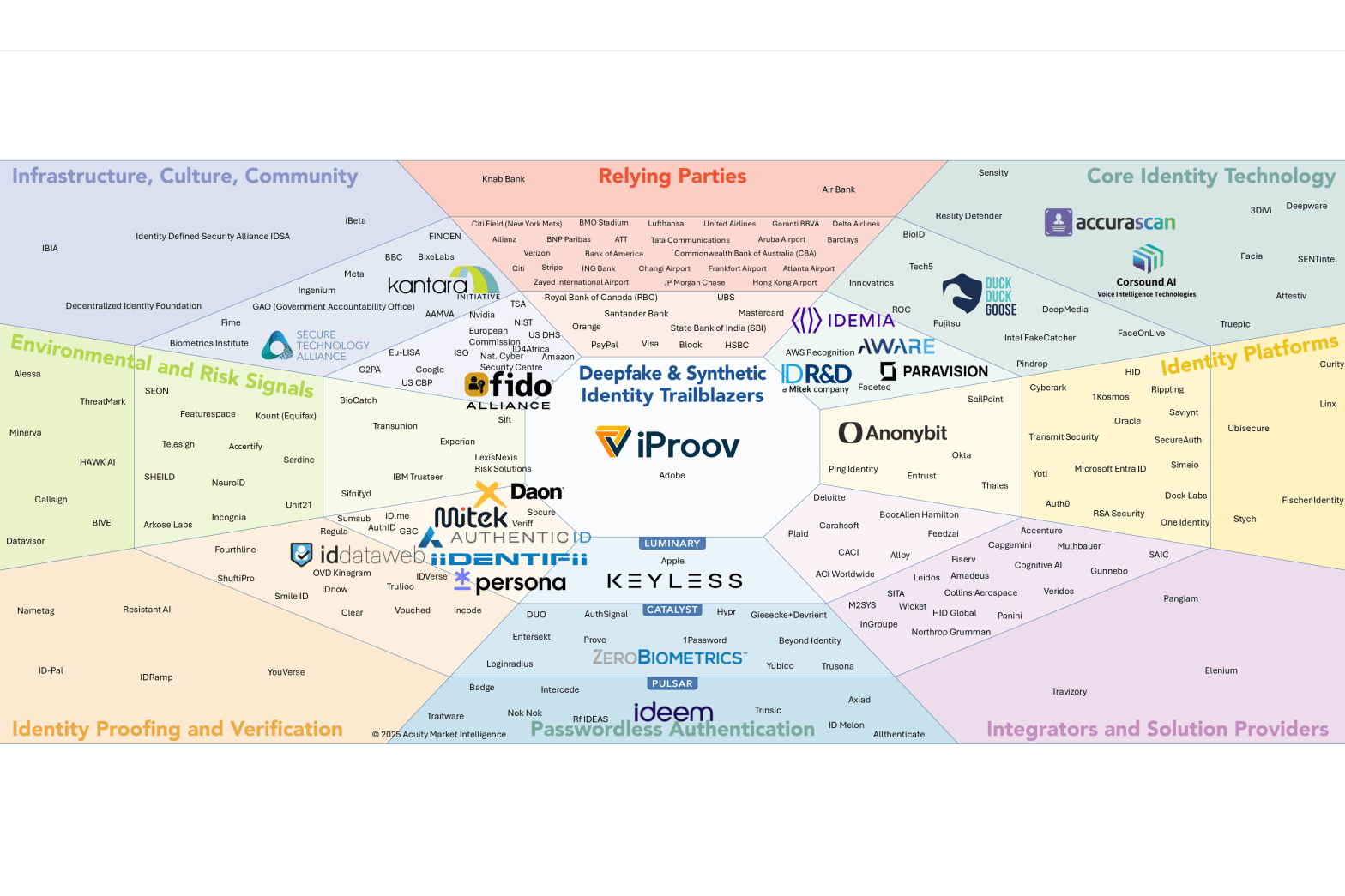

My source? The recently released “Biometric Digital Identity Deepfake and Synthetic Identity Prism Report” from The Prism Project, which you can download here by providing your business address.

Before you read this, I want to caution you that this is NOT a thorough evaluation of The Prism Project deepfake and synthetic identity report. After some preliminaries, it focuses on one small portion of the report, concentrating on ONLY one “beam” (IDV) and ONLY one evaluation factor (differentiation).

Four facts about the report

First, the report is comprehensive. It’s not merely a list of ranked vendors, but also provides a, um, deep dive into deepfakes and synthetic identity. Even if you don’t care about the industry players, I encourage you to (a) download the report, and (b) read the 8-page section entitled “Crash Course: The Identity Arms Race.”

- The crash course starts by describing digital identity and the role that biometrics plays in digital identity. It explains how banks, government agencies, and others perform identity verification; we’ll return to this later.

- Then it moves on to the bad people who try to use “counterfeit identity elements” in place of “authentic identity elements.” The report discusses spoofs, presentation attacks, countermeasures such as multi-factor authentication, and…

- Well, just download the report and read it yourself. If you want to understand deepfakes and synthetic identities, the “Crash Course” section will educate you quickly and thoroughly, as will the remainder of the report.

Second, the report is comprehensive. Yeah, I just said that, but it’s also comprehensive in the number of organizations that it covers.

- In a previous life I led a team that conducted competitive analysis on over 80 identity organizations.

- I subsequently encountered others who estimated that there are over 100 organizations.

- This report evaluates over 200 organizations. In part this is because it includes evaluations of “relying parties” that are part of the ecosystem. (Examples include Mastercard, PayPal, and the Royal Bank of Canada, which obviously don’t want to do business with deepfakes or synthetic identities.) Still, the report is amazing in its organizational coverage.

Third, the report is comprehensive. In a non-lunatic way, the report categorizes each organization into one or more “beams”:

- The aforementioned relying parties

- Core identity technology

- Identity platforms

- Integrators & solution providers

- Passwordless authentication

- Environmental risk signals

- Infrastructure, community, culture

- And last but first (for purposes of this post), identity proofing and verification.

Fourth, the report is comprehensive. Yes, I’m repetitive, but each of the 200+ organizations is evaluated on a 0-6 scale based upon seven factors. In listed order, they are:

- Growth & Resources

- Market Presence

- Proof Points

- Unique Positioning, defined as “Unique Value Proposition (UVP) along with diferentiable technology and market innovation generally and within market sector.”

- Business Model & Strategy

- Biometrics and Document Authentication

- Deepfakes & Synthetic Identity Leadership

In essence, the wealth of data makes this report look like a NIST report: there are so many individual “slices” of the prism that every one of the 200+ organizations can make a claim about how it was recognized by The Prism Project. And you’ve probably already seen some organizations make such claims, just like they do whenever a new NIST report comes out.

So let’s look at the tiny slice of the prism that is my, um, focus for this post.

Unique positioning in the IDV slice of the Prism

So, here’s the moment all of you have been waiting for. Which organizations are in the Biometric Digital Identity Deepfake and Synthetic Identity Prism?

Yeah, the text is small. Told you there were a lot of organizations.

For my purposes I’m going to concentrate on the “identity proofing and verification” beam in the lower left corner. But I’m going to dig deeper.

In the illustration above, organizations are nearer or farther from the center based upon their AVERAGE score for all 7 factors I listed previously. But because I want to concentrate on differentiation, I’m only going to look at the identity proofing and verification organizations with high scores (between 5 and the maximum of 6) for the “unique positioning” factor.

I’ll admit my methodology is somewhat arbitrary.

- There’s probably no great, um, difference between an organization with a score of 4.9 and one with a score of 5. But you can safely state that an organization with a “unique positioning” score of 2 isn’t as differentiated as one with a score of 5.

- And this may not matter. For example, iBeta (in the infrastructure, community, culture beam) has a unique positioning score of 2, because a lot of organizations do what iBeta does. But at the same time iBeta has a biometric commitment of 4.5. They don’t evaluate refrigerators.
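For anyone who wants to repeat this slicing on their own copy of the scores, here is a minimal Python sketch of the filter I just described: keep only the organizations in one beam whose unique positioning score falls between 5 and 6. Everything in it is an assumption for illustration. The `OrgScore` class, the `highly_differentiated` function, and the placeholder organizations and scores are hypothetical and are NOT taken from the report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgScore:
    """One organization's score for a single factor (hypothetical structure)."""
    name: str
    beam: str
    unique_positioning: float  # the report scores each factor on a 0-6 scale


IDV_BEAM = "Identity proofing and verification"


def highly_differentiated(orgs, beam=IDV_BEAM, low=5.0, high=6.0):
    """Return names of organizations in `beam` scoring between `low` and `high`, inclusive."""
    return [
        org.name
        for org in orgs
        if org.beam == beam and low <= org.unique_positioning <= high
    ]


# Placeholder data only; these are NOT real scores from the report.
sample = [
    OrgScore("Placeholder A", IDV_BEAM, 5.5),
    OrgScore("Placeholder B", IDV_BEAM, 4.9),
    OrgScore("Placeholder C", "Infrastructure, community, culture", 2.0),
    OrgScore("Placeholder D", IDV_BEAM, 5.0),
]

shortlist = highly_differentiated(sample)
print(shortlist)  # ['Placeholder A', 'Placeholder D']
```

Note how Placeholder B misses the cut at 4.9, which is exactly the arbitrariness I admitted to above. Change `low` and the shortlist changes with it.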

So, here’s my list of identity proofing and verification organizations that scored between 5 and 6 for the unique positioning factor:

- ID.me

- iiDENTIFii

- Socure

According to the report, these three identity verification companies have offerings that differentiate them from others in the pack.

Although I’m sure the other identity verification vendors can be, um, trusted.

Oh, by the way…did I remember to suggest that you download the report?