For everyone except colorblind people, color in dashboards makes information more accessible, particularly in populations where green means “good” and red means “bad.”

(Even if your name IS Bamber.)

The National Institute of Standards and Technology understands the importance of consistent colors, having worked on traffic light colors since the National Bureau of Standards days (PDF).

For more modern applications such as biometrics, NIST recently added color coding to one of the tabs in its “Face Recognition Technology Evaluation (FRTE) 1:N Identification” results: the “Demographics: False Positive Dependence” tab.

The change, announced in an email, is as follows:

“The false positive identification error rate tables now include color-coding to highlight anomalously high values.”

In this context, “anomalously high” is bad, or red. (Actually dark pink, but close enough.)

But let’s explain WHY and HOW NIST made this change.

Why does NIST highlight demographic false positive dependence?

NIST has of course explored the demographic effects of face recognition for years, and the “Demographics: False Positive Dependence” tab provides additional tracking for this.

Why does NIST do this?

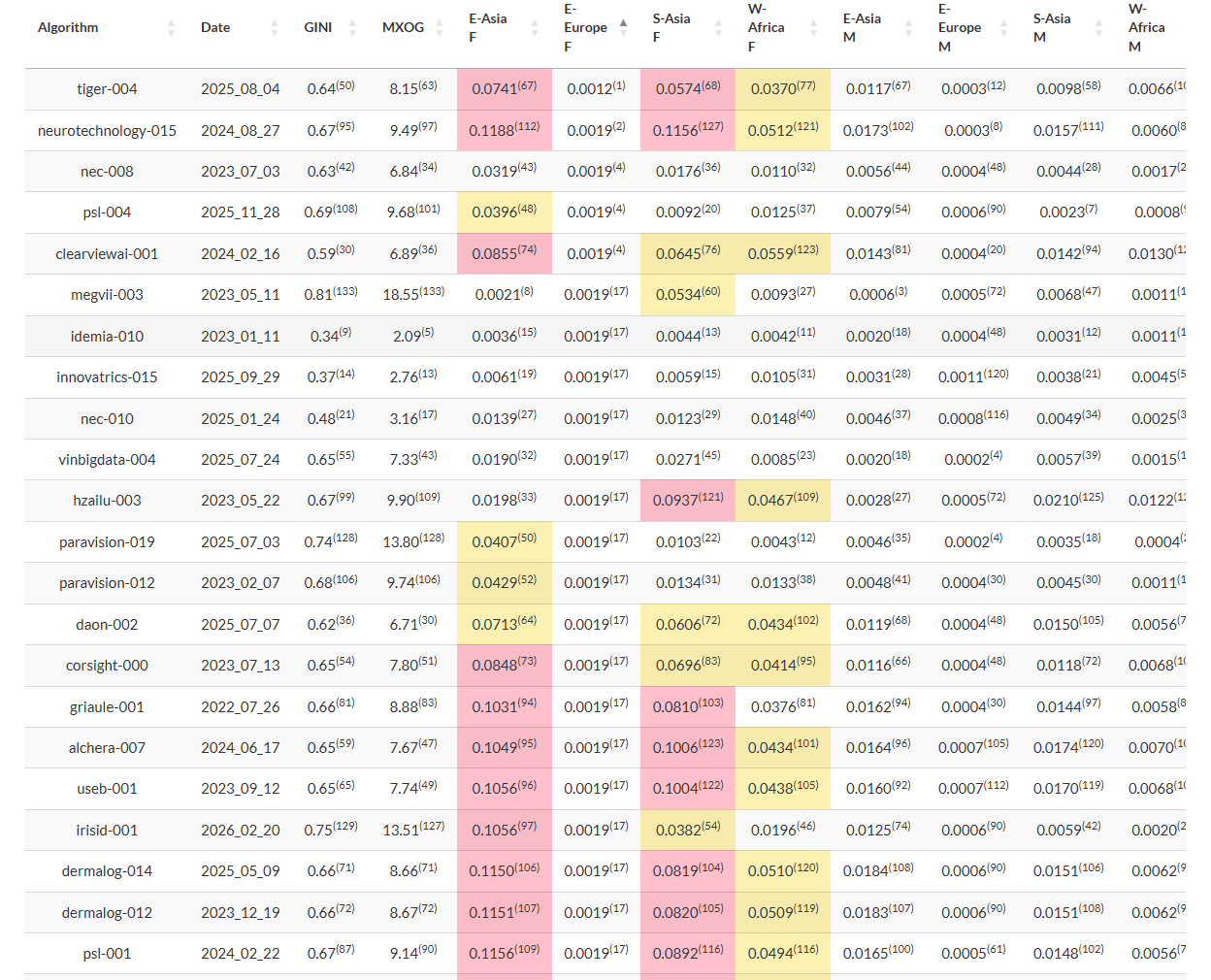

“False positives occur when searches return wrong identities. Such outcomes have application-dependent consequences, which can be serious.”

Serious as in arresting and jailing innocent people. I previously mentioned Robert Williams.

How does NIST highlight demographic false positive dependence?

Anyway, NIST created the “Demographics: False Positive Dependence” tab.

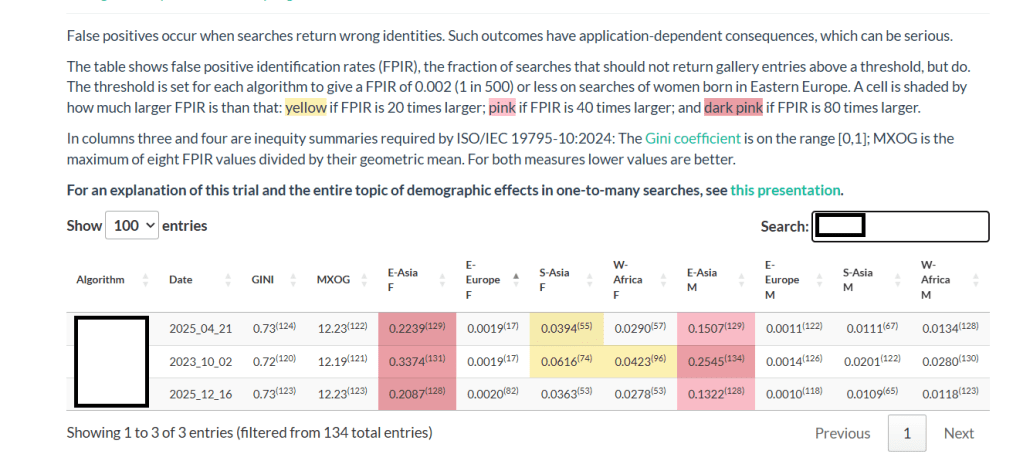

“The table shows false positive identification rates (FPIR), the fraction of searches that should not return gallery entries above a threshold, but do. The threshold is set for each algorithm to give a FPIR of 0.002 (1 in 500) or less on searches of women born in Eastern Europe.”
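To make that definition concrete, here is a minimal sketch of the arithmetic, not NIST's actual code. It assumes we already have the top similarity score from each search that should NOT match anyone in the gallery. The function name `fpir` and the scores are hypothetical, and treating a score equal to the threshold as a false positive is my assumption.

```python
def fpir(top_scores, threshold):
    """Fraction of non-mated searches whose best gallery score reaches the threshold.

    top_scores: highest similarity score returned for each search whose subject
                is NOT in the gallery (illustrative input, not NIST data).
    """
    if not top_scores:
        raise ValueError("need at least one non-mated search")
    # Assumption: a score exactly at the threshold counts as a false positive.
    false_positives = sum(1 for score in top_scores if score >= threshold)
    return false_positives / len(top_scores)


# Four hypothetical searches, one of which wrongly clears a 0.5 threshold.
print(fpir([0.1, 0.9, 0.3, 0.2], threshold=0.5))  # 0.25
```

In the NIST table the threshold is not chosen freely: it is tuned per algorithm until this number is 0.002 or less for the baseline population.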

And for algorithms that have “anomalously high values” in other demographic populations, NIST has added the color coding.

“A cell is shaded by how much larger FPIR is than that: yellow if FPIR is 20 times larger; pink if FPIR is 40 times larger; and dark pink if FPIR is 80 times larger.”
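The shading rule is simple enough to sketch in a few lines of Python. This is my reading of the quote, not NIST's code: I assume "that" means the 0.002 target, and that "20 times larger" means a ratio of at least 20. The names `shade_for` and `SHADE_BANDS` and the sample FPIR values are hypothetical.

```python
REFERENCE_FPIR = 0.002  # 1 in 500, the baseline target quoted above

# (multiplier, shade), checked from the most severe band down.
SHADE_BANDS = [(80, "dark pink"), (40, "pink"), (20, "yellow")]


def shade_for(fpir, reference=REFERENCE_FPIR):
    """Return the cell shade for an FPIR value, or None if the cell is unshaded."""
    ratio = fpir / reference
    for multiplier, shade in SHADE_BANDS:
        # Assumption: "N times larger" is read as ratio >= N.
        if ratio >= multiplier:
            return shade
    return None


# Illustrative values only, not actual NIST results.
for value in (0.01, 0.05, 0.1, 0.2):
    print(value, shade_for(value))
```

Under these assumptions an FPIR of 0.05 (25 times the target) comes out yellow, 0.1 (50 times) pink, and 0.2 (100 times) dark pink, while 0.01 (5 times) stays unshaded.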

What does the highlighting look like?

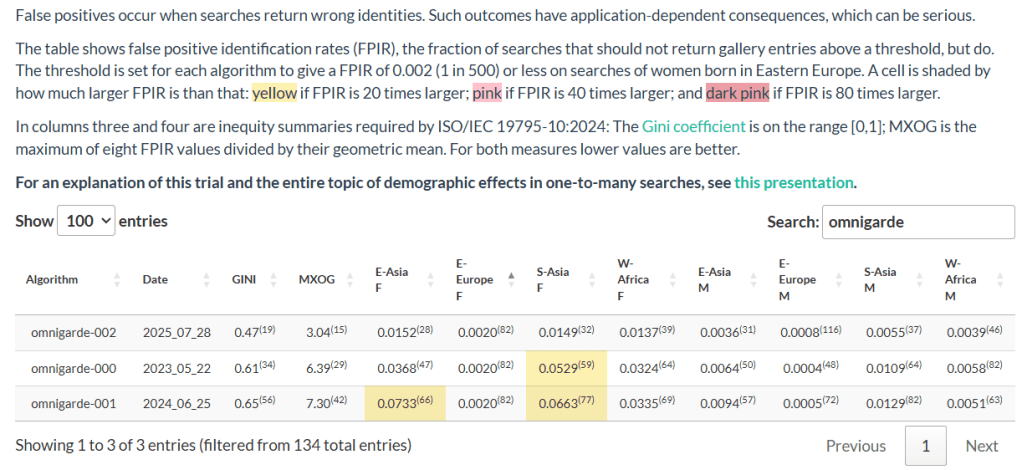

Let me illustrate this with the results from the three algorithms Omnigarde submitted.

- Omnigarde’s first two algorithms, submitted in 2023 and 2024, exhibited high FPIR values for South Asian females, and the second algorithm also exhibited a high FPIR value for East Asian females. See the color coding.

- The third algorithm, submitted in 2025, had lower FPIR values for these populations and thus no yellow color coding.

Even the less-stellar algorithms show improvement over time.

Final thoughts

Both vendors and customers/prospects can rightfully question whether this is helpful or hurtful. I lean toward “helpful,” because if the facial recognition algorithm you use produces high false positive rates for certain populations, you need to know.

And as always, law enforcement in the United States should NEVER rely solely on facial recognition results as the basis for an arrest…even for Eastern European females. Those results should ONLY be an investigative lead.

In the meantime, take care of yourself, and each other.