My thoughts. Or our thoughts.

Tag Archives: deepfake

Two Footballs, Two Biscuits, Two Presidents: A Cybersecurity Nightmare.

Last year I wrote about a biscuit and a football, but I wasn’t talking about the snack spread on game day.

I was talking about the tools the United States President uses (as Commander-in-Chief) for identity verification to launch a nuclear attack.

But sometimes you have to pass the football. If the President is temporarily or permanently incapacitated in an attack, the Vice President also has a football and a biscuit. Normally the Vice President’s biscuit isn’t activated, but when certain Constitutional criteria are met it becomes operative.

Other than this built-in redundancy, the system assumes one football, one biscuit, and one President.

If you’re a cybersecurity expert, you know this assumption is the assumption of a fool.

- It is not impossible to have duplicate functional footballs and duplicate functional biscuits.

- And it is not impossible to have duplicate functional Presidents, with identical face, voice, finger, and iris biometrics. Yes, it’s highly unlikely, but it’s not impossible. If the target is important enough, adversaries will spend the money.

And most of us will never know the answer to this question, but how do government cybersecurity experts prevent this?

Deepfake Squared

What if TWO fraudsters go after a real person at once?

On Misteaks

This morning I loudly proclaimed that three companies had received independent assessments of conformance to Level 3 Presentation Attack Detection (liveness detection).

This is important, because Level 3 conveys an enhanced certainty that the face the software sees (the face that is “presented”) is a real face and not some type of deepfake.

And as I loudly proclaimed, products from three companies had received the Level 3 designation.

But I was wrong.

As I noted several hours later, FOUR companies have received that PAD Level 3 designation: Aware, FaceTec, Paravision, and Yoti.

There are three ways to correct a mistake:

- Don’t. Keep the incorrect information.

- Quietly correct the mistake without admitting it. Change “three” to “four,” and you’re done with no one the wiser.

- Admit the mistake. “Yeah, I originally said three, but it’s really four.”

I chose the third option, just in case someone remembers that I initially said three.

A Live Soul Performance

Some of you knew these deepfake thoughts were coming. Might as well finish side one.

When we last met, I had discussed the other two tracks from side one of Elton John’s “Goodbye Yellow Brick Road.” Of course albums no longer have sides, but these three tracks/four songs give an idea of the breadth of Elton John’s first double album.

But this isn’t merely a music review. It also has to satisfy an Important Purpose. So this post about “Bennie and the Jets” will talk about…deepfakes.

Is it live?

After all the negativity that started the album, it was time for a little joy.

A happy song about a band.

About fans who read things in magazines.

All topped off by Elton’s charged performance in front of a live, cheering audience.

Um…no.

While Elton John has given many live performances, the recording of “Bennie and the Jets” is not one of them.

“Elton’s producer Gus Dudgeon wanted a live feel on this recording, so he mixed in crowd noise from a show Elton played in 1972 at Royal Festival Hall. He also included a series of whistles from a live concert in Vancouver B.C., and added hand claps and various shouts.”

But in retrospect, I can’t imagine the song as a straight studio recording. Dudgeon was right: it HAD to sound live.

And that “live” performance yielded a bigger surprise…and Reginald Dwight was most surprised of all.

Reginald Dwight, soul god

For those who don’t know who Reginald Dwight is, he was born in 1947 in an English town. A shy boy whose parents divorced in his teens, the most notable aspect of his life was his piano talent. He obviously wasn’t going to become a banker as his father originally intended.

Oh, and one more thing. Dwight was Caucasian. And all of his reinventions, including his adoption of the stage name Elton John, weren’t going to alter that.

But Elton was pretty fly for a white guy. Worked his way up the musical ladder until he achieved success on his own. First with some notable performances, then some hit albums, then some number ones.

Reg could not have predicted what happened next. But first, let’s examine the relationship between “Bennie” and its album predecessor.

“When Elton recorded “Bennie And The Jets,” MCA, his label, wanted to make the song a single. Elton disagreed vociferously. His pick was “Candle In The Wind”…”

What persuaded Elton to change his mind…at least in the North American market?

“But Elton was swayed when “Bennie And The Jets” started doing well on Detroit R&B radio.”

Yes, R&B. “Bennie and the Jets” reached #15 on Billboard’s R&B charts. Which both pleased and amused Elton.

“What am I going to do on my next American tour? Play the Apollo for a week, open with ‘Bennie,’ and then say, ‘Thanks, you can all go home now.'”

If only there were a forum where he could play a single song for a large black audience…

Don Cornelius was all too happy to provide the forum.

Elton John wasn’t the first white artist to perform on Soul Train, but he was one of the few.

Technically this wasn’t a deepfake; Elton John didn’t pretend to be black. But he clearly absorbed some distinct musical influences: Little Richard, Ray Charles, Aretha Franklin, Fats Domino, Otis Redding, Nina Simone, Marvin Gaye, Wilson Pickett, James Brown, Stevie Wonder, The Temptations, Martha Reeves, Clyde McPhatter, and Sam Cooke. (Google Gemini helped me assemble this list.)

And he wasn’t a one-hit wonder. Subsequent Philadelphia-influenced songs “Philadelphia Freedom” and “Mama Can’t Buy You Love” also made the R&B top 40.

Compare with Mick Jagger, who rarely made the R&B charts either as a Rolling Stone or as a solo artist. “Miss You” did, but Jagger’s highest placement was when he guested on the Jacksons’ “State of Shock.”

On Adversarial Gait Recognition

You’ve presumably noticed the multiple biometrics on display in my “landscape” reel. Fingers, a face, irises, a voice, veins, and even DNA.

But you may have missed one.

Gait.

I still remember the time that I ran into a former coworker from my pre-biometric years, and she said she recognized me by the way I walk.

But it’s no surprise that gait recognition is susceptible to spoofing.

“[A]dversarial Gait Recognition has arisen as a major challenge in video surveillance systems, as deep learning-based gait recognition algorithms become more sensitive to adversarial attacks.”

So Zeeshan Ali and others are working on IMPROVING gait-based adversarial attacks…the better to counter them.

“Our technique includes two major components: AdvHelper, a surrogate model that simulates the target, and PerturbGen, a latent-space perturbation generator implemented in an encoder-decoder framework. This design guarantees that adversarial samples are both effective and perceptually realistic by utilizing reconstruction and perceptual losses. Experimental results on the benchmark CASIA-gait dataset show that the proposed method achieves a high attack success rate of 94.33%.”
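To make the surrogate idea a little more concrete, here is a toy sketch in Python. It is NOT the AdvHelper/PerturbGen design from the paper (no encoder-decoder, no latent space, no perceptual loss). It swaps in a much simpler sign-based perturbation against a made-up linear "matcher," and every name and number in it (`target_w`, `surrogate_w`, `epsilon`, the three-feature sample) is a hypothetical I invented for illustration. The point is only that an attacker who can't see the target model can still fool it by attacking a close-enough stand-in.

```python
def score(weights, bias, x):
    """Linear matcher: a positive score means 'this gait matches'."""
    return sum(w * v for w, v in zip(weights, x)) + bias

def sign(v):
    return (v > 0) - (v < 0)

def perturb(x, surrogate_weights, epsilon):
    """Nudge each feature by epsilon in the direction that lowers the SURROGATE's score."""
    return [v - epsilon * sign(w) for v, w in zip(x, surrogate_weights)]

# Hypothetical target model; the attacker never gets to look at these weights.
target_w, target_b = [0.9, -0.4, 0.7], -0.1
# Hypothetical surrogate: the attacker's rough approximation of the target.
surrogate_w = [1.0, -0.5, 0.6]

sample = [0.3, 0.2, 0.1]                      # made-up gait features
adversarial = perturb(sample, surrogate_w, epsilon=0.2)

before = score(target_w, target_b, sample)       # target says "match"
after = score(target_w, target_b, adversarial)   # target now says "no match"
```

The perturbation was computed from the surrogate alone, yet the target's decision flips. A real attack also has to keep the altered video looking like a plausible person walking, which is what the paper's reconstruction and perceptual losses are for.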

Now we need to better detect these adversarial attacks.

Let’s Talk About Occluded Face Expression Reconstruction

ORFE, OAFR, ORecFR, OFER. Let’s go!

As you may know, I’ve often used Grok to convert static images to 6-second videos. But I’ve never tried to do this with an occluded face, because I feared I’d probably fail. Grok isn’t perfect, after all.

Facia’s 2024 definition of occlusion is “an extraneous object that hinders the view of a face, for example, a beard, a scarf, sunglasses, or a mustache covering lips.” Facia also mentions the COVID practice of wearing masks.

Occlusion limits the data available to facial recognition algorithms, which has an adverse effect on accuracy. At the time, “lower chin and mouth occlusions caused an inaccuracy rate increase of 8.2%.” Occlusion of the eyes naturally caused greater inaccuracies.

So how do we account for occlusions? Facia offers three tactics:

- Occlusion Robust Feature Extraction (ORFE)

- Occlusion Aware Facial Recognition (OAFR)

- Occlusion Recovery-Based Facial Recognition (ORecFR)

But those acronyms aren’t enough, so we’ll add one more.

At the 2025 Computer Vision and Pattern Recognition conference, a group of researchers led by Pratheba Selvaraju presented a paper entitled “OFER: Occluded Face Expression Reconstruction.” This gives us one more acronym to play around with.

Here’s the abstract of the paper:

“Reconstructing 3D face models from a single image is an inherently ill-posed problem, which becomes even more challenging in the presence of occlusions. In addition to fewer available observations, occlusions introduce an extra source of ambiguity where multiple reconstructions can be equally valid. Despite the ubiquity of the problem, very few methods address its multi-hypothesis nature. In this paper we introduce OFER, a novel approach for single-image 3D face reconstruction that can generate plausible, diverse, and expressive 3D faces, even under strong occlusions. Specifically, we train two diffusion models to generate a shape and expression coefficients of face parametric model, conditioned on the input image. This approach captures the multi-modal nature of the problem, generating a distribution of solutions as output. However, to maintain consistency across diverse expressions, the challenge is to select the best matching shape. To achieve this, we propose a novel ranking mechanism that sorts the outputs of the shape diffusion network based on predicted shape accuracy scores. We evaluate our method using standard benchmarks and introduce CO-545, a new protocol and dataset designed to assess the accuracy of expressive faces under occlusion. Our results show improved performance over occlusion-based methods, while also enabling the generation of diverse expressions for a given image.”

Cool. I was just writing about multimodal for a biometric client project, but this is a different meaning altogether.

In my non-advanced brain, the process of creating multiple options and choosing the one with the “best” fit (however that is defined) seems promising.

Although Grok didn’t do too badly with this one. Not perfect, but pretty good.
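For the curious, the "create multiple options and pick the best fit" idea can be sketched in a few lines of Python. This is only a toy under loud assumptions: OFER samples candidates from diffusion models and ranks them with a learned network, whereas this sketch fills the hidden values with plain random guesses and ranks them with a hand-written smoothness score. The function names, the five-value "face," and the scoring rule are all my own inventions, not anything from the paper.

```python
import random

def sample_candidates(observed, n, rng):
    """Build n candidates: keep visible values, guess each occluded (None) slot."""
    return [
        [v if v is not None else rng.uniform(0.0, 1.0) for v in observed]
        for _ in range(n)
    ]

def smoothness_score(candidate):
    """Toy stand-in for a learned ranker: fewer abrupt jumps scores higher."""
    return -sum((a - b) ** 2 for a, b in zip(candidate, candidate[1:]))

def best_candidate(observed, n=50, seed=0):
    """Generate n hypotheses, then return the one the scorer ranks highest."""
    rng = random.Random(seed)
    candidates = sample_candidates(observed, n, rng)
    return max(candidates, key=smoothness_score)

# None marks the occluded region (think: a scarf over the middle of the face).
observed = [0.2, 0.3, None, None, 0.6]
best = best_candidate(observed)
```

The visible values pass through untouched, and only the occluded slots vary between candidates. Everything interesting lives in the scoring function, which is why the "however that is defined" caveat matters so much.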

New Hank

Obviously fake. Created in response to this Threads reply to the question “Do you happen to know any old Hank Williams songs?”

“As opposed to new ones?”

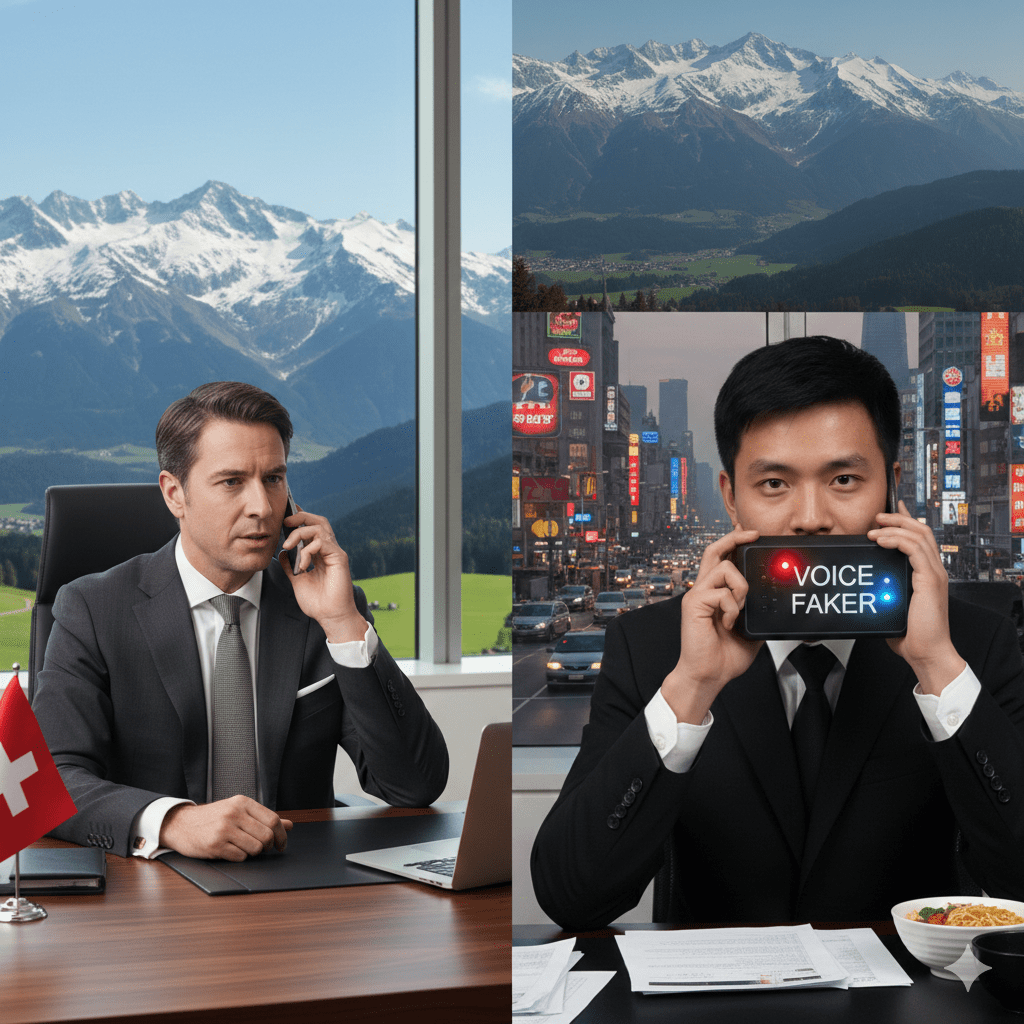

Another Voice Deepfake Fraud Scam

Time for another voice deepfake scam.

This one’s in Schwyz, in Switzerland, which makes reading the original story somewhat difficult. But we can safely say that “Eine unbekannte Täterschaft hat zur Täuschung künstliche Intelligenz eingesetzt und so mehrere Millionen Franken erbeutet” is NOT a good thing.

And that’s millions of Swiss francs, not millions of Al Frankens.

Luckily, someone at Biometric Update speaks German well enough to get the gist of the story.

“Deploying audio manipulated to sound like a trusted business partner, fraudsters bamboozled an entrepreneur from the canton of Schwyz into transferring “several million Swiss francs” to a bank account in Asia.”

And what do the canton police recommend? (Google Translated)

“Be wary of payment requests via telephone or voice message, even if the voice sounds familiar.”

On Acquired Identities

Most of my discussions regarding identity assume the REAL identity of a person.

But what if someone acquires the identity of another? For example, when the late Steve Bridges impersonated George W. Bush?

Or better still, what about when multiple people adopt an identity?

And by the way, Charlie Chaplin said that he NEVER entered a Charlie Chaplin lookalike contest…and came in third.

Of course, these assumed identities require alterations that liveness detection should detect.

As a biometric product marketing expert should know.