(Welcome to my A/B test. For the other version of this post, click here.)

I feel like Ben Maller, a sports radio broadcaster who likes to take three seemingly unrelated phrases and spin them into a monologue.

For this post, the three phrases are “common sense,” “succinct,” and “expert juror testimony.”

I want to (succinctly) pose the question, “Is ‘common sense’ enough for a jury to consider expert testimony?”

Common Sense

Mitch Wagner recently shared a New Yorker article by Matthew Hutson that claimed that artificial intelligence was lacking in “common sense.” Here is one of the examples cited in the article:

By definition, common sense is something everyone has; it doesn’t sound like a big deal. But imagine living without it and it comes into clearer focus. Suppose you’re a robot visiting a carnival, and you confront a fun-house mirror; bereft of common sense, you might wonder if your body has suddenly changed.

From https://www.newyorker.com/tech/annals-of-technology/can-computers-learn-common-sense

But is the human ability to discern that one’s body was NOT modified truly “common sense”? I argued otherwise in a comment on Wagner’s LinkedIn post.

But is there really a difference between “common sense” and the data acquisition that AI engines perform?

Take the fun-house mirror example in the article. When I first encountered a fun-house mirror, I would either (a) think like a robot and wonder if my body had changed, or (b) draw upon a vast reservoir of past experiences with mirrors and bent objects to deduce that this particular mirror had properties that would display my body in a different way. A person who had never encountered a mirror or a bent object would not have the “common sense” to realize how a fun-house mirror operates.

From https://www.linkedin.com/feed/update/urn:li:activity:6917849217554100225/?commentUrn=urn%3Ali%3Acomment%3A%28activity%3A6917849217554100225%2C6917865639671980032%29

Perhaps I’m wrong, but it seems that everyone takes cues from their past experiences (touching a hot stove, or watching “Perry Mason” or “C.S.I.” on TV), and that helps form our “common sense.”

Succinct

If you read my original comment on Wagner’s post, you’ll notice that I only reproduced the first part here, since the other parts weren’t relevant to this post.

Concise writing is something that Becca Phengvath of Robin Writers reminded us about in a recent post. She started by telling a story about a meeting with one of her college English teachers to review a writing assignment.

“This is good,” she said. But I felt her preparing to say more.

“But look what happens when you remove ‘able to’,” she added, pointing to several spots where I wrote the phrase. “It’s never really needed, see?”

She read a few of my sentences with “able to” omitted and I was shocked at how it immediately strengthened my writing. Since then, I have very very rarely used that phrase in my writing thanks to her.

From https://robinwriters.com/2022/04/07/how-to-make-your-writing-more-concise/

I liked this post so much that I shared it on various social accounts, with the following comment:

I really need to do this more often.

I should do this more.

Expert Juror Testimony

Now let’s get to the meat of the post. (I could have left the first two parts of the post out, I guess, but I wanted to provide some background.)

As I’ve previously noted, the 2009 NAS report on forensic science has revolutionized fingerprint identification and a number of other forensic disciplines.

One part of this revolution was the Department of Justice 2018 document entitled “UNIFORM LANGUAGE FOR TESTIMONY AND REPORTS FOR THE FORENSIC LATENT PRINT DISCIPLINE.” A PDF version of the report can be found here.

This document applies to Department of Justice examiners who are authorized to prepare reports and provide expert witness testimony regarding the forensic examination of latent print evidence.

From https://www.justice.gov/olp/page/file/1083691/download

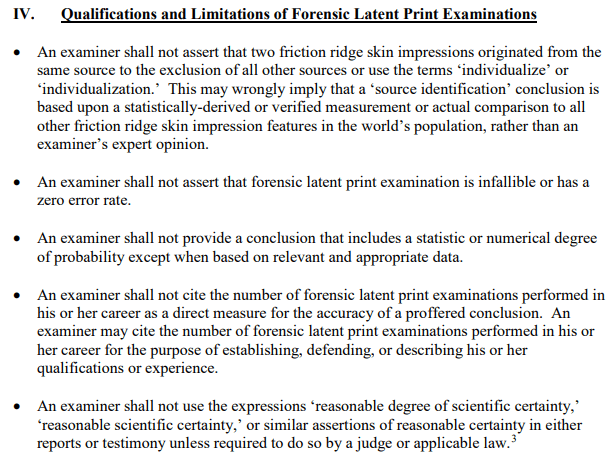

Based upon the NAS report and subsequent efforts, Part IV of the DOJ document (“Qualifications and Limitations of Forensic Latent Print Examinations”) sets some clear guidelines about how a latent print examiner should describe his or her findings.

So a pre-2009 latent print examiner who appeared in court and said “I am 100% certain that these two fingerprints belong to the same person, to the exclusion of all other persons, because I have never been wrong in the last 25 years” would fail the 2018 DOJ criteria.

(Remember that even experienced examiners have been wrong before, as was shown in the Brandon Mayfield case.)

But would a jury know that?

That’s what CSAFE discussed in a recent post, “AAFS 2022 RECAP: UNDERSTANDING JUROR COMPREHENSION OF FORENSIC TESTIMONY: ASSESSING JURORS’ DECISION MAKING AND EVIDENCE EVALUATION.” Feel free to read the entire article, or if you like you can read my “succinct” excerpt from two portions of the article:

Koolmees and her colleagues examined whether jurors could distinguish low-quality testimony from high-quality testimony of forensic experts, using the language guidelines released by the Department of Justice (DOJ) in 2018 as an indicator of quality….

Only when comparing the conditions of zero guideline violations and four and five violations were there any changes in guilty verdicts, signifying jurors may notice a change in the quality of forensic testimony only when the quality is severely low.

From https://forensicstats.org/blog/2022/03/29/aafs-2022-recap-understanding-juror-comprehension-of-forensic-testimony-assessing-jurors-decision-making-and-evidence-evaluation/

Think about it. While forensic and criminal justice professionals, who have been immersed in forensic science testimony debates for over a decade, would be unimpressed by someone testifying that two fingerprints are a “100% match,” there’s a good chance that a jury would be very impressed with an “expert” who provided such convincing testimony.

So this raises the question of whether the NAS report, for all of its revolutionary effects, will make any real difference in how courtroom testimony is received.

- Will jurors heed the advice from the DOJ guidelines to accurately consider the testimony of a forensic expert?

- Or will the jurors’ “common sense,” based on old Perry Mason and C.S.I. TV shows, prevent jurors from considering expert testimony in the correct light?
